The first time I watched an ‘AI upgrade’ land in a data science team, it didn’t feel like a sci‑fi moment—it felt like a Tuesday outage with better PowerPoints. Our dashboards were green, our models were not, and I learned (the hard way) that AI and data science operations aren’t transformed by hype; they’re transformed by boring things: AI-ready data, sane AI infrastructure, and leadership that can say ‘no’ to shiny demos. This post is my messy notebook of real results and the Trends for 2026 that I think will separate teams who ship from teams who slideshow.

1) The day ‘AI and Data’ stopped being a demo

When the dashboard lied

I remember the moment “AI and Data” stopped feeling like a demo and started feeling like operations. My model dashboard showed 99% healthy. Green lights everywhere. But the business KPI was screaming: conversions were down, support tickets were up, and sales was asking if we “changed something.” That was the classic AI bubble vs. reality gap—the model looked fine because the monitoring was measuring the wrong thing, on the wrong data, for the wrong outcome.

What “real results” actually looked like

In How AI Transformed Data Science Operations: Real Results, the shift wasn’t about a new algorithm. It was about making the work repeatable and boring—in a good way. For us, real results meant:

Fewer broken pipelines because we treated data contracts and schema changes like production incidents.

Faster model iterations because training, evaluation, and deployment were automated and versioned.

Less time arguing about definitions because metrics had owners and a single source of truth.

Why value realization was the bottleneck

The hardest part wasn’t model accuracy. It was incentives, ownership, and the research process in production. Data science wanted experiments. Engineering wanted stability. The business wanted impact this quarter. Until we aligned who owned the KPI, who owned the data, and who had the authority to stop a release, we kept shipping “successful” models that didn’t move the needle.

Tiny tangent: the best ops improvement was a calendar invite

Our most effective change was simple: a weekly data quality triage with the loudest stakeholder in the room.

“If it hurts the KPI, it’s a data incident—treat it like one.”

2) Five Trends for 2026 I’m watching (and why)

In How AI Transformed Data Science Operations: Real Results, the biggest wins didn’t come from flashy demos. They came from tighter AI & Data Science Ops: clearer goals, cleaner data, repeatable workflows, and measurable impact. That’s why these five 2026 trends matter to me.

1) AI bubble deflation: fewer ‘moonshots,’ more spreadsheets (and that’s good)

I’m seeing teams move from “big bets” to basic business math: cost, cycle time, error rates, and adoption. When leaders ask for a simple ROI sheet, projects get sharper, and weak ideas stop early.

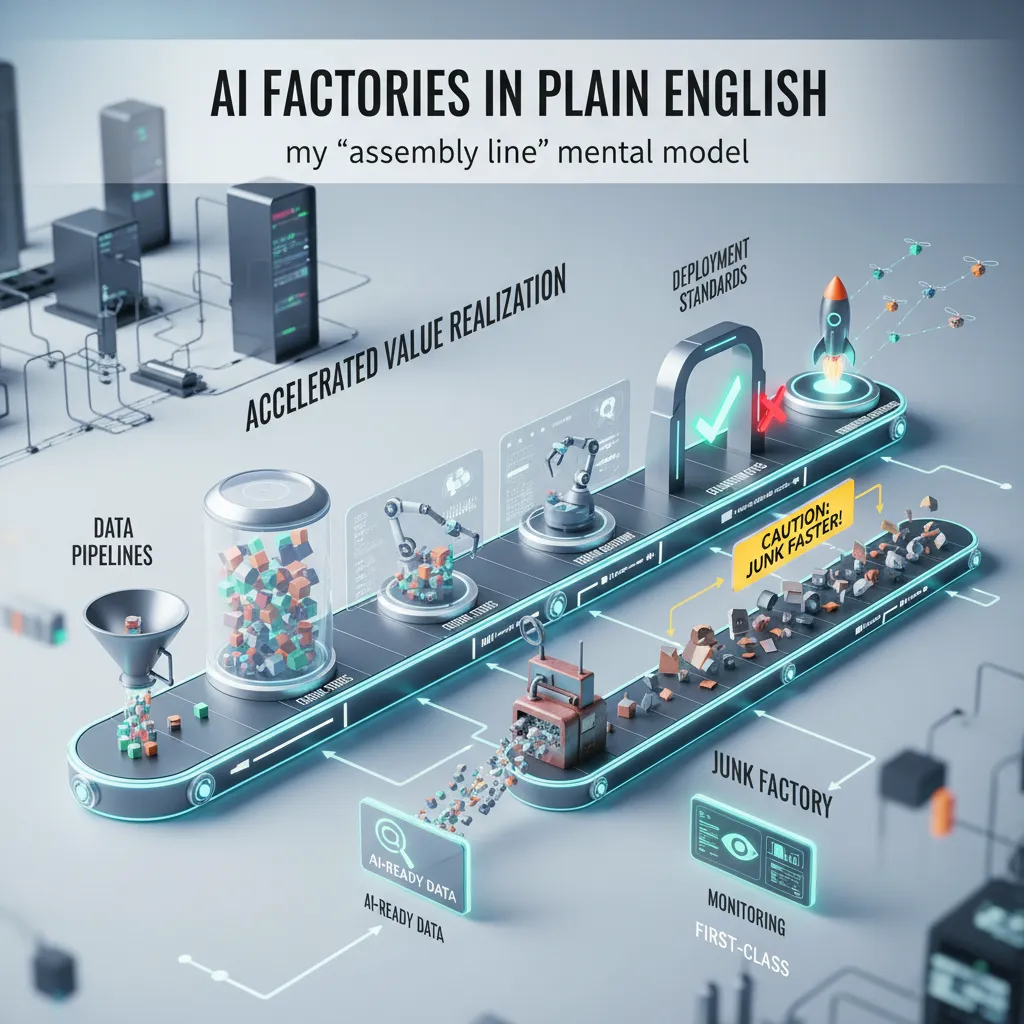

2) AI factories: model building as a manufacturing line

The best organizations are treating ML like production: shared platforms, standard methods, trusted data, and reusable algorithms working as one system. This “AI factory” mindset reduces rework and makes delivery predictable.

3) Generative AI becomes an organizational resource

GenAI is shifting from a toy chatbot to a shared capability: approved models, safe prompts, and clear guardrails. I expect more internal “GenAI services” that teams can plug into without starting from scratch.

4) Agentic AI grows up

Agents will be useful, but not free-range. The pattern I’m watching is: limited permissions, human approvals, and audit trails. In real operations, “who did what, when, and why” matters as much as speed.

5) Data and AI leadership that connects budget to outcomes

The chief data officer (and similar roles) is becoming the person who can link spend to results: which data products reduce churn, which models cut fraud, which workflows save hours. That connection turns AI from experimentation into a managed portfolio.

Operational focus beats hype: reliability, governance, and adoption.

Standardization enables scale across teams and use cases.

Accountability makes AI investments easier to defend.

3) AI Factories in plain English: my ‘assembly line’ mental model

When I say AI factory, I’m not talking about robots on a floor. I’m talking about an assembly line for AI & Data Science Ops: the same repeatable steps, with shared parts, so teams can ship models faster and with less drama.

What an AI factory includes

In my mental model, the factory has a few core stations:

Data pipelines that pull, clean, and version data the same way every time.

Feature stores so common signals (like customer risk, document type, or account age) are reusable, not rebuilt.

Training workflows that run on schedule, track experiments, and keep configs consistent.

Evaluation gates that block weak models (accuracy, bias checks, latency, cost) before they reach users.

Deployment standards for packaging, rollbacks, approvals, and security.

Why it accelerates value realization

From what I’ve seen in AI operations, the big win is fewer bespoke one-offs. Instead of “this project is special,” we build reusable components. That means new use cases start at 60–80% done: the plumbing is already there, so the team focuses on the last mile that creates business value.

Factories don’t remove creativity; they remove repeated busywork.

A cautionary aside

An AI factory can also produce junk faster. If the input data is messy, or if nobody watches model drift, you just scale bad decisions. So AI-ready data and monitoring must be first-class: data quality checks, lineage, alerting, and clear owners.

Mini example: document processing with shared synthetic parsing

Say I need a new document processing use case (invoices today, claims tomorrow). With a shared synthetic parsing pipeline, I can generate labeled samples, test extraction rules, and train a baseline model quickly. I reuse the same evaluation gates and deployment standards, then swap only the document schema and a few prompts/configs.

4) Generative AI as an organizational resource (not my personal sidekick)

In our AI & Data Science Ops work, I saw a clear shift: we moved from “I found a prompt” to “we built a capability.” That change mattered more than any single model upgrade. Instead of everyone running their own chat window, we treated generative AI like an ops platform: shared tools, shared policies, and shared measurement.

The shift: from individual hacks to a team capability

Shared tooling: approved assistants inside our ticketing, docs, and incident workflow

Policies: what data can be used, what must be masked, and what needs human review

Measurement: time-to-doc, incident write-up quality, and reduction in repeat questions

Where it helped Data Science Operations

Generative AI delivered real ops wins when we aimed it at repeatable work. Documentation got faster because the model could draft runbooks, glossary entries, and “how to deploy” steps from templates. Incident summaries improved because it could turn messy timelines into clear narratives. During outages, it helped us do quicker analysis by pulling signals from logs, dashboards, and recent deploy notes—then proposing hypotheses we could test.

“The best use wasn’t replacing judgment; it was speeding up the boring parts so we could focus on decisions.”

Where it backfired (and what we changed)

It also failed in predictable ways: hallucinated root causes, confident but wrong explanations, and stakeholders treating AI output as final. We added review loops: every AI-generated incident summary needed an owner sign-off, and root-cause sections required linked evidence.

Draft with AI

Verify against logs/metrics

Approve with an accountable human

Wild card scenario: a safer AI runbook

Imagine a generative AI runbook that refuses risky changes unless your data quality programs are green. If freshness checks fail, it won’t promote a model. If drift alerts are red, it blocks a feature flag. That’s when generative AI becomes an organizational resource: not my sidekick, but a guardrail for AI operations.

5) Agentic AI: useful, overhyped, and still worth building (carefully)

In my AI & Data Science Ops work, agentic AI has been both a real help and a real distraction. The source story, How AI Transformed Data Science Operations: Real Results, matches what I see in 2026: teams are done chasing demos and are finally measuring outcomes like faster triage, fewer repeat incidents, and cleaner handoffs.

My rule: if an AI agent can’t explain its next step, it shouldn’t get write access—read-only first.

Why 2026 feels like the trough of disillusionment

Expectations have reset. “Autonomous” no longer means “let it run production.” It means: can it reduce toil in data science operations without creating new risk? In practice, we track boring metrics: mean time to acknowledge, ticket bounce rate, and how often humans accept the agent’s suggestion.

Where agents shine in ops

Agents do best when the task is short, bounded, and easy to verify. I get the most value from:

Alert triage: group related alerts, pull recent deploys, summarize likely causes.

Ticket routing: map incidents to the right service owner and on-call rotation.

“First draft” remediation plans: propose steps, commands, and rollback options for review.

I often require a plan before action, like:

next_step: "Check last 3 pipeline runs and compare schema diffs"

Where they struggle (and why I’m cautious)

Long-horizon tasks: they lose context and invent progress.

Messy permissions: real orgs have exceptions, shared accounts, and unclear ownership.

Reinforcement learning loops that drift: “optimize” the wrong proxy and slowly degrade behavior.

So yes, agentic AI is overhyped—but in AI ops and data science ops, it’s still worth building, as long as we start read-only, log everything, and keep humans in the loop.

6) Edge AI + smaller models: the quiet operational win

In 2026, one of the most practical shifts I’ve seen in AI & Data Science Ops is moving the right inference work to the edge. Edge AI matters in ops because it cuts latency, keeps costs predictable, supports data sovereignty, and reduces those constant cloud round-trips that quietly slow teams down. When a model runs near the device or inside a local site, we spend less time chasing network issues and more time improving outcomes.

Why smaller models often win

Smaller models aren’t “worse.” In operations, they’re often more honest about what they can do. They have clearer limits, simpler failure modes, and fewer surprises in production. That makes monitoring and incident response easier. I’ve also found that smaller models encourage better problem framing: we stop asking for a single model to do everything and start designing focused, measurable tasks.

Domain-optimized models: the practical toolkit

The edge approach works best when the model is tuned for a specific domain. The toolkit I rely on most is distillation and quantization:

Distillation: train a smaller “student” model to copy the behavior of a larger “teacher” model.

Quantization: reduce numeric precision (like FP32 to INT8) to speed up inference and lower memory use.

Targeted evaluation: test on real edge conditions (battery, CPU limits, noisy inputs), not just lab benchmarks.

The moment it clicked for me

I still remember the first time an offline model kept working during a network blip. The dashboard didn’t light up with errors, the workflow didn’t stall, and users didn’t notice anything. I relaxed—because reliability wasn’t a hope anymore, it was built into the system.

7) Open Source AI + AI Infrastructure: my pragmatic 2026 checklist

In my AI & Data Science Ops work, open source AI has become the default choice when I need faster iteration and clearer answers. I can inspect weights, training notes, and community issues, which makes debugging less of a guessing game. The surprise is governance: when we document decisions and ship repeatable pipelines, open source can be more controlled than “black box” APIs.

Open source AI: speed, transparency, better governance

I treat open source like a product, not a hobby. That means clear ownership, versioning, and a paper trail. When done right, it supports audits, reduces vendor lock-in, and helps teams align on what the model can and cannot do.

AI infrastructure is getting denser (and more hybrid)

In 2026, my deployments rarely live in one place. I plan for hybrid computing: some workloads in cloud, some on-prem for data gravity, and some on specialized accelerators for cost and latency. The ops win is designing once, then running anywhere with the same observability and access rules.

Quantum assisted: not magic, but on the roadmap

I don’t sell quantum hype. Still, it’s creeping into planning meetings. I track practical signals: logical qubits (not just raw counts), measured accuracy, and efficiency gains on narrow tasks. If it can’t beat classical baselines with clear metrics, it stays in research.

The checklist I actually use

Model cards: purpose, limits, risks, and intended users.

Data lineage: where data came from, consent, and transformations.

Eval harness: repeatable tests for quality, bias, drift, and safety.

Access control: least privilege, secrets management, and audit logs.

Kill switch: a fast rollback path and a hard stop for unsafe outputs.

My rule: if I can’t explain it, test it, and stop it, I don’t ship it.

Conclusion: Real results beat perfect predictions

I keep thinking about the outage story from the beginning. What changed wasn’t a “magic model” that suddenly knew everything. The real shift was operational: clearer ownership, faster incident response, better monitoring, and data pipelines that didn’t break in silence. In other words, the win came from how we ran AI and data science, not from chasing perfect forecasts.

My takeaway for 2026 is simple. If we want repeatable impact, we need to build AI factories—standard ways to ship, observe, and improve models and workflows. We also need to invest in AI-ready data, because messy inputs create messy outcomes no matter how advanced the algorithm looks. And we should treat generative AI as shared infrastructure, like logging or CI/CD: governed, reusable, and available across teams, not trapped in one-off demos.

On agentic AI, I’m a cautious “yes.” Agents can reduce toil and speed up ops work, but only with guardrails: strong evaluations, safe tool access, audit trails, and leadership support when the system says “stop” or “roll back.” Without that, autonomy becomes risk, not leverage.

Here’s my wild card analogy: AI is a restaurant kitchen. Great chefs (models) don’t matter if the pantry (data) is spoiled. The best teams I’ve seen focus on freshness, labeling, and supply lines before they argue about fancy recipes.

If you want to act now, run a 30-day experiment. Pick one ops metric to improve (like time-to-detect or deployment frequency), one data quality fix (like schema checks or missing-value rules), and one governance rule (like model approval or prompt logging). Measure before and after. Real results will teach you more than perfect predictions ever will.

TL;DR: AI and data science ops improved most when we treated generative AI as an organizational resource, built ‘AI factories’ (platform + methods + data + algorithms), invested in AI-ready data, and matured leadership (hello, chief data officer). Agentic AI is real but entering the trough of disillusionment; edge AI and smaller domain optimized models will quietly win on latency, cost, and sovereignty. Open source AI is speeding up governance and capability—if you operationalize it. Plan for denser hybrid computing (even quantum assisted) as AI infrastructure evolves toward 2026.

Comments

Post a Comment