Leading AI Adoption: A People-First 2026 Playbook

The first time I pitched “AI for leadership” to a skeptical VP, I made the rookie mistake of showing demos before answering the real question: “Who’s accountable when it goes weird?” That uncomfortable meeting (and the follow-up coffee that saved the project) taught me that leading AI adoption is less about shiny models and more about clear decisions, guardrails, and a culture that can handle change without panic. In this guide, I’ll walk through what I now treat as the non-negotiables of AI implementation in 2026, plus a few detours I wish someone had warned me about.

1) Start with the awkward question: “Who owns the risk?” (AI governance framework)

The first question I ask before we build anything is the one that makes the room go quiet: “Who owns the risk?” Not “Who owns the model?” Not “Who owns the budget?” Risk. Because the moment AI touches customers, employees, or decisions, the risk is already real—even if the pilot is still a slide deck.

My “coffee-save” moment: governance shows up before the first pilot

I learned this the hard way in a leadership workshop. We were excited about a quick AI assistant to summarize internal notes. Someone asked if it would pull from private HR files. Another person asked who would approve the prompts. I remember holding my coffee and thinking, we’re about to ship something we can’t explain or control. That was my “coffee-save” moment: I paused the pilot and started with governance first.

In the step-by-step approach I use, governance isn’t a blocker—it’s the guardrail that lets teams move faster without guessing. If we can’t answer “who owns the risk,” we can’t scale responsibly.

Build a federated governance model (central + local ownership)

I prefer a federated governance model: a small central Responsible AI function that sets standards, plus clear line-of-business ownership where the AI is used.

- Central Responsible AI function: sets policy, templates, review gates, and minimum controls; supports audits and training.

- Line-of-business owner: owns outcomes, user impact, and day-to-day decisions; signs off on use cases and risk tradeoffs.

- Shared accountability: central team defines “how,” business teams decide “why” and “where,” and both track “what happened.”

This structure keeps AI governance close to the work, while still consistent across the company—an important part of any AI adoption playbook.

Define “safe by default” controls

I don’t rely on good intentions. I rely on defaults. These are the baseline controls I put in place before a tool reaches real users:

- Data minimization: only use the data needed for the task; avoid sensitive fields unless there’s a clear reason.

- Access control: role-based access, least privilege, and tight permissions for prompts, logs, and outputs.

- Red-teaming: test for prompt injection, data leakage, biased outputs, and unsafe advice before launch.

- Monitoring: track drift, error patterns, user complaints, and “near misses” with simple dashboards.

Create a simple escalation path (human override + explainability)

When something goes wrong, people need a clear path—not a committee. I set expectations like:

- Human override: users can stop, correct, or escalate AI outputs without friction.

- Explainability expectations: the system should show sources, confidence limits, or reasoning summaries where possible.

- Escalation owner: one named person per use case who can pause the system and trigger review.

“If we can’t explain it well enough to challenge it, we shouldn’t automate it.”

Wild-card thought: governance is a restaurant kitchen

I treat AI governance like a restaurant kitchen. You can experiment with a new dish, but cleanliness rules aren’t optional. Handwashing, temperature checks, and labeled ingredients exist so customers don’t get hurt. In AI, the equivalent is access control, testing, and monitoring—boring on purpose, and powerful because they keep innovation safe.

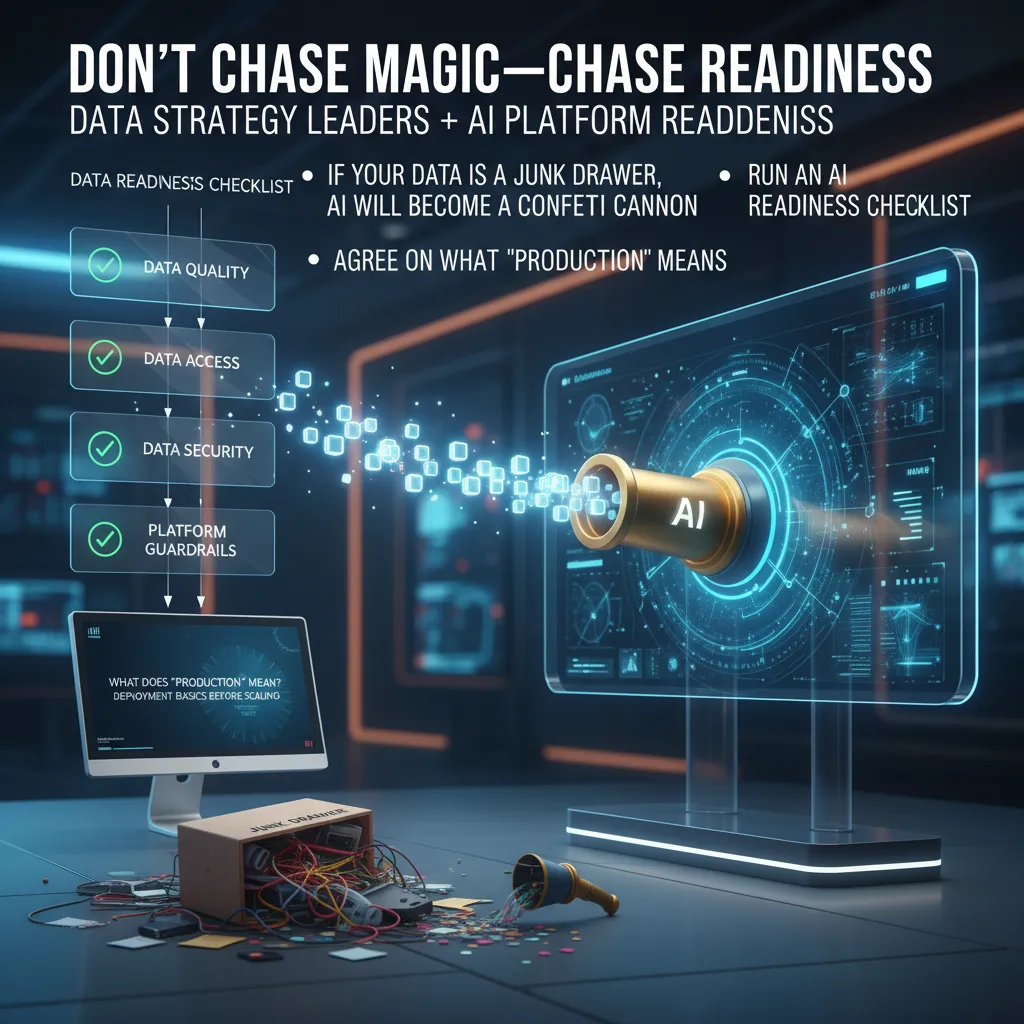

2) Don’t chase magic—chase readiness (Data strategy leaders + AI platform readiness)

In 2026, I see the same pattern repeat: leaders buy shiny AI tools and expect instant results. My rule of thumb is simple: if your data is a junk drawer, AI will become a confetti cannon. It will spray “insights” everywhere—fast, colorful, and mostly useless. Before I let any team scale AI adoption, I push for AI readiness: the boring basics that make AI reliable in real work.

Run an AI readiness checklist (before you run pilots)

When I follow a step-by-step AI implementation approach, I start with a short checklist. Not because I love process, but because it prevents avoidable failures and builds trust with security, legal, and frontline teams.

- Data quality: Do we know what’s accurate, current, and complete? Are key fields consistent across systems?

- Access: Who can see what, and why? Can we grant least-privilege access without blocking work?

- Security: Are we protecting sensitive data, prompts, and outputs? Do we have logging and incident response?

- Platform guardrails: Do we have approved models, approved connectors, and clear rules for retention and usage?

If any one of these is weak, AI doesn’t just “perform worse”—it becomes risky. And risk kills adoption faster than a bad demo.

Agree on what “production” means (before scaling)

One of the biggest AI leadership mistakes I’ve made (and corrected) is assuming everyone shares the same definition of production deployment. For some teams, “production” means a chatbot is live. For others, it means it’s monitored, audited, and supported like any other business system.

Here’s what I align on as AI production deployment basics:

- Clear ownership: a named product owner and a technical owner.

- Monitoring: quality checks, drift signals, and user feedback loops.

- Security controls: access control, data boundaries, and audit logs.

- Change management: versioning for prompts, models, and datasets.

- Support: a path for bugs, rollbacks, and user training.

“If we can’t explain how it’s monitored, we’re not in production—we’re in a demo.”
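One way to make that shared definition concrete is a small readiness gate. The sketch below is a minimal illustration, assuming the five basics above are tracked as simple yes/no flags; the requirement names and the `missing_requirements` helper are hypothetical, not part of any specific platform.

```python
# Hypothetical labels for the five production basics listed above.
PRODUCTION_REQUIREMENTS = (
    "ownership",
    "monitoring",
    "security_controls",
    "change_management",
    "support",
)


def missing_requirements(status: dict) -> list:
    """Return the requirements that are absent or not yet satisfied."""
    return [name for name in PRODUCTION_REQUIREMENTS if not status.get(name, False)]


def is_production(status: dict) -> bool:
    """A use case counts as production only when nothing is missing."""
    return not missing_requirements(status)


# Example: a live chatbot with owners and support, but no monitoring
# and no versioning for prompts or models.
chatbot_status = {
    "ownership": True,
    "monitoring": False,
    "security_controls": True,
    "support": True,
}
gaps = missing_requirements(chatbot_status)
```

In this example `gaps` lists monitoring and change management, which gives the team a specific conversation to have instead of a debate about whether the chatbot is "live".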

Pick one constraint to fix first (I start with access control)

AI platform readiness can feel overwhelming, so I pick one constraint and fix it end-to-end. I usually start with access control because it forces the hard conversations: what data is truly needed, who is accountable, and what “approved use” looks like. It also unlocks safer experimentation—teams can move faster when boundaries are clear.

Even a simple policy helps:

Default: deny. Grant: least privilege. Review: quarterly. Log: always.
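To show how those four rules could translate into something a platform team can test, here is a minimal sketch. It assumes a simple in-memory policy object; the `AccessPolicy` class, the role and resource names, and the 90-day approximation of "quarterly" are all illustrative assumptions rather than a real product's API.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta

# Assumption: "quarterly" is approximated as 90 days.
REVIEW_INTERVAL = timedelta(days=90)


@dataclass
class AccessPolicy:
    last_review: date
    # Maps a role name to the resources it was explicitly granted.
    grants: dict = field(default_factory=dict)
    # Every decision is recorded as (role, resource, allowed).
    audit_log: list = field(default_factory=list)

    def grant(self, role: str, resource: str) -> None:
        """Grant: least privilege, one named resource at a time."""
        self.grants.setdefault(role, set()).add(resource)

    def is_allowed(self, role: str, resource: str) -> bool:
        """Default: deny. Anything not explicitly granted is refused."""
        allowed = resource in self.grants.get(role, set())
        self.audit_log.append((role, resource, allowed))  # Log: always
        return allowed

    def review_due(self, today: date) -> bool:
        """Review: quarterly."""
        return today - self.last_review >= REVIEW_INTERVAL


# Hypothetical role and resource names, for illustration only.
policy = AccessPolicy(last_review=date(2026, 1, 5))
policy.grant("support_agent", "ticket_history")
```

With this setup a support agent can read ticket history, a request for HR files is refused without any extra rule, and both decisions end up in the audit log.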

Small tangent: data strategy leaders are part librarian, part bouncer

The best data strategy leaders I’ve met are part librarian and part bouncer. Librarian: they label, catalog, and make data easy to find. Bouncer: they decide who gets in, under what rules, and when someone needs to leave. That mix is what turns AI from “magic” into a dependable capability teams can actually use.

3) Pick high-ROI use cases (and kill the ‘cool demo’ impulse)

I’ve learned that AI adoption fails most often when we start with what looks impressive, not what moves the business. A “cool demo” can win a meeting and still lose the year. So I push for business-led AI deployment: we start where the outcome is painfully measurable—time saved, revenue gained, errors reduced, tickets closed, churn lowered.

Start where the business outcome is painfully measurable

When I choose use cases, I ask one simple question: “If this works, will finance agree it worked?” If the answer is “maybe,” I keep looking. The best early use cases are boring on the surface and powerful in the numbers—like reducing support handle time, improving invoice matching, or increasing qualified leads.

- Clear owner: one leader accountable for results

- Clear data: the inputs exist and are accessible

- Clear metric: the KPI is already tracked (or can be tracked fast)

Run 2–3 quick-win pilots you can defend to finance

I prefer a quick-win pilots approach: 2–3 small experiments instead of one giant bet. Each pilot should be narrow enough to ship in weeks, not quarters. This keeps trust high and risk low, and it gives us options if one pilot stalls.

- Pilot A: automate a repeatable internal task (ex: meeting notes + action items)

- Pilot B: improve a customer-facing workflow (ex: draft support replies with human approval)

- Pilot C: decision support for a team (ex: sales call coaching insights)

To keep pilots honest, I write a one-page “finance defense” before we build: cost, expected benefit, timeline, and what we will stop doing if it doesn’t work.

Define KPI baseline targets before the build (or you’ll argue later)

If we don’t set baselines first, we end up debating feelings instead of results. I capture the current state and set a target range. For example: “Average handle time is 9.5 minutes; target is 8.0–8.5 within 60 days.”

| KPI | Baseline | Target | Measurement |
|---|---|---|---|
| Cycle time | 10 days | 7–8 days | Weekly report |
| Error rate | 4.2% | < 3% | QA sampling |
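A baseline and target range are easy to encode, which removes most of the later arguing. The following is a rough sketch that uses the handle-time example from above and assumes a lower-is-better KPI; the `KpiTarget` class and its status labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KpiTarget:
    name: str
    baseline: float     # current state, captured before the build
    target_low: float   # ambitious end of the target range
    target_high: float  # minimum acceptable end of the target range

    def status(self, measured: float) -> str:
        """Classify a measurement for a lower-is-better KPI."""
        if measured < self.target_low:
            return "ahead of target"
        if measured <= self.target_high:
            return "on target"
        if measured < self.baseline:
            return "improving"
        return "no improvement"

    def improvement_pct(self, measured: float) -> float:
        """Percentage reduction relative to the baseline."""
        return (self.baseline - measured) / self.baseline * 100


# "Average handle time is 9.5 minutes; target is 8.0-8.5 within 60 days."
handle_time = KpiTarget("Average handle time (min)", 9.5, 8.0, 8.5)
```

A measurement of 8.3 minutes falls inside the range, while 9.0 minutes is better than baseline but still short of the target. For a higher-is-better KPI such as qualified leads, the comparisons would need to be flipped.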

Use “AI workers” automation carefully: teammates, not shadow operators

I like the idea of AI workers, but I design them as teammates with clear roles: they draft, summarize, classify, and recommend. They should not quietly take actions that people can’t see or explain. I make the handoffs explicit: for high-risk steps, the AI proposes and a human decides.

“If no one can explain what the AI did, it’s not automation—it’s hidden risk.”

Scenario: Sales gets an AI agent—what’s the one decision it must never make alone?

If I give sales an AI agent, the one decision it must never make alone is pricing and contract commitments (discounts, terms, legal promises). The agent can suggest options, pull policy, and draft language, but a human must approve anything that changes margin, liability, or customer expectations.
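That rule can be enforced as an approval gate rather than left to good intentions. Below is a minimal sketch, assuming each agent output is wrapped in a proposal object before anything is sent; the action categories, the `Proposal` class, and the `can_execute` check are illustrative names, not a specific agent framework's API.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical action categories that change margin, liability,
# or customer expectations.
HIGH_RISK_ACTIONS = {"discount", "contract_terms", "legal_promise"}


@dataclass
class Proposal:
    action: str
    detail: str
    approved_by: Optional[str] = None  # name of the human approver, if any


def can_execute(proposal: Proposal) -> bool:
    """High-risk proposals need a named human approver; others may proceed."""
    if proposal.action in HIGH_RISK_ACTIONS:
        return proposal.approved_by is not None
    return True


draft_email = Proposal("draft_follow_up", "Summarize the call and next steps")
discount = Proposal("discount", "Offer 15% off the annual plan")
blocked = can_execute(discount)          # no approver yet
discount.approved_by = "sales_manager"   # a human signs off
released = can_execute(discount)
```

The agent can still draft the discount offer, but nothing reaches the customer until a named person has approved it, which also leaves a record of who made the call.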

4) People-first leadership AI: skills, roles, and the messy middle

Why AI leadership roles are changing

In my experience, AI adoption fails when leaders try to be the hero decider: the person who makes every call, approves every model, and “owns” every outcome. AI moves too fast for that. The better pattern is leadership as a system designer: I set the guardrails, define what “good” looks like, and build feedback loops so teams can learn safely. This matches the step-by-step approach I use for implementing AI in leadership: start with clear goals, define responsible use, and create repeatable processes instead of one-off wins.

When I lead AI adoption, I focus on three design questions:

- Decision rights: Who can deploy, who can approve, and who can stop a rollout?

- Risk boundaries: What data is allowed, what is sensitive, and what needs human review?

- Learning loops: How do we measure impact, capture errors, and improve the workflow?

Team structure: centralized expertise, distributed execution

A practical AI operating model blends a small centralized group with strong domain ownership. I like an AI Center of Excellence (AI CoE) that enables, not controls. The CoE provides standards, tools, and coaching, while domain owners (Sales, HR, Ops, Finance) run execution where the work actually happens.

| Role | What they do |
|---|---|
| AI CoE (enablement) | Templates, vendor selection, security guidance, prompt/playbook libraries, evaluation methods |
| Domain owners | Pick use cases, redesign workflows, manage adoption, track outcomes |
| IT + Security | Access control, data policies, monitoring, incident response |
| Legal/Compliance | Risk review, regulatory alignment, customer and employee safeguards |

This structure keeps AI governance real without turning it into a bottleneck. It also supports “leading AI adoption” as a business change, not just a tech project.

Culture adoption: an AI champions network (not a dead committee)

I’ve had the best results with a lightweight AI champions program. Not a committee that never meets—more like a network with a simple rhythm:

- One champion per team (volunteer or nominated)

- 30-minute monthly sync with the AI CoE

- One shared channel for wins, failures, and reusable prompts

- Two “office hours” slots per month for drop-in help

Champions translate AI into local language: “Here’s how this helps our backlog,” not “Here’s what the model can do.”

Informal aside: the best change agents in my last rollout were two middle managers who hated the tool at first. Once they saw it reduce rework and make handoffs clearer, they became the loudest advocates—because they were trusted and they spoke honestly.

Training plan: leadership skills + a shared language

People-first AI leadership needs training that is short, practical, and tied to real workflows. I run two tracks:

- AI leadership training: use-case selection, responsible AI basics, change management, and how to review AI outputs

- Optional certification (CAITL™): a common language for execs who need alignment on terms, risk levels, and decision frameworks

I also teach one simple rule: AI output = draft, not truth. That mindset keeps quality high while teams build confidence in the messy middle of adoption.

5) A 90-day AI implementation roadmap for 2026 (so you stop “planning” forever)

If you want real AI adoption in 2026, you need a short runway and clear choices. In my experience, teams don’t fail because they lack ideas—they fail because they never move from discussion to delivery. This 90-day roadmap is built to create momentum while keeping people, trust, and risk management at the center.

Day 0–14: assess and align

In the first two weeks, I focus on alignment, not tools. I start by setting governance: who can approve use cases, what data is allowed, and what “good” looks like. Then I pick one or two KPIs that matter to the business and to the team doing the work—think cycle time, customer response time, or quality checks. Finally, I name owners: an executive sponsor, a product owner, a technical lead, and a risk/privacy partner. If ownership is vague, progress will be vague too.

Day 15–45: prove value fast

Next, I run quick-win pilots that are small enough to finish and visible enough to earn trust. I give teams guardrailed access to approved AI tools and data, with clear do’s and don’ts. Every week, we hold short demos. This is important: weekly demos turn AI from a rumor into something people can see, question, and improve. I also track the KPI from day one, so we can say, “Here’s what changed,” not “It feels better.”

“Speed comes from focus: one team, one workflow, one measurable outcome.”

Day 46–75: ship and scale

By this stage, I stop treating AI like an experiment and start treating it like a product. We move the best pilot into production deployment with real users, real support, and real accountability. This is where MLOps matters: version control, access control, secure environments, and repeatable releases. I also set up monitoring for accuracy, drift, cost, and user feedback. If the model’s output changes over time, we need alerts and a clear process to pause, fix, and re-release.
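As a rough illustration of what an alert on changing output could look like, the sketch below compares recent quality scores against a baseline window. It assumes the team already produces a per-sample quality score between 0 and 1 (for example from QA sampling); the `drift_alert` function and the 0.05 threshold are hypothetical choices, not a standard.

```python
from statistics import mean


def drift_alert(baseline_scores, recent_scores, max_drop=0.05):
    """Return True when average quality fell by more than max_drop.

    Both arguments are sequences of quality scores between 0 and 1.
    """
    return mean(baseline_scores) - mean(recent_scores) > max_drop


# Scores captured at launch versus two later review windows.
launch_scores = [0.92, 0.90, 0.91]
stable_week = [0.90, 0.89, 0.91]
degraded_week = [0.84, 0.83, 0.85]

should_pause = drift_alert(launch_scores, degraded_week)
```

A check this simple will miss subtle shifts, but it is enough to trigger the pause, fix, and re-release process before users notice the problem on their own.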

Day 76–90: expand capability

In the final stretch, I build the system that makes adoption stick. I appoint AI champions in each function, not as “tool experts,” but as peer coaches who help teams apply AI safely. We create templates for prompts, evaluation checklists, and approval steps, so teams can reuse what works. Then we select a second wave of use cases based on the same rules: measurable KPI, clear owner, approved data, and a plan to ship.

As I close out the 90 days, I remind myself that speed is a leadership decision. The goal is not to move fast by skipping controls—it’s to move fast by making decisions early, setting guardrails, and learning in public. When we treat risk management as part of delivery (not a blocker), we earn trust, and trust is what turns AI from a pilot into a habit.

TL;DR: If you’re implementing AI in leadership in 2026, start with a federated AI governance framework and responsible AI principles, get data/platform readiness in order, pick high-ROI use cases for quick wins, design a people-first operating model, then scale with MLOps, security, and monitoring, using KPIs, training, and culture work to keep trust intact.
