Last week I opened my “AI news” tab intending to skim one headline. Forty minutes later I’d wandered through an MIT Sloan piece, an IBM predictions page, and Microsoft’s “trends to watch,” with a messy notebook full of arrows, question marks, and one underlined phrase: “AI bubble deflation.” That’s when it clicked for me: Data Science AI news isn’t just releases anymore; it’s a map of where budgets, teams, and real work are heading. In this post I’m stitching together the latest updates and releases into five trends for 2026, with a few detours (because the detours are where the truth usually hides).

1) My messy method for reading Data Science AI news

When I scan Data Science AI news and “latest updates and releases,” I’m not trying to read everything. I’m trying to spot what will change my work next week. My first filter is a simple signal vs. glitter checklist: does this update change how I ship models, or just how I talk about them? If it only adds new buzzwords, I bookmark it and move on.

My quick triage: four buckets

I sort each headline into one of these buckets. It keeps me from getting pulled into hype cycles.

Infrastructure moves: new GPUs, cheaper inference, better vector search, faster pipelines.

Org changes: team reshuffles, new “AI platform” groups, policy shifts, vendor lock-in risks.

Capability leaps: real jumps in accuracy, tool use, multimodal, or reliability.

Quiet research wins: small papers that make evals cleaner, training safer, or monitoring easier.

A mini-anecdote: the “Agentic AI” demo that failed fast

I once watched an Agentic AI demo that looked magical: it planned tasks, called tools, and “handled” a workflow end-to-end. I was ready to rewrite my roadmap. Then I tried a boring real-world test: a permissions edge case. The agent attempted an action it wasn’t allowed to do, didn’t recover well, and produced a confident but wrong status update. That moment reminded me that deployment details beat stage demos.

What I log in my notebook (so I can act later)

For each release, I write down a few fields so I can compare updates over time:

Release date (and version, if it exists)

Who benefits: developers, data teams, end users, or compliance

What gets cheaper: time, compute, or risk
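
If you would rather keep this log in code than on paper, here is a minimal sketch of one entry. The class names, the field names, and the `is_signal` rule are my own shorthand for the buckets and fields above, not a standard format.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class Bucket(Enum):
    """The four triage buckets described earlier in this section."""
    INFRASTRUCTURE = "infrastructure move"
    ORG_CHANGE = "org change"
    CAPABILITY_LEAP = "capability leap"
    RESEARCH_WIN = "quiet research win"


@dataclass
class ReleaseNote:
    title: str
    bucket: Bucket
    release_date: date
    version: Optional[str]        # many announcements have no version
    who_benefits: list[str]       # developers, data teams, end users, compliance
    what_gets_cheaper: list[str]  # time, compute, or risk

    def is_signal(self) -> bool:
        # Hypothetical rule of thumb: with no named beneficiary or no
        # concrete saving, the update stays in the "glitter" pile.
        return bool(self.who_benefits) and bool(self.what_gets_cheaper)
```

Keeping entries in one shape is what makes it possible to compare updates months apart.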

AI news feels like weather: forecasts matter, but you still need a jacket (governance) on your commute (deployment).

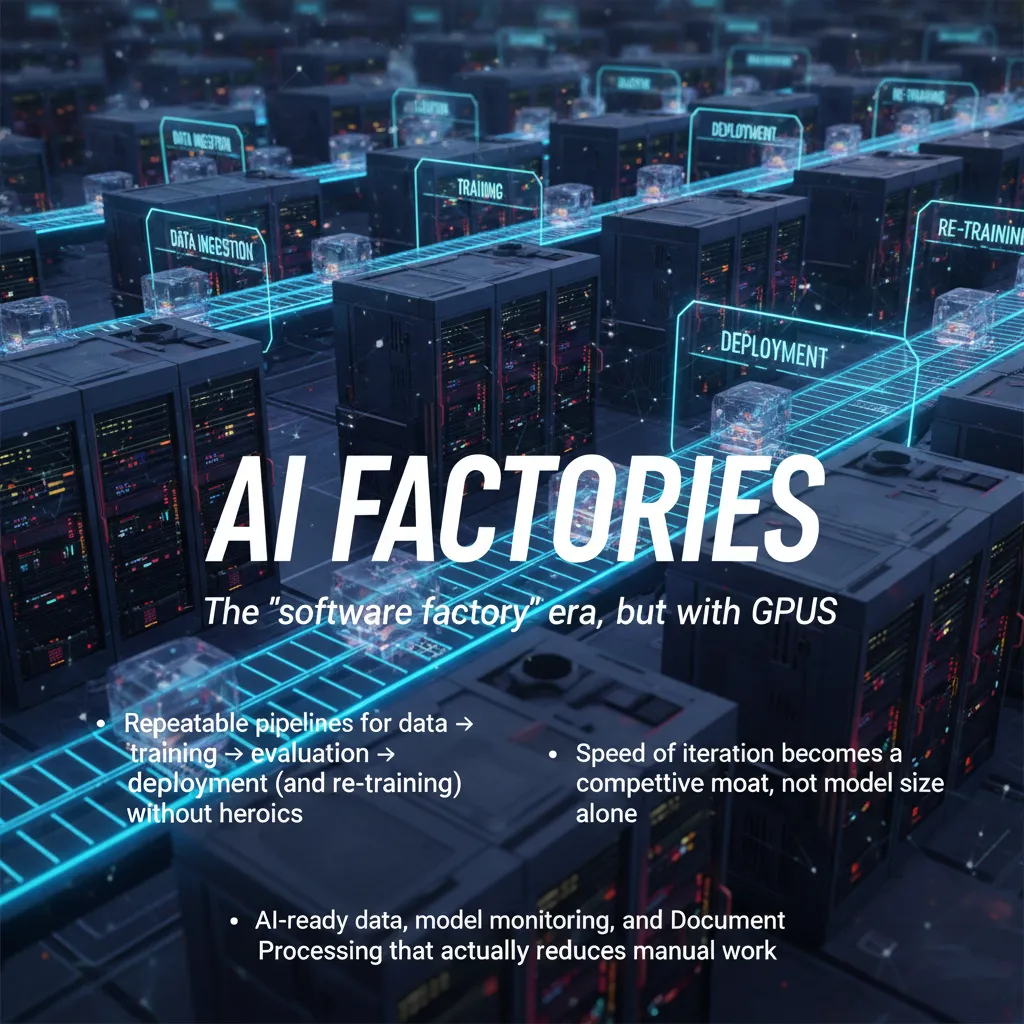

2) AI Factories: the ‘software factory’ era, but with GPUs

In the Data Science AI News updates I’m tracking, one theme keeps repeating: teams are moving from “we built a model” to “we run an AI factory.” By AI factory, I mean a repeatable pipeline that turns data → training → evaluation → deployment, and then loops back for re-training when reality changes. The key idea is no heroics: fewer late-night fixes, fewer one-off scripts, more predictable releases.
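
To make the loop concrete, here is a rough sketch of one factory cycle with an evaluation gate. The function name and the `train`, `evaluate`, and `deploy` callables are placeholders I made up; a real pipeline would plug in its own steps.

```python
from typing import Callable


def factory_cycle(
    train: Callable[[], dict],
    evaluate: Callable[[dict], float],
    deploy: Callable[[dict], None],
    current_score: float,
    min_gain: float = 0.0,
) -> float:
    """Run one train -> evaluate -> deploy cycle.

    Returns the score of whatever is in production afterwards.
    """
    candidate = train()
    score = evaluate(candidate)
    # The gate: a candidate ships only if it beats production by min_gain.
    if score > current_score + min_gain:
        deploy(candidate)
        return score
    # Otherwise production stays untouched and the loop simply runs again later.
    return current_score
```

The point of the gate is that "no release this cycle" is a normal outcome, not an emergency.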

Why it’s showing up in 2026 talk

In 2026, the competitive moat is often iteration speed, not just model size. If you can ship a safe improvement every week (or every day), you learn faster than a team that ships a “big model upgrade” once a quarter. GPUs matter here, but mostly because they make the factory line move: faster experiments, faster fine-tunes, faster evaluation runs.

The boring-but-decisive work

The factory only works if the inputs and checks are solid. The “boring” parts are what decide whether AI helps or becomes a cost center:

AI-ready data: clean schemas, clear ownership, versioned datasets, and reliable labels.

Model monitoring: drift, latency, cost, and quality signals that trigger action.

Document Processing that truly reduces manual work: extracting fields, routing exceptions, and keeping humans for edge cases.
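
For the monitoring bullet, "signals that trigger action" can start very small. This sketch flags a feature when its recent mean moves too far from a baseline, measured in baseline standard deviations. The function names, the 3.0 threshold, and the "retrain-review" label are assumptions for illustration; production drift checks usually use richer statistics.

```python
from statistics import mean, stdev


def drift_score(baseline: list[float], recent: list[float]) -> float:
    """Shift of the recent mean, in units of baseline standard deviation."""
    spread = stdev(baseline)  # needs at least two baseline values
    shift = abs(mean(recent) - mean(baseline))
    if spread == 0:
        return 0.0 if shift == 0 else float("inf")
    return shift / spread


def check_feature(baseline: list[float], recent: list[float], threshold: float = 3.0) -> str:
    """Map the drift score to an action label instead of a dashboard number."""
    return "retrain-review" if drift_score(baseline, recent) > threshold else "ok"
```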

One-off project vs. factory line mindset

| One-off model project | AI factory line |
|---|---|
| Success = “model shipped” | Success = “model improves safely over time” |
| Roles blur; one person owns everything | Clear roles: data, ML, platform, QA, ops |
| KPIs: accuracy in a notebook | KPIs: time-to-iterate, incidents, ROI, adoption |

Factory thinking is subtraction, too

Small tangent: I once watched a team ship faster by deleting two dashboards and adding one alert. That’s AI factory thinking—remove noise, keep the signal, and automate the response. Sometimes the best pipeline improvement is simply fewer moving parts.

3) Agentic AI & Super Agents: useful… after we stop overpromising

In the Data Science AI News stream of updates and releases, “agentic AI” keeps showing up as the next big leap. My take: it’s overhyped in the short term, mostly because demos look smooth while real workflows are messy. But I also think that within five years, agentic systems will become normal software for multi-step work: less “magic coworker,” more “reliable automation you configure.”

Where agentic AI already helps me

Drafting analysis plans: turning a vague question into steps, checks, and data needs.

Running multi-agent toolchains: one agent gathers context, another writes code, another reviews results.

Cleaning up experiment notes: summarizing runs, tagging decisions, and formatting logs for later.

Where it still faceplants

The gap between “can call tools” and “can do work” is still big. The failures I see most:

Permissions: agents can’t access the right folders, APIs, or environments, so they stall or improvise.

Flaky tools: one timeout or version mismatch and the whole chain breaks.

Confident wrong actions: the classic “it did the wrong thing confidently” problem, but now with side effects.

Super Agents: promising, if guardrails are real

When people say “Super Agents,” I hear: strong reasoning + multi-tool orchestration across data, code, docs, and dashboards. That can be useful for complex tasks, but only if guardrails are real: scoped permissions, audit logs, human approval gates, and clear rollback paths.
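
Here is roughly what I mean by "real" guardrails, as a sketch rather than a framework. `GuardedAgent` and its fields are hypothetical names; it covers scoped permissions, a human approval gate, and an audit log, and leaves rollback out entirely.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class GuardedAgent:
    allowed_tools: set[str]               # scoped permissions
    needs_approval: set[str]              # tools with side effects
    approve: Callable[[str, dict], bool]  # human approval gate
    audit_log: list[dict] = field(default_factory=list)

    def call(self, tool: str, args: dict, run: Callable[[dict], object]):
        """Run a tool only if it is in scope and, where required, approved."""
        if tool not in self.allowed_tools:
            self._log(tool, args, "denied")
            raise PermissionError(f"tool {tool!r} is outside this agent's scope")
        if tool in self.needs_approval and not self.approve(tool, args):
            self._log(tool, args, "rejected")
            return None
        result = run(args)
        self._log(tool, args, "executed")
        return result

    def _log(self, tool: str, args: dict, outcome: str) -> None:
        # Every attempt is recorded, including the ones that never ran.
        self.audit_log.append({"tool": tool, "args": args, "outcome": outcome})
```

The design choice that matters is that denied and rejected calls are logged too, so the audit trail shows what the agent tried, not only what it did.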

“Agents will feel powerful when they can safely say: I’m not sure—please confirm.”

Hypothetical scenario I actually want

Imagine an agent that handles a month-end close: pulls exports, reconciles totals, flags anomalies, and drafts the report. It runs smoothly—until it hits one weird CSV with shifted columns. Instead of guessing, it pauses and asks a human a simple question, then continues. That’s the version of agentic AI I’m tracking.
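
The "pause instead of guessing" step is mostly plain validation. A minimal sketch, assuming a made-up three-column export; `EXPECTED_COLUMNS`, `NeedsHumanReview`, and `load_export` are illustrative names, not part of any agent framework.

```python
import csv
import io

EXPECTED_COLUMNS = ["account", "period", "amount"]  # hypothetical schema


class NeedsHumanReview(Exception):
    """Raised instead of guessing when an export does not match the schema."""


def load_export(text: str) -> list[dict]:
    reader = csv.DictReader(io.StringIO(text))
    if reader.fieldnames != EXPECTED_COLUMNS:
        raise NeedsHumanReview(f"unexpected header: {reader.fieldnames}")
    rows = []
    for line_no, row in enumerate(reader, start=2):  # line 1 is the header
        try:
            row["amount"] = float(row["amount"])
        except (TypeError, ValueError):
            # Shifted columns usually show up as text where a number belongs.
            raise NeedsHumanReview(
                f"line {line_no}: amount is not numeric: {row['amount']!r}"
            )
        rows.append(row)
    return rows
```

An agent wrapped around this would catch `NeedsHumanReview`, ask its question, and resume once someone answers.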

4) Smaller Models, Mixture of Experts, and the quiet power of ‘good enough’

In the Data Science AI News: Latest Updates and Releases stream I’m tracking, one pattern keeps repeating: teams are shipping with smaller models more often than you’d expect. Not because they don’t like big models, but because smaller ones are cheaper, faster, and easier to run in real products—especially on Edge AI, mobile, or any constrained environment where memory, battery, and network are limited.

Why smaller models keep popping up

When I look at real deployments, “good enough” accuracy plus predictable performance wins. Smaller models reduce cold-start time, lower GPU needs, and make it simpler to scale without surprise costs. They also make it easier to test, monitor, and roll back changes.

Mixture of Experts (MoE), coworker-style

I explain Mixture of Experts like this: instead of waking the whole brain for every question, you route the work to the right specialists. Inside the model, a small router scores a set of expert sub-networks and only the top few run (in most MoE models this choice is made per token, not per request). That means you can get strong quality without paying the full compute cost every time.

“Don’t run the entire model for every prompt—send it to the expert that fits.”
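
A toy version of that routing, to show the mechanics only. Here the experts are plain Python functions and the router scores are passed in by hand; in a real MoE model the router is learned and the experts are sub-networks inside the model, so treat every name below as an assumption.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x: float, router_scores: list[float], experts: list, k: int = 2):
    """Run only the k highest-scoring experts and blend their outputs."""
    ranked = sorted(range(len(experts)), key=lambda i: router_scores[i], reverse=True)
    top = ranked[:k]
    # Weights are renormalised over the chosen experts only.
    weights = softmax([router_scores[i] for i in top])
    output = sum(w * experts[i](x) for w, i in zip(weights, top))
    return output, top
```

The experts outside `top` are never called, which is where the compute saving comes from.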

Domain models: “knows our documents” beats “knows everything”

Another trend I’m seeing is a shift toward domain models and tight retrieval pipelines. Many teams would rather have a model that understands their policies, tickets, and product docs than a general model trained on the whole internet. In practice, this often improves trust, reduces hallucinations, and makes evaluation clearer.

Open Source AI is speeding up decisions

The pace of open source AI—including multimodal reasoning models—makes it harder to justify waiting for a single vendor roadmap. If a new open model supports vision + text today, teams can prototype now, then decide later whether to host, fine-tune, or buy managed inference.

My “best model” confession

I used to equate best model with best outcome—then the latency bills arrived. Once you price in response time, throughput, and reliability, “good enough” can be the most professional choice.

Smaller models: lower cost, faster UX, edge-friendly

MoE: route to specialists, save compute

Domain focus: better answers on your data

Open source: faster iteration than vendor-only plans

5) Spatial AI, Physical AI, and the ‘real world’ comeback tour

Spatial AI is the trend I didn’t expect to care about—until I saw how it changes the way stores, warehouses, and campuses can sense behavior. In the latest data science AI news cycle, I keep noticing updates that move beyond chat and text, and into systems that understand where things happen: foot traffic patterns, shelf interactions, queue flow, and even how people navigate tight spaces.

From “bigger models” to “models that move”

What I’m tracking in 2026 is a clear priority shift: not just scaling LLMs, but building Physical AI and Robotics AI that can see, plan, and act. Spatial reasoning, computer vision, and sensor fusion are becoming the practical layer that connects AI to the real world. It’s less about perfect answers and more about safe, useful behavior.

A concrete use case: fewer out-of-stocks (and less awkward aisle dance)

One example that feels very “real world”: combining shelf sensors, cameras, and lightweight models to reduce out-of-stock events. Instead of waiting for a manual check, the system can flag low inventory, detect misplaced items, and predict when a shelf will go empty.

Sensors detect stock levels or product movement

Vision models confirm what’s actually on the shelf

Forecasting predicts replenishment timing

Task routing sends the right alert to the right worker

The side benefit is small but real: fewer shoppers hovering in the same spot doing that awkward aisle dance while everyone searches for the last item.
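
The four steps above can be sketched in a few lines. This is a deliberately naive version: the forecast is a straight-line depletion rate between the first and last sensor reading, the vision check is reduced to a boolean, and `hours_until_empty`, `route_alert`, and the two-hour lead time are all invented for illustration.

```python
from typing import Optional


def hours_until_empty(readings: list[tuple[float, int]]) -> Optional[float]:
    """Estimate hours until a shelf is empty from (hour, units) readings."""
    if len(readings) < 2:
        return None
    (t0, n0), (t1, n1) = readings[0], readings[-1]
    if t1 <= t0 or n1 >= n0:
        return None  # no depletion observed, nothing to forecast
    rate = (n0 - n1) / (t1 - t0)  # units sold per hour
    return n1 / rate


def route_alert(
    shelf_id: str,
    readings: list[tuple[float, int]],
    vision_confirms_low: bool,
    lead_time_hours: float = 2.0,
) -> Optional[str]:
    """Alert only when the forecast and the camera agree the shelf is running out."""
    eta = hours_until_empty(readings)
    if eta is not None and eta <= lead_time_hours and vision_confirms_low:
        return f"restock {shelf_id} within {eta:.1f}h"
    return None
```

Requiring the sensor forecast and the vision check to agree is one cheap way to keep false alerts from training workers to ignore the system.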

The risk I worry about: surveillance creep

My main concern is surveillance creep. Just because we can measure everything doesn’t mean we should. Spatial analytics can easily slide from “optimize operations” into “track people.” I look for privacy-by-design signals: on-device processing, short retention windows, and clear rules about what is not collected.

Small aside: the first time I saw a robot fail gracefully, I trusted it more than the “perfect” demo.

6) Hybrid Computing & Quantum AI: the part I’m curious about (and cautious with)

From what I’m seeing in Data Science AI News updates, the most serious work is moving toward hybrid computing: supercomputing + AI, and (eventually) quantum computing for specific simulation tasks. I don’t think this is hype in the same way some “AI magic” headlines are. It’s more like a practical stack: use the right tool for each part of the job, then connect the outputs into one research workflow.

Where hybrid computing looks real to me

In labs doing climate, physics, and engineering work, simulations are already expensive. Adding AI can speed up parts of the pipeline—like surrogate models that approximate slow calculations. Over time, quantum may plug into narrow steps where it can help, but I’m not betting on a full “quantum replaces everything” story.

Supercomputing for large-scale simulation and data generation

AI models for faster approximations, pattern finding, and uncertainty estimates

Quantum (later) for targeted problems that can be benchmarked
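
To show what "surrogate" means in the smallest possible form: run the slow simulation at a few points, then answer everything in between cheaply. Real surrogates are usually learned models; this sketch swaps in plain linear interpolation as a stand-in, and `build_surrogate` is a name I made up.

```python
from bisect import bisect_left
from typing import Callable


def build_surrogate(simulate: Callable[[float], float], grid: list[float]):
    """Run the slow simulation once per grid point; return a fast approximation."""
    xs = sorted(grid)
    ys = [simulate(x) for x in xs]

    def surrogate(x: float) -> float:
        if not xs[0] <= x <= xs[-1]:
            # Refuse to extrapolate: outside the samples, run the real thing.
            raise ValueError("outside the sampled range")
        i = bisect_left(xs, x)
        if xs[i] == x:
            return ys[i]
        # Linear interpolation between the two neighbouring samples.
        t = (x - xs[i - 1]) / (xs[i] - xs[i - 1])
        return ys[i - 1] + t * (ys[i] - ys[i - 1])

    return surrogate
```

The refusal to extrapolate is the benchmarkable part: inside the sampled range you can measure the error against the real simulation, outside it you cannot.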

Quantum AI: easy to oversell, so I look for benchmarks

Quantum AI is the part I’m most cautious with. It’s easy to claim breakthroughs without clear comparisons. I pay attention when the win is narrow and measurable, like:

Optimization problems with clear baselines

Chemistry simulations where accuracy can be tested

Materials discovery with repeatable evaluation metrics

My filter: if it can’t be benchmarked, it’s hard to trust.

Why this matters to Data Science

Better simulations can create better training data, especially when real-world data is limited, costly, or slow to collect. That can improve model robustness and help us test ideas before we run real experiments.

Hypothesis generation (not just paper summaries)

I’m also tracking tools where AI helps researchers design experiments: suggesting variables to test, proposing candidate molecules, or ranking which trials are most informative. That’s a step beyond “summarize this PDF.”

My caution: compute can’t fix a shaky pipeline

If your data lineage, labeling, or validation is weak, fancy compute just produces wrong answers faster. I still treat data quality + evaluation as the foundation, even in the most advanced hybrid computing setups.

7) Conclusion: the AI bubble deflation I’m oddly rooting for

If the AI bubble deflation really hits in 2026, I’m not sure I’ll panic. I think I’ll feel relief. A cooler market tends to force clearer thinking: less hype, fewer “look what we can demo” moments, and more pressure to prove value in real Data Science work. In the latest Data Science AI News cycle of updates and releases, I keep seeing the same pattern—big promises, fast launches, and a lot of attention. A reset could push us toward value realization instead of vanity metrics.

My personal rule for 2026 is simple: if a release doesn’t make Data Science workflows measurably calmer, it’s not “news,” it’s noise. Calmer means fewer broken pipelines, fewer surprise costs, fewer handoffs, fewer late-night alerts, and less time spent translating between tools. It also means faster iteration with the same or better quality. If I can’t point to a real reduction in friction, I try not to let the announcement take up space in my head.

That’s why I’m tracking a handful of trends that feel grounded. AI Factories matter because repeatability is what turns experiments into products. Agentic AI matters when it improves workflows, not when it just adds another layer of automation to babysit. Smaller models matter because efficiency is often the difference between “cool” and “shippable.” Open source AI matters because velocity and transparency help teams move without waiting for permission. Spatial/physical AI matters because it connects models to what actually happens in stores, warehouses, and other physical spaces. And hybrid computing matters because serious science and serious scale rarely live in one place.

Final note to self: keep one foot in research, one foot in operations—because that’s where real leverage lives.

If you want a simple habit for 2026, try this: pick one trend, run a 30-day experiment, and write down what broke. What breaks is not failure. It’s your roadmap.

TL;DR: AI Trends 2026 looks less like bigger models and more like better systems: AI factories, agentic AI that’s useful (not magical), smaller domain models, open-source acceleration, and AI infrastructure tuned for value realization—plus a sober look at bubble deflation.
