Six Data Science Trends 2025–2026 (AI Bubble, Agentic AI)

Last winter I watched a team celebrate a “finished” model… only to spend the next six weeks arguing about data definitions and who owned the pipeline. That little fiasco is why I’m obsessed with Data Science Trends 2025–2026: the flashy demos (hello, Generative AI) are real, but the winners will be the people who turn hype into boring, repeatable systems. In this post, I’m putting the loud trends next to the quiet ones, because in 2026, quiet usually pays the bills.

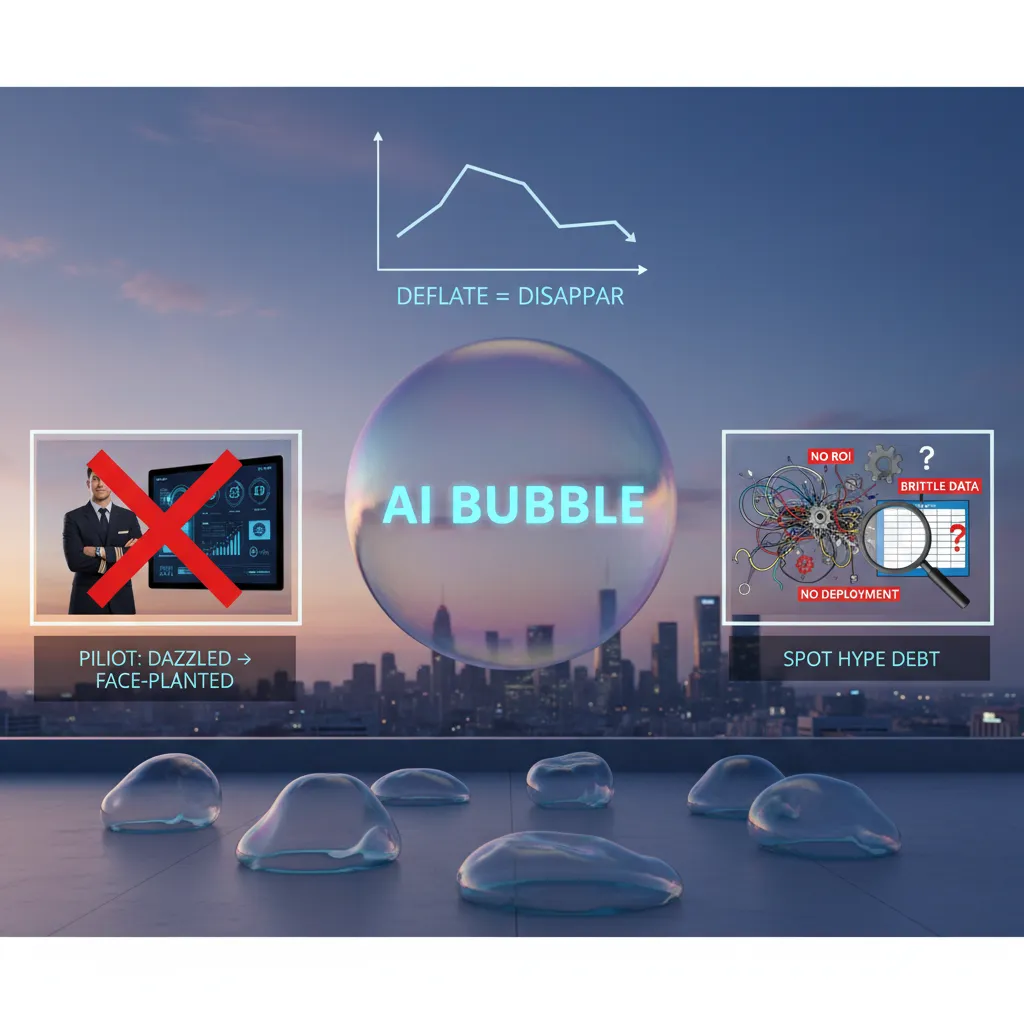

1) AI Bubble (and why I’m oddly relieved)

Here’s my quick gut-check for 2025–2026: the AI Bubble is real. Budgets got ahead of basics, and some teams promised “magic” instead of measurable value. But when a bubble deflates, it doesn’t mean AI will disappear. It usually means the market stops rewarding vague demos and starts rewarding systems that work in production—securely, legally, and at a cost that makes sense.

The pilot that wowed leadership… then fell apart

I’ve lived this. We ran a pilot that looked incredible in a leadership review: fast answers, slick UI, and a few “wow” moments. Then reality hit. Governance questions showed up late (data access, retention, audit logs). Costs climbed once we moved from a small test to real usage. And the model’s outputs were hard to explain when stakeholders asked, “Why did it say that?” The pilot didn’t fail because the tech was bad. It failed because we treated deployment like an afterthought.

How I spot “hype debt” in AI projects

What’s changing is not just the models but the discipline around them, so I now look for early signs of hype debt: the gap between what a project claims and what it can actually deliver.

- Unclear ROI: “It’s strategic” is not a metric. I want a target like reduced handle time, fewer defects, or faster cycle time.

- Brittle data: If the data is messy, missing, or locked in silos, the model will look smart in a demo and dumb in real life.

- No deployment path: If nobody can explain monitoring, rollback, access control, and ownership, it’s not a product—just a prototype.

My practical move for 2025: a “minimum credible AI” scorecard

Before scaling, I’m pushing a simple scorecard. Nothing fancy—just enough to force clarity.

- Use case: one sentence, one user, one decision.

- Value: expected impact and how we’ll measure it.

- Data readiness: sources, quality, and permissions.

- Risk & governance: privacy, compliance, and audit needs.

- Ops plan: monitoring, cost limits, and human fallback.
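To make the scorecard harder to skip, I sometimes write it down as a tiny data structure. This is only a sketch: the field names mirror the five bullets above, and the "every section must be filled in" rule is my own assumption, not a standard.

```python
from __future__ import annotations

from dataclasses import dataclass, fields


@dataclass
class Scorecard:
    """One card per proposed AI project; fields mirror the five bullets."""
    use_case: str          # one sentence, one user, one decision
    value: str             # expected impact and how it will be measured
    data_readiness: str    # sources, quality, permissions
    risk_governance: str   # privacy, compliance, audit needs
    ops_plan: str          # monitoring, cost limits, human fallback


def missing_sections(card: Scorecard) -> list[str]:
    """Return the names of sections left empty or whitespace-only."""
    return [f.name for f in fields(card) if not getattr(card, f.name).strip()]


def ready_to_scale(card: Scorecard) -> bool:
    """Hypothetical rule: no blank sections before scaling."""
    return not missing_sections(card)


# Illustrative draft with two sections nobody has filled in yet.
draft = Scorecard(
    use_case="Support agents decide whether to escalate a ticket.",
    value="Reduce average handle time, measured weekly against a baseline.",
    data_readiness="",
    risk_governance="Tickets contain PII; audit log required.",
    ops_plan="",
)
print(missing_sections(draft))
print(ready_to_scale(draft))
```

The point is not the code itself. A blank field is a conversation the team has not had yet.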

AI is like sourdough starter: amazing, but only if you keep feeding it the right inputs—clean data, clear goals, and steady care.

2) AI Factories: the unsexy revolution in AI Data

When I say “AI Factories”, I’m not talking about a single tool. I mean an assembly line for AI work: data comes in, gets cleaned and labeled, turns into features, feeds training runs, gets evaluated, and then ships to production with monitoring. It’s the opposite of “one brilliant model built by one brilliant person.” It’s repeatable, boring, and fast.

What I mean by “AI Factories”

In practice, an AI Factory is a set of connected pipelines and standards that make model development feel like manufacturing. The goal is simple: reduce cycle time from idea → experiment → deployment, without breaking quality.

- Data pipelines that refresh on a schedule (or near real time)

- Feature pipelines that are shared across teams, not rebuilt per project

- Training pipelines that can run on demand with tracked configs

- Evaluation harnesses that test models the same way every time

- Deployment pipelines with rollback, monitoring, and alerts

Why AI Factories show up now (faster, fewer heroics)

Leaders are pushing for faster delivery, but they also want fewer late-night emergencies. That’s why AI Factories are showing up in 2025–2026: they turn AI from “research projects” into product operations. If your company is experimenting with agentic AI, this matters even more—agents create more runs, more prompts, more data drift, and more ways to fail quietly.

Data infrastructure becomes a growth lever

I used to think data infrastructure was a back-office concern. Now I see it as a growth lever. When your data is reliable and your pipelines are repeatable, you can ship improvements weekly instead of quarterly. You can also compare models fairly, because the inputs and tests are consistent.

My opinionated checklist

- Scalable data: clear ownership, SLAs, and automated quality checks

- Versioning: datasets, features, prompts, and model configs tracked like code

- Eval harnesses: offline tests + online metrics; regression tests on every model update

- Budget visibility: cost per training run, per evaluation, and per 1,000 predictions
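Two items on that checklist reduce to arithmetic, so here is a minimal sketch of both: cost per 1,000 predictions and a regression gate between a baseline and a candidate model. The function names, the 0.01 tolerance, and all the numbers are made up for illustration, and the gate assumes higher is better for every metric.

```python
from __future__ import annotations


def cost_per_1k_predictions(total_cost: float, n_predictions: int) -> float:
    """Spend divided by prediction volume, scaled to 1,000 predictions."""
    if n_predictions <= 0:
        raise ValueError("n_predictions must be positive")
    return total_cost / n_predictions * 1000


def regressions(
    baseline: dict[str, float],
    candidate: dict[str, float],
    tolerance: float = 0.01,
) -> list[str]:
    """Metrics where the candidate is worse than baseline by more than tolerance.

    Assumes higher is better; a metric missing from the candidate counts
    as a regression.
    """
    return [
        name
        for name, base in baseline.items()
        if candidate.get(name, float("-inf")) < base - tolerance
    ]


# Illustrative numbers only.
unit_cost = cost_per_1k_predictions(total_cost=42.0, n_predictions=350_000)
baseline = {"accuracy": 0.91, "recall": 0.80}
candidate = {"accuracy": 0.92, "recall": 0.76}

print(round(unit_cost, 4))
print(regressions(baseline, candidate))
```

In this toy run the candidate improves accuracy but drops recall by more than the tolerance, which is exactly the kind of trade a consistent harness surfaces before deployment rather than after.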

Mini-tangent: I used to overrate model choice; now I overrate repeatable pipelines.

In the “AI bubble” moments, it’s tempting to chase the newest model. But in real teams, the winners are the ones who can rebuild, retest, and redeploy safely—again and again.

3) Agentic AI: overhyped, yes—still worth designing for

When I say agentic AI to a coworker, I keep it simple: it’s AI that can take actions, not just generate text. A chatbot answers questions. An agent can open a ticket, run a query, update a dashboard, or kick off a pipeline, usually by calling tools and APIs. That “do things” part is why agentic AI stands out among the data science trends for 2025–2026, and also why it’s getting overhyped.

Why it feels overhyped right now

A lot of demos look magical because the hard parts are hidden: permissions, edge cases, and what happens when the agent is wrong. In real teams, agents can be brittle. They may misunderstand context, pick the wrong tool, or act confidently with incomplete data. The risk isn’t just bad text—it’s a bad action.

Where it actually helps is narrower and more practical: repetitive workflows with clear rules, good logs, and safe rollback. Think: incident triage, data catalog updates, basic QA checks, or drafting a runbook step-by-step for a human to follow.

A guarded workflow pattern I trust

I design for agents the same way I design for production data systems: assume failure, add controls, and log everything. The pattern I like is:

- Agent proposes (plan + evidence + expected impact)

- Human approves (or edits the plan)

- System executes (with least-privilege permissions)

- Logs everything (inputs, actions, outputs, timestamps)

I often require the agent to show its plan in a structured way, like:

{"goal":"fix freshness alert","actions":["check upstream job","sample rows","open incident"],"risk":"medium"}
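Here is a rough sketch of how the propose, approve, execute, log loop could wrap a plan like the one above. Everything beyond the three plan keys is my assumption: the allow-list, the function names, and the in-memory audit log are hypothetical, and the "execute" step only records the action instead of calling a real tool.

```python
from __future__ import annotations

import json
from datetime import datetime, timezone

# Hypothetical allow-list: the only actions this agent may run (least privilege).
ALLOWED_ACTIONS = {"check upstream job", "sample rows", "open incident"}
REQUIRED_KEYS = {"goal", "actions", "risk"}

audit_log: list[dict] = []


def log_event(event: str, detail: dict) -> None:
    """Append a timestamped record; a real system would use durable storage."""
    audit_log.append(
        {"ts": datetime.now(timezone.utc).isoformat(), "event": event, **detail}
    )


def validate_plan(raw: str) -> dict:
    """Parse the agent's JSON plan and reject anything outside the allow-list."""
    plan = json.loads(raw)
    missing = REQUIRED_KEYS - set(plan)
    if missing:
        raise ValueError(f"plan is missing keys: {sorted(missing)}")
    blocked = [a for a in plan["actions"] if a not in ALLOWED_ACTIONS]
    if blocked:
        raise PermissionError(f"actions outside the allow-list: {blocked}")
    return plan


def run_with_approval(raw: str, approved_by: str | None) -> list[str]:
    """Execute the plan only if a human approved it; log every step."""
    plan = validate_plan(raw)
    log_event("proposed", {"plan": plan})
    if approved_by is None:
        log_event("rejected", {"goal": plan["goal"]})
        return []
    log_event("approved", {"by": approved_by})
    executed = []
    for action in plan["actions"]:
        # Placeholder for a real tool call; this sketch only records the action.
        log_event("executed", {"action": action})
        executed.append(action)
    return executed


raw_plan = (
    '{"goal":"fix freshness alert",'
    '"actions":["check upstream job","sample rows","open incident"],'
    '"risk":"medium"}'
)
executed = run_with_approval(raw_plan, approved_by="oncall@example.com")
print(executed)
print([entry["event"] for entry in audit_log])
```

The design choice I care about is that validation happens before the human ever sees the plan, so the approver is only reviewing actions the system could legally run.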

Hypothetical: 2 a.m. data quality triage

Imagine it’s 2 a.m. and a freshness alert fires for a key table. Instead of waking me up, an agent:

- Checks the last successful pipeline run and upstream dependencies

- Runs a small set of safe validation queries

- Collects evidence (logs, row counts, anomaly scores)

- Drafts a ticket with likely root cause and suggested next steps

If the fix is low-risk (like rerunning a failed job), the agent proposes the rerun for approval rather than executing it on its own.

What I’d measure

- Error rate: how often the agent’s diagnosis or action is wrong

- Time-to-resolution: from alert to stable data again

- “Oops” moments caught by approvals: near-misses prevented by the human gate
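All three measures fall out of a simple incident log. The sketch below assumes a hypothetical record shape (alert time, resolution time, whether the agent was right, whether a human edited or rejected the plan) and uses invented incidents; I count a "caught" moment as a wrong plan that a human changed.

```python
from datetime import datetime
from statistics import median

# Invented incident records; the field names are assumptions for illustration.
incidents = [
    {"alert": datetime(2025, 1, 1, 2, 0), "resolved": datetime(2025, 1, 1, 2, 30),
     "agent_correct": True, "human_edited": False},
    {"alert": datetime(2025, 1, 1, 3, 0), "resolved": datetime(2025, 1, 1, 4, 30),
     "agent_correct": False, "human_edited": True},
    {"alert": datetime(2025, 1, 1, 10, 0), "resolved": datetime(2025, 1, 1, 10, 20),
     "agent_correct": True, "human_edited": False},
    {"alert": datetime(2025, 1, 1, 14, 0), "resolved": datetime(2025, 1, 1, 15, 0),
     "agent_correct": False, "human_edited": False},
]

# Error rate: share of incidents where the agent's diagnosis or action was wrong.
error_rate = sum(not i["agent_correct"] for i in incidents) / len(incidents)

# Time-to-resolution in minutes, summarised with the median to resist outliers.
ttr_minutes = [(i["resolved"] - i["alert"]).total_seconds() / 60 for i in incidents]
median_ttr_minutes = median(ttr_minutes)

# Near-misses: the agent was wrong and the human gate changed the plan.
caught_by_approval = sum(
    (not i["agent_correct"]) and i["human_edited"] for i in incidents
)

print(error_rate, median_ttr_minutes, caught_by_approval)
```

The gap between wrong plans and caught plans is the number I would watch most closely, because it counts errors that slipped past the approval step.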

4) Vector Databases + AI-Integrated tools: the stack is quietly changing

In 2025–2026, I’m seeing vector databases move from “nice-to-have” to everyday data science infrastructure. Not because they’re trendy, but because so many common tasks now start with search and retrieval. When we embed text, images, or user behavior into vectors, we can do similarity search that feels natural for modern products: semantic search in docs, “people also viewed” recommendations, support ticket routing, and RAG (retrieval-augmented generation) for internal copilots.

Why vector databases keep showing up in real projects

Classic databases are great when you know the exact key or filter. Vector databases shine when you want “things like this.” That’s why they show up in:

- Search: semantic search over knowledge bases, policies, and product catalogs

- Retrieval: fetching the most relevant chunks for LLM prompts (RAG)

- Recommendations: matching users to items based on similarity, not just rules

Readers keep asking me about Pinecone because it’s a recognizable example with a clear “store embeddings + query by similarity” story. Whether you use Pinecone or another option, the pattern is the same: embeddings in, nearest neighbors out, with metadata filters to keep results grounded.
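To make "embeddings in, nearest neighbors out, with metadata filters" concrete, here is a brute-force sketch in plain Python. It is not Pinecone's API or how any vector database works internally (real systems use approximate indexes); the three-dimensional vectors are hand-written stand-ins for real embeddings, and `search` is a name I made up.

```python
from __future__ import annotations

import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(
    query: list[float],
    records: list[dict],
    top_k: int = 2,
    where: dict | None = None,
) -> list[str]:
    """Filter by exact-match metadata first, then rank by similarity."""
    where = where or {}
    candidates = [
        r for r in records
        if all(r["meta"].get(k) == v for k, v in where.items())
    ]
    ranked = sorted(candidates, key=lambda r: cosine(query, r["vector"]), reverse=True)
    return [r["id"] for r in ranked[:top_k]]


# Toy "embeddings": hand-written vectors, not output from a real model.
records = [
    {"id": "refund-policy", "vector": [0.9, 0.1, 0.0], "meta": {"team": "finance"}},
    {"id": "refund-faq", "vector": [0.8, 0.2, 0.1], "meta": {"team": "support"}},
    {"id": "onboarding", "vector": [0.0, 0.1, 0.9], "meta": {"team": "support"}},
    {"id": "billing-howto", "vector": [0.7, 0.3, 0.0], "meta": {"team": "support"}},
]
query = [1.0, 0.0, 0.0]

print(search(query, records, top_k=2, where={"team": "support"}))
print(search(query, records, top_k=1))
```

Notice that the filter changes the answer: without it, the closest document belongs to another team. That is what I mean by metadata keeping results grounded.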

Small confession: I used to treat the database choice as “ops stuff.” That was naive.

Once you build AI features, the database becomes part of the model behavior. Indexing strategy, chunking, metadata design, and freshness all change what users see. That’s not just ops—it’s product quality.

AI-integrated workflows are replacing parts of the old toolchain

I still love pandas—this is not a breakup. But I’m using it differently. More workflows now start with an AI layer that can:

- generate SQL, validate joins, and explain metrics

- auto-document datasets and lineage

- suggest features, segments, and anomaly checks

Instead of doing everything in one notebook, I’m seeing teams move toward collaborative analytics: fewer notebooks-in-silos, more shared semantic layers and agreed metrics. The goal is simple: when two people ask “What is revenue?” they should get the same definition, not two competing notebook cells.

In practice, the “quiet stack change” is this: vectors + retrieval + shared metrics are becoming the default foundation for data science work that touches AI.

5) Low Code, Citizen Data, and the awkward new team dynamics

In the 2025–2026 data science trends I’m watching, low-code analytics and ML tools are not “dumbing things down.” They’re shifting the bottleneck. The hard part is less about writing code and more about governance, shared definitions, and who gets to publish “official” numbers.

Low code moves the bottleneck to definitions and governance

When anyone can drag-and-drop a model or dashboard, the real risk becomes inconsistency: different teams using different filters, time windows, or customer definitions. The tool is fast; the organization is not. I see this as a healthy pressure: it forces teams to agree on metrics, ownership, and data lineage.

The finding I can’t ignore: citizen data scientists up 200%

One stat from the source material stuck with me: citizen data scientists are up by 200% with low-code platforms. That growth is not a side story—it changes team dynamics. Analysts, ops leads, and marketers can now build assets that look “production-ready,” even when the logic is fragile.

How I’d prevent chaos: templates, guardrails, and a data product mindset

If low code is the new normal, I’d treat internal dashboards and models like data products: versioned, documented, and owned. Practically, I’d put a few guardrails in place:

- Metric templates (standard definitions for revenue, churn, CAC, activation)

- Certified datasets with clear owners and refresh rules

- Permission tiers (explore vs. publish)

- Lineage requirements: every dashboard must show sources and transformations

- Review checkpoints for anything used in finance, forecasting, or exec reporting
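Most of these guardrails can be expressed as a pre-publish check. The sketch below is one hypothetical way to do it; the `Dashboard` fields and the blocker messages are my own invention, not the behaviour of any particular BI tool.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Dashboard:
    name: str
    owner: str
    sources: list[str]           # lineage: upstream tables and transformations
    uses_certified_data: bool    # built only on certified datasets?
    feeds_exec_reporting: bool   # used in finance, forecasting, or exec reports?
    reviewed: bool               # has it passed a review checkpoint?


def publish_blockers(d: Dashboard) -> list[str]:
    """Return the reasons this dashboard may not move from explore to publish."""
    blockers = []
    if not d.owner.strip():
        blockers.append("no owner")
    if not d.sources:
        blockers.append("no lineage")
    if not d.uses_certified_data:
        blockers.append("uncertified dataset")
    if d.feeds_exec_reporting and not d.reviewed:
        blockers.append("needs review")
    return blockers


# Illustrative example only.
dash = Dashboard(
    name="Pipeline influenced",
    owner="marketing-analytics",
    sources=["crm.opportunities"],
    uses_certified_data=False,
    feeds_exec_reporting=True,
    reviewed=False,
)
print(publish_blockers(dash))
```

Anyone can still explore freely. The check only applies at the moment something is about to become an "official" number.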

A real-world-ish vignette: the dashboard dispute

I’ve seen a familiar pattern: marketing ships a sleek dashboard in a low-code BI tool showing “pipeline influenced.” Finance pushes back because the number doesn’t match booked revenue. Then we discover the dashboard used a different attribution window and excluded refunds. Suddenly everyone cares about lineage and definitions.

Low code makes it easy to build. It also makes it easy to publish the wrong truth.

Where Data Science stays essential

Even with low-code platforms, data science remains critical for:

- Experiments (power, randomization, and interpretation)

- Causal thinking (what caused the change vs. what correlated)

- Model evaluation discipline (leakage checks, baselines, drift monitoring)

6) Chief Data leadership + Real-time Personalisation: where org charts meet revenue

Why Chief Data Officer success surprises me (in a good way)

In the source material on Data Science Trends 2025–2026, the improving success rate of the Chief Data Officer (CDO) stands out to me. For years, I saw CDO roles fail because they were “advice-only” jobs with no budget, no authority, and no clear tie to outcomes. What’s changing is that more companies now treat data as a product and a platform, not just reporting. Going into 2026, I expect org design to reflect that: the CDO (or equivalent) needs ownership of data standards, shared tooling, and the operating model that makes AI and analytics repeatable across teams.

Real-time Personalisation is a systems problem, not a campaign

Real-time personalisation is often sold like a marketing feature, but I see it as an end-to-end systems challenge. If data is stale, the “personalised” experience becomes wrong fast. If identity is messy, you personalise to the wrong person. If consent is unclear, you create risk. So I think the real work is in data freshness (streaming and low-latency pipelines), identity resolution (linking devices, accounts, and sessions), and consent (capturing preferences and enforcing them everywhere). This is where leadership matters: someone has to make these rules consistent across product, marketing, and engineering.
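One way I picture the systems side is as a gate that runs before any personalised content is served. This is a sketch under loud assumptions: the profile fields, the five-minute freshness budget, and the order of the checks are all hypothetical.

```python
from __future__ import annotations

from datetime import datetime, timedelta, timezone

MAX_PROFILE_AGE = timedelta(minutes=5)  # hypothetical freshness budget


def can_personalise(profile: dict, now: datetime) -> tuple[bool, str]:
    """Check consent, identity, and freshness, in that order."""
    if not profile.get("consent_personalisation", False):
        return False, "no consent"
    if profile.get("user_id") is None:
        return False, "unresolved identity"
    if now - profile["updated_at"] > MAX_PROFILE_AGE:
        return False, "stale profile"
    return True, "ok"


now = datetime(2026, 1, 15, 12, 0, tzinfo=timezone.utc)
fresh = {"user_id": "u1", "consent_personalisation": True,
         "updated_at": now - timedelta(minutes=2)}
stale = {"user_id": "u2", "consent_personalisation": True,
         "updated_at": now - timedelta(minutes=30)}
opted_out = {"user_id": "u3", "consent_personalisation": False,
             "updated_at": now}

for profile in (fresh, stale, opted_out):
    print(profile["user_id"], can_personalise(profile, now))
```

Consent comes first on purpose: if the user has opted out, the other checks should not matter.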

Real-time insights and the “40% more revenue” claim

The source mentions a claim that real-time insights can drive up to 40% more revenue. I don’t take that number as universal, but I do take it seriously enough to validate internally. If I were checking this in my own company, I’d look for clean experiments: holdout groups, clear attribution windows, and a split between short-term lift (conversion) and long-term value (retention, churn, margin). I’d also verify costs, because real-time systems can be expensive if you scale them without guardrails.
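For the short-term part of that check, the arithmetic is simple enough to sketch. The numbers below are invented, and the two-proportion z-test is just one common way to sanity-check a lift; I am not claiming it is the right test for every setup.

```python
import math


def conversion_rate(conversions: int, users: int) -> float:
    return conversions / users


def relative_lift(treatment_rate: float, holdout_rate: float) -> float:
    """Lift of treatment over holdout, as a fraction of the holdout rate."""
    return (treatment_rate - holdout_rate) / holdout_rate


def two_proportion_z(c_t: int, n_t: int, c_h: int, n_h: int) -> float:
    """z-statistic for the difference in conversion rates (pooled variance)."""
    pooled = (c_t + c_h) / (n_t + n_h)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_t + 1 / n_h))
    return (c_t / n_t - c_h / n_h) / se


# Invented experiment: real-time personalisation vs. a holdout group.
treated_users, treated_conversions = 10_000, 540
holdout_users, holdout_conversions = 10_000, 500

lift = relative_lift(
    conversion_rate(treated_conversions, treated_users),
    conversion_rate(holdout_conversions, holdout_users),
)
z = two_proportion_z(treated_conversions, treated_users,
                     holdout_conversions, holdout_users)

print(f"relative lift: {lift:.1%}, z = {z:.2f}")
```

In this made-up example the lift looks healthy on a slide, yet the z-statistic stays below the usual 1.96 threshold, so I would not treat it as proven. That is the gap between a headline number and a validated one.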

Data monetisation: turning datasets into products with SLAs

Another shift I’m watching is data monetisation as a real business model. The difference between “sharing data” and “selling a data product” is discipline: defined schemas, documentation, access controls, and SLAs for uptime, latency, and quality. When datasets become products, teams stop treating data like a side effect and start treating it like revenue infrastructure.

My closing view for 2025–2026

My conclusion is simple: strategy is just choosing what to standardize. In 2026, the winners will be the teams that standardize identity, consent, and data quality—so personalisation, agentic AI, and monetised data products can scale without breaking trust or budgets.

TL;DR: My 2025–2026 take: the AI bubble cools, AI factories industrialize model building, agentic AI moves from demos to guarded workflows, vector databases and AI-integrated tools reshape the stack, low-code expands citizen data work, and leadership/infrastructure choices decide who actually ships.
