Finance Teams’ Playbook for AI Finance Tools

Last quarter, I watched a perfectly good forecast get derailed by something embarrassingly small: a copy‑pasted Excel column that shifted one row. We didn’t “need more effort”—we needed fewer fragile handoffs. That’s the moment I stopped treating AI in finance like a shiny add‑on and started treating it like strategy: pick the right workflows, wire the data, demand audit trails, and measure time savings like you mean it. This guide is the playbook I wish I’d had before my first AI pilot—equal parts practical, skeptical, and quietly optimistic.

1) The “Why Now” for AI Finance Tools (and my one rule)

Finance teams are adopting AI finance tools now because the pressure is real: faster closes, tighter cash, more audits, and less tolerance for manual rework. In The Complete Finance AI Strategy Guide, the message is clear—AI works best when it is applied to a stable process, not used as a bandage for messy operations.

My one rule: don’t automate chaos—stabilize the workflow first, then apply machine learning.

If your inputs are inconsistent, your chart of accounts is drifting, or approvals happen in Slack “sometimes,” AI will only help you make mistakes faster. I start by locking the basics: clean data, clear owners, and a repeatable monthly rhythm.

Where AI finance tools actually win

- Forecasting: better drivers, faster scenario updates, fewer spreadsheet versions

- Close: anomaly flags, automated reconciliations, and task routing to reduce bottlenecks

- Receivables: collections prioritization, dispute triage, and cash application support

- Underwriting: document extraction and consistency checks across packages

- Compliance checks: policy testing, audit trails, and exception reporting

Why finance is “allergic” to black boxes (and why that’s healthy)

Finance has to explain numbers. If a model can’t show why it made a recommendation, it creates risk: audit risk, regulatory risk, and leadership trust risk. I treat explainability as a requirement, not a nice-to-have.

A simple maturity ladder

- Spreadsheet heroics (fast, fragile, person-dependent)

- Workflow automation (standard steps, controls, handoffs)

- Continuous accounting (always-on reconciliations and near-real-time reporting)

My wild-card analogy: AI is a sous-chef, not the restaurant. It still needs a menu (definitions), prep (clean data), and hygiene (controls and monitoring).

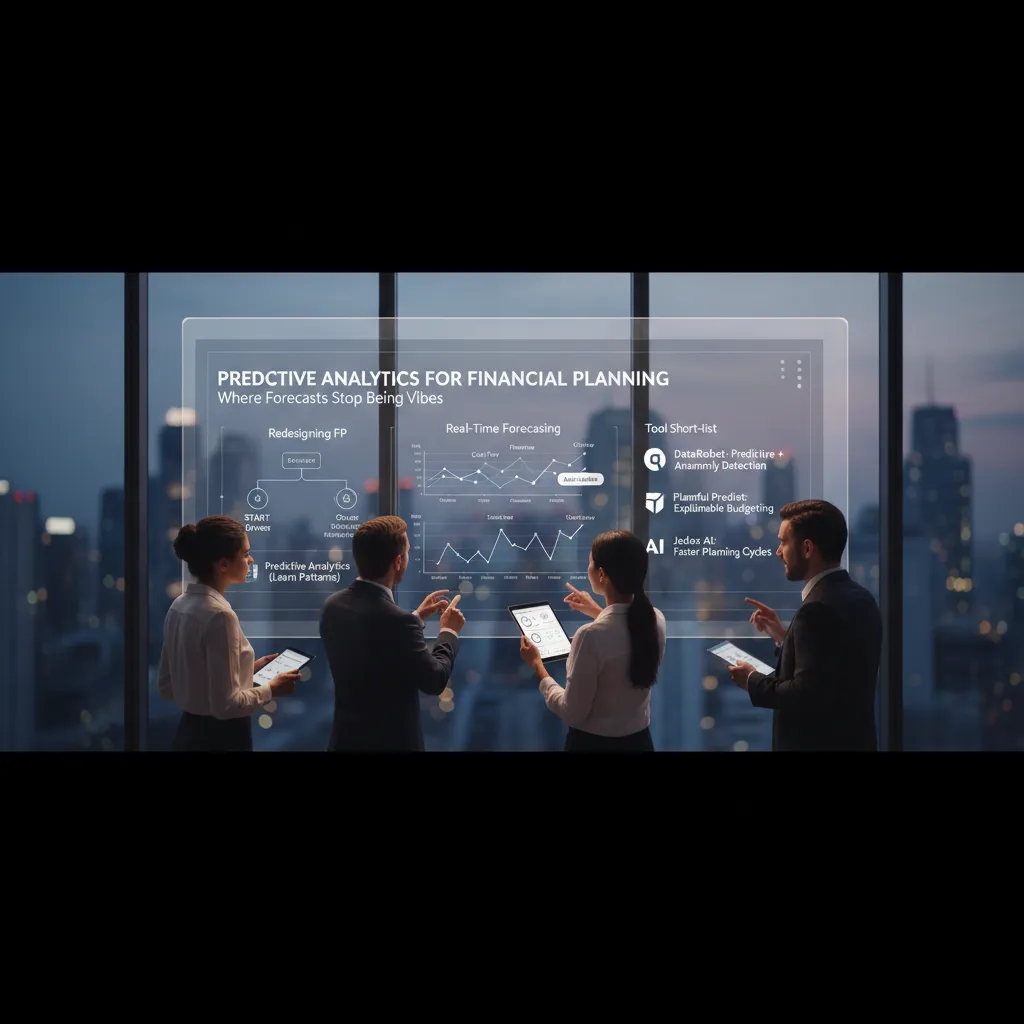

2) Predictive Analytics for Financial Planning: where forecasts stop being vibes

How I’d redesign planning: drivers first, models second

When I follow The Complete Finance AI Strategy Guide, the big shift is simple: I stop “forecasting by feel” and start with clear business drivers. Price, volume, pipeline stages, renewal dates, payment terms, headcount, and seasonality come first. Then I let predictive analytics learn patterns from history. I don’t ask AI to invent a new business model; I ask it to measure what already moves the business.

Real-time forecasting that doesn’t break the model

In practice, AI finance tools help me keep forecasts live without rebuilding spreadsheets every week. I focus on four streams:

- Cash flow forecasting: collections timing, vendor runs, and working capital swings.

- Revenue forecasting: pipeline conversion, deal cycles, and product mix.

- Churn prediction: renewal risk signals and usage trends tied to ARR.

- Scenario planning: “what if” changes that update assumptions while keeping the same driver logic.
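To make "same driver logic, different assumptions" concrete, here is a minimal Python sketch. The `Drivers` fields, the numbers, and the roll-forward formula are illustrative assumptions of mine, not something taken from a specific planning tool.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Drivers:
    """Hypothetical monthly drivers for a simple subscription business."""
    price: float        # average revenue per unit
    volume: int         # active units at the start of month one
    churn_rate: float   # fraction of units lost each month
    new_units: int      # units added each month


def forecast_revenue(d: Drivers, months: int) -> list[float]:
    """Project monthly revenue by rolling the same driver logic forward."""
    revenue = []
    units = float(d.volume)
    for _ in range(months):
        revenue.append(round(units * d.price, 2))
        units = units * (1 - d.churn_rate) + d.new_units
    return revenue


base = Drivers(price=100.0, volume=1000, churn_rate=0.02, new_units=30)
# A "what if" swaps assumptions only; the forecast function is untouched.
high_churn = replace(base, churn_rate=0.05)

base_case = forecast_revenue(base, 3)
downside = forecast_revenue(high_churn, 3)
```

The point of the design is that a scenario is just a new set of inputs. If a scenario needs its own formula, I'd treat that as a sign the driver model is incomplete.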

Tool short-list (and what I’d use each for)

- DataRobot: predictive analytics + anomaly detection for revenue, cash, and outlier alerts.

- Planful Predict: explainable budgeting and planning outputs I can defend in reviews.

- Jedox AI: faster planning cycles and driver-based models that business partners can edit.

Key features I won’t compromise on

- Explainable outputs (drivers, sensitivity, and “why” behind the number).

- Versioning (who changed what, when, and which dataset trained it).

- Human handoff (a clear step where Finance approves, adjusts, and documents judgment).

The first time a model contradicted my gut, I didn’t argue—I tested.

It flagged a cash dip I “knew” wouldn’t happen. We traced it to slower collections in one segment, validated it against AR aging, and updated terms assumptions. The model wasn’t magic; it was a better mirror.

3) Financial Close gets weirdly calm with AI Reconciliation + Anomaly Detection

In The Complete Finance AI Strategy Guide, the close is framed as a repeatable system, not a monthly emergency. I’ve found the fastest way to get there is using AI reconciliation plus anomaly detection—two tools that quietly remove the “last-day scramble.”

Where AI reconciliation fits (and why it stops the scramble)

I use AI reconciliation to match high-volume items across bank feeds, subledgers, and the ERP. Instead of hunting line by line, the model proposes matches, groups near-matches, and flags exceptions that actually need my judgment.

- Matching transactions: invoices, payments, deposits, intercompany

- Flagging exceptions: missing references, partial payments, odd splits

- Faster close: fewer “mystery items” left for day 5
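For intuition, here is a deliberately simple, rule-based version of the matching step in Python. Real tools use learned or fuzzy matching; this sketch only assumes records with an `id`, an `amount` in cents, and a `date`, and the three-day tolerance is a placeholder.

```python
from datetime import date


def match_transactions(bank, ledger, max_days=3):
    """Greedy one-to-one matching on equal amount and nearby date.

    Returns (matches, unmatched_bank_ids, unmatched_ledger_ids).
    """
    matches = []
    remaining = list(ledger)
    unmatched_bank = []
    for b in bank:
        candidates = [
            entry for entry in remaining
            if entry["amount"] == b["amount"]
            and abs((entry["date"] - b["date"]).days) <= max_days
        ]
        if candidates:
            # Prefer the ledger entry closest in date to the bank line.
            best = min(candidates, key=lambda e: abs((e["date"] - b["date"]).days))
            matches.append((b["id"], best["id"]))
            remaining.remove(best)
        else:
            unmatched_bank.append(b["id"])
    return matches, unmatched_bank, [entry["id"] for entry in remaining]


bank = [
    {"id": "B1", "amount": 125000, "date": date(2024, 3, 1)},
    {"id": "B2", "amount": 4999, "date": date(2024, 3, 4)},
]
ledger = [
    {"id": "L1", "amount": 125000, "date": date(2024, 3, 2)},
    {"id": "L2", "amount": 4999, "date": date(2024, 3, 20)},
]
matches, open_bank, open_ledger = match_transactions(bank, ledger)
```

Here `B1` pairs with `L1`, while `B2` and `L2` fall outside the date window and land in the exception lists, which is the pile that actually needs a human.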

Anomaly detection: my favorite quiet hero

Anomaly detection is the early-warning system I wish I had years ago. It catches issues before they become audit pain: duplicates, odd vendors, and timing glitches that create fake variances.

- Duplicate invoices or repeated reimbursements

- New or unusual vendors (or vendor/bank detail changes)

- Posting timing issues (cutoff errors, late accruals)
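The duplicate-invoice check is the easiest of these to picture. A minimal Python sketch, assuming each invoice has `vendor`, `invoice_no`, and `amount_cents` fields (hypothetical names) and that a duplicate means the same vendor, invoice number, and amount after light normalization:

```python
from collections import defaultdict


def normalize_invoice_no(raw: str) -> str:
    """Lowercase and drop punctuation/spaces so 'INV-0042' equals 'inv 0042'."""
    return "".join(ch for ch in raw.lower() if ch.isalnum())


def find_duplicate_invoices(invoices):
    """Group invoices by (vendor, invoice number, amount) and return repeated groups."""
    groups = defaultdict(list)
    for inv in invoices:
        key = (
            inv["vendor"].strip().lower(),
            normalize_invoice_no(inv["invoice_no"]),
            inv["amount_cents"],
        )
        groups[key].append(inv["id"])
    return [ids for ids in groups.values() if len(ids) > 1]


invoices = [
    {"id": 1, "vendor": "Acme Ltd", "invoice_no": "INV-0042", "amount_cents": 150000},
    {"id": 2, "vendor": "acme ltd ", "invoice_no": "inv 0042", "amount_cents": 150000},
    {"id": 3, "vendor": "Globex", "invoice_no": "G-77", "amount_cents": 9900},
]
duplicates = find_duplicate_invoices(invoices)
```

An exact-key rule like this misses near-duplicates (a changed amount, a retyped vendor name), which is where statistical anomaly detection earns its keep.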

Microsoft Copilot + Excel: automation without ripping out spreadsheets

I don’t need to replace Excel overnight. With Microsoft Copilot, I can automate data entry cleanups, generate variance commentary drafts, and standardize close notes.

“Draft the variance explanation for Marketing spend vs budget using this table.”

StackAI: no-code customization + integrations that feel normal

StackAI-style workflows let me build no-code checks and approvals that connect to Excel and Salesforce, so the close doesn’t turn into a science fair.

Key features checklist

- ERP integration (GL, AP/AR, bank feeds)

- Auditability & compliance (controls, role-based access)

- Traceable trail of matches, exceptions, and approvals I can hand to auditors

4) Credit Risk + Loan Origination: the part where regulators enter the chat

Credit risk modeling basics (plain English)

At its core, credit risk modeling is me estimating two things: how likely a borrower is to miss payments, and what that loss could cost. Traditional underwriting leans on a few inputs (credit score, income, debt-to-income) and fixed rules. When machine learning joins underwriting, the big change is scale: the model can weigh many signals at once and spot patterns humans miss. The trade-off is that decisions can become harder to explain, which is exactly where regulators focus.

Zest AI: automation with bias controls

Following the mindset of The Complete Finance AI Strategy Guide, I treat tools like Zest AI as a way to automate underwriting while actively testing for fair lending risk. The value isn’t just speed; it’s the ability to monitor outcomes, reduce bias, and support regulatory compliance with structured model documentation and repeatable validation.

Upstart: beyond traditional credit scores

Upstart pushes loan origination past classic credit scores by using additional data to assess “thin-file” borrowers. That can increase approvals, but it also changes portfolio risk: I may see different default patterns, faster shifts in performance, and a stronger need for ongoing monitoring (drift, vintage analysis, and policy overrides).

My governance stance

- Explainability isn’t optional; I require reason codes and clear feature impact summaries.

- I build audit trails from day one: data sources, model version, approvals, and overrides.

- I document fairness tests and thresholds before launch, not after a complaint.
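As one example of a fairness test I might write down before launch, here is a sketch of the adverse impact ratio: the approval rate of one group divided by the approval rate of a reference group. The 0.80 cutoff echoes the widely cited four-fifths rule of thumb, but I'm using it here purely as a placeholder; which tests and thresholds apply is a compliance and legal decision, not something this snippet settles.

```python
def approval_rate(approved: int, total: int) -> float:
    """Share of applications approved in a group."""
    if total <= 0:
        raise ValueError("total must be positive")
    return approved / total


def adverse_impact_ratio(group_rate: float, reference_rate: float) -> float:
    """Ratio of a group's approval rate to the reference group's rate."""
    if reference_rate <= 0:
        raise ValueError("reference_rate must be positive")
    return group_rate / reference_rate


# Placeholder threshold, agreed and documented before launch.
REVIEW_THRESHOLD = 0.80

# Made-up counts for illustration only.
air = adverse_impact_ratio(approval_rate(40, 100), approval_rate(60, 100))
needs_review = air < REVIEW_THRESHOLD
```

With these made-up counts the ratio is about 0.67, so the segment would be flagged for review rather than silently shipped.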

Borderline applicant: what I’d document

Say an applicant sits near the cutoff. A rules model might decline due to a low score. An ML model might approve because stable cash flow and low utilization predict lower risk. I’d document:

- Decision + top drivers (reason codes)

- Model version + training window

- Fair lending checks for the segment

- Any human override and why
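Those four items map naturally onto a structured record. A minimal sketch in Python; the field names and example values are hypothetical, and a real system would write this to an append-only store rather than a string:

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class DecisionRecord:
    application_id: str
    decision: str                      # "approve" or "decline"
    reason_codes: list[str]            # top drivers behind the decision
    model_version: str
    training_window: str
    fair_lending_check: str            # summary or reference to the test result
    override_by: Optional[str] = None
    override_reason: Optional[str] = None

    def validate(self) -> None:
        """Reject records that would be hard to defend in an audit."""
        if not self.reason_codes:
            raise ValueError("At least one reason code is required.")
        if self.override_by and not self.override_reason:
            raise ValueError("An override must include a documented reason.")


record = DecisionRecord(
    application_id="APP-EXAMPLE-001",
    decision="approve",
    reason_codes=["stable_cash_flow", "low_utilization"],
    model_version="uw-model-example-1",
    training_window="2021-01 to 2023-12",
    fair_lending_check="segment ratio reviewed, no flag",
)
record.validate()
line = json.dumps(asdict(record), sort_keys=True)
```

The `validate` step is the part I care about most: an override without a written reason should fail loudly at entry time, not surface months later in a review.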

5) Spend Management + Autonomous Receivables: cash flow’s underrated power-ups

Spend management isn’t glamorous, but it’s where I’ve seen the fastest time savings with AI finance tools. When I set up spend auditing rules plus anomaly detection, the system catches duplicate invoices, odd price jumps, and out-of-policy purchases before they hit the close. That means fewer fire drills, fewer emails, and cleaner accruals.

Spend controls that actually move the needle

I look for tools that turn daily transactions into continuous accounting signals. Instead of waiting for month-end, I want alerts in-week: “this vendor is trending 30% above baseline” or “this category is mis-coded.” Those small flags protect cash flow and reduce rework.
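A "30% above baseline" alert can start as a few lines of Python before any model is involved. In this sketch the baseline is a plain average of past weekly spend, and the vendor names and amounts are made up; a production tool would likely account for seasonality and volume.

```python
from statistics import mean


def flag_spend_spikes(history, current, threshold=0.30):
    """Compare this week's spend per vendor to its historical average.

    history: {vendor: [past weekly spend]}, current: {vendor: this week's spend}.
    Returns {vendor: message} for vendors that need a look.
    """
    flags = {}
    for vendor, spend in current.items():
        past = history.get(vendor)
        if not past:
            flags[vendor] = "no baseline (new vendor)"
            continue
        baseline = mean(past)
        if baseline > 0:
            pct = (spend - baseline) / baseline
            if pct > threshold:
                flags[vendor] = f"{pct:.0%} above baseline"
    return flags


history = {"Acme": [1000, 1100, 900], "Globex": [500, 500]}
current = {"Acme": 1400, "Globex": 520, "NewCo": 250}
flags = flag_spend_spikes(history, current)
```

Note that the new vendor is flagged too. With no history there is no baseline, and "unknown" is a signal in its own right.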

Autonomous receivables: the “where did the cash go?” toolkit

On the receivables side, platforms like HighRadius are built for order-to-cash (O2C) automation: cash application, collections workflows, dispute tracking, and AI-driven forecasting. I’ve found the real win is treasury optimization—knowing what will land, when it will land, and what is at risk—so I’m not guessing during liquidity planning.

Treasury tie-in: visibility beats heroics

Better visibility beats heroic end-of-month borrowing decisions. When receivables forecasts and spend signals are connected, I can plan funding earlier, reduce idle cash, and avoid last-minute credit line pulls.

Reality check: fix the plumbing first

Bad vendor data will humble any model. Before I trust predictions, I tighten the basics: vendor master hygiene, clean categories, and PO discipline.

- Vendor normalization (one vendor, one name, one payment profile)

- Category rules that prevent “misc” from becoming a dumping ground

- PO compliance so approvals and match rates stay high

Key features I demand

- Workflow automation for approvals, exceptions, and collections plays

- Integrations that don’t require a 9-month IT project (ERP, bank, CRM)

- Audit-ready logs so every AI suggestion is traceable

6) Investment Strategy & Portfolio Management: don’t let the model drive the car alone

In The Complete Finance AI Strategy Guide, the message is clear: AI can improve speed and coverage, but it cannot replace accountability. Tools like Kavout can help with stock ranking, backtesting investment strategies, and real-time market alerts. I treat that as a strong assistant, not the decision-maker. Guardrails come first.

How I use AI in investment strategy: signals + constraints + thesis

My workflow starts with signals (what the model thinks matters), then constraints (what we will not do), and finally a written thesis that can survive volatility. If I can’t explain the thesis in plain language, I don’t want it in the portfolio.

- Signals: rankings, factor scores, anomaly flags, sentiment shifts.

- Constraints: position limits, sector caps, liquidity rules, drawdown limits.

- Thesis: why this should work, when it should fail, and what we monitor.
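Constraints are the easiest of the three to encode, which is one reason I put them in writing. A minimal Python sketch of position and sector caps; the tickers, weights, and limits are hypothetical placeholders:

```python
def check_constraints(weights, sectors, max_position=0.10, max_sector=0.30):
    """Return a list of human-readable breaches for a proposed portfolio.

    weights: {ticker: portfolio weight}, sectors: {ticker: sector name}.
    """
    breaches = []
    sector_totals = {}
    for ticker, w in weights.items():
        if w > max_position:
            breaches.append(f"{ticker}: position {w:.0%} exceeds {max_position:.0%}")
        sector = sectors[ticker]
        sector_totals[sector] = sector_totals.get(sector, 0.0) + w
    for sector, total in sector_totals.items():
        # Small tolerance so float rounding does not trigger a false breach.
        if total > max_sector + 1e-9:
            breaches.append(f"{sector}: sector {total:.0%} exceeds {max_sector:.0%}")
    return breaches


weights = {"AAA": 0.12, "BBB": 0.08, "CCC": 0.15, "DDD": 0.05}
sectors = {"AAA": "tech", "BBB": "tech", "CCC": "tech", "DDD": "energy"}
breaches = check_constraints(weights, sectors)
```

A signal that produces a portfolio with breaches does not get traded as-is; it goes back for resizing or a documented exception.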

Backtesting basics (and why I don’t trust “perfect” charts)

Backtests are useful, but I treat results as a hypothesis, not proof. I watch for:

- Overfitting: too many tweaks that only fit the past.

- Survivorship bias: testing only today’s winners and ignoring delisted names.

- Hidden costs: slippage, fees, and real trading constraints.
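Hidden costs are the one item on that list I can show with arithmetic. This sketch subtracts a flat cost per unit of turnover from each period's gross return; the returns, turnover figures, and the 10 basis point cost are made-up assumptions, and real slippage is rarely this tidy.

```python
def net_returns(gross_returns, turnover, cost_per_turnover=0.001):
    """Subtract a simple trading-cost estimate from each period's gross return."""
    return [g - t * cost_per_turnover for g, t in zip(gross_returns, turnover)]


def cumulative(returns):
    """Compound a series of period returns into one total return."""
    total = 1.0
    for r in returns:
        total *= 1 + r
    return total - 1


gross = [0.02, -0.01, 0.015]
turnover = [1.0, 0.5, 1.0]   # fraction of the portfolio traded each period

net = net_returns(gross, turnover)
gross_total = cumulative(gross)
net_total = cumulative(net)
```

Even with a small cost assumption, `net_total` comes in below `gross_total`, and the gap widens with turnover. A strategy that only works at zero cost is not a strategy.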

To me, an AI signal is like a weather forecast—actionable, but not a promise.

What I’d show a skeptical CFO

I bring a simple package: a one-page model card, risk limits, and a monitoring plan.

- Model card: data sources, objective, key features, known failure modes.

- Risk limits: max exposure, stop rules, concentration thresholds.

- Monitoring: drift checks, alert review cadence, escalation owner.

7) Picking Top Financial AI Tools: my messy scoring rubric (and what I ignore)

When I pick an AI finance tool, I don’t start with demos. I start with a simple scoring rubric from The Complete Finance AI Strategy Guide: does it reduce risk and save time without breaking my controls?

My practical rubric (what I actually score)

- Key features: does it solve a real finance workflow (close, FP&A, O2C, credit, AML) end to end?

- Auditability & compliance: can I trace inputs, prompts, outputs, and approvals?

- Integrations: ERP, data warehouse, BI, ticketing, and identity (SSO) matter more than “cool AI.”

- Total cost of ownership: licenses + implementation + data work + ongoing admin.

- Time savings: I ask for a measurable before/after (hours per month, cycle time, error rate).
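The rubric turns into a weighted score with very little code. The weights and the 1-to-5 ratings below are my own illustrative assumptions; the useful part is forcing every tool through the same five questions.

```python
# Hypothetical weights; they should sum to 1.0 and reflect your own priorities.
WEIGHTS = {
    "key_features": 0.25,
    "auditability": 0.25,
    "integrations": 0.20,
    "total_cost": 0.15,
    "time_savings": 0.15,
}


def score_tool(ratings):
    """Weighted average of 1-5 ratings across the five rubric criteria."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"Missing ratings for: {sorted(missing)}")
    return round(sum(WEIGHTS[k] * ratings[k] for k in WEIGHTS), 2)


tool_a = {"key_features": 5, "auditability": 4, "integrations": 3,
          "total_cost": 3, "time_savings": 4}
tool_b = {"key_features": 3, "auditability": 5, "integrations": 4,
          "total_cost": 4, "time_savings": 3}

score_a = score_tool(tool_a)
score_b = score_tool(tool_b)
```

When two tools score this close, I don't let the decimal decide. I go back to the red flags and the audit-trail answers.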

Quick comparisons I use to shortlist

| Tool | Where it fits | Why I care |
|---|---|---|
| StackAI | No-code workflows | Fast builds + integrations |
| Planful / Jedox | FP&A | Planning + reporting structure |
| DataRobot | Predictive analytics | Model ops + monitoring |
| HighRadius | O2C / treasury | Collections, cash, automation |
| Zest AI / Upstart | Credit / origination | Decisioning + risk signals |
| IBM watsonx | Compliance docs | Governance-friendly tooling |
| SymphonyAI | AML / financial crime | Detection + case workflows |

AI agents: where I use them (and where I don’t)

I like agents for ticket triage, variance narratives, and routing tasks. I keep humans in the loop for approvals, policy exceptions, and anything that hits the general ledger.

No-code vs bespoke builds

No-code wins when speed matters and the process is stable. Bespoke builds bite back when requirements change weekly or when audit trails are an afterthought.

My red flags list

- Vendor won’t discuss data lineage

- Can’t export audit trails

- Hides model limitations (accuracy, drift, failure modes)

8) Rollout plan: from pilot to ‘we trust it’ (without a revolt)

A simple 30-60-90 day rollout

When I roll out AI finance tools, I start small on purpose. In the first 30 days, I pick one workflow (like invoice coding or cash application), one dataset (a clean slice of AP, AR, or GL history), and one metric (cycle time, accuracy, or exception rate). The goal is not “AI everywhere.” It’s a pilot we can measure and explain.

By day 60, I widen the surface area carefully: more entities, more vendors, or one more adjacent step in the process. I keep the same metric so we can see real trend lines, not noise. By day 90, I’m looking for repeatability—can we run this every close, with the same controls, and get the same quality?

Change management that respects finance reality

Adoption fails when people feel replaced or blamed. I plan training like I would for a new ERP feature: short sessions, role-based guides, and clear “what changed” notes. I also create a safe path to say “the model looks wrong” without drama—an exception form, a shared queue, and a rule that raising issues is a positive behavior.

Compliance, audit trails, and approvals

To pass audit review, I log inputs, model version, key prompts or rules, outputs, overrides, and who approved the final posting. I define approval levels up front: what the analyst can accept, what needs manager sign-off, and what must be escalated to controllership.

Operational monitoring: drift, queues, and rollback

After go-live, I monitor drift checks, exception volumes, and reconciliation breaks. If performance slips, I decide fast: retrain when data patterns changed, or roll back when the tool is unstable or controls are at risk.
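One common way to put a number on drift is the Population Stability Index (PSI), which compares how a variable was distributed at training time with how it looks now. The sketch below assumes the data is already binned into counts; the rule-of-thumb cutoffs people quote (roughly 0.1 for "watch" and 0.25 for "investigate") are conventions, not guarantees, so I'd agree on thresholds with whoever owns the model.

```python
import math


def psi(expected_counts, actual_counts, eps=1e-6):
    """Population Stability Index over matching, pre-binned counts."""
    if len(expected_counts) != len(actual_counts):
        raise ValueError("Both inputs must use the same bins.")
    e_total = sum(expected_counts)
    a_total = sum(actual_counts)
    value = 0.0
    for e, a in zip(expected_counts, actual_counts):
        # Floor the shares at eps so empty bins do not cause log(0).
        e_pct = max(e / e_total, eps)
        a_pct = max(a / a_total, eps)
        value += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return value


training_bins = [50, 30, 20]   # made-up counts per bin at training time
current_bins = [20, 30, 50]    # made-up counts per bin this month

stable = psi(training_bins, training_bins)
shifted = psi(training_bins, current_bins)
```

A high PSI tells me the inputs moved, not why. It is a prompt to investigate and then choose between retraining and rolling back, not a decision by itself.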

My closing test is simple: success looks boring—fewer surprises, faster closes, and cleaner cash flow.

TL;DR: If you want AI that helps finance teams (not just demos), start with 3 workflows: real-time forecasting, AI reconciliation for the financial close, and credit risk modeling. Choose tools that are explainable, integrated (Excel/ERP/Salesforce), and auditable. Measure impact with cycle time, forecast accuracy, and error rate—then scale via no-code automation and AI agents with compliance workflows baked in.
