AI Trends in Financial Services: A CFO’s Notes

I walked into a finance leadership roundtable expecting the usual “AI will change everything” chorus. Instead, the first thing someone said was, “My best bot is the one that never talks to my customers.” That stuck with me. In these notes, I’m riffing on that idea: what finance leaders say in interviews when the cameras are on, and what they admit during the coffee break. That covers where generative AI helps, where it creates new fraud and compliance headaches, and why “customer experience” is now a risk topic too.

1) The interview moment that changed my AI optimism

I went into “Expert Interview: Finance Leaders Discuss AI” expecting the usual mix of bold predictions and shiny demos. Instead, one comment landed like a quiet reset:

“If it doesn’t move a finance metric, it’s not an AI project; it’s a science fair.”

That line made me rethink what’s realistic versus what’s just trendy in AI for financial services.

Three themes I kept hearing (in a very specific order)

- Business value first: Leaders kept tying AI to close speed, forecast accuracy, working capital, and audit effort—not “innovation points.”

- Governance second: Not as red tape, but as the way to scale safely: data access, model risk, approvals, and clear ownership.

- Wow-factor last: The flashiest chatbot mattered less than whether it could be trusted on month-end, every month-end.

A quick tangent: the spreadsheet-to-chatbot pipeline

Finance teams default to familiar tools because they already hold the logic of the business. The pattern I heard was simple: start in Excel, then automate, then wrap it in a chat interface. It’s not glamorous, but it’s how financial services AI maturity actually grows.

- Analyst builds a model in a spreadsheet.

- Team standardizes inputs and definitions.

- Automation moves it into a controlled workflow.

- A chatbot becomes the front door, not the engine.

My wild-card analogy

AI in finance feels like adding a new colleague who’s brilliant… and also undocumented. They can draft a variance story in seconds, but you still need to ask: Where did that number come from? If the “colleague” can’t show work, I can’t sign off.

What I’m watching through 2026

- More practical AI trends: reconciliation, invoice exceptions, narrative reporting, and controls testing.

- Stronger governance as a product: policy + tooling + monitoring, not just a PDF.

- The quiet rise of internal AI platforms that keep data, prompts, and permissions inside the firm.

2) Generative AI in Financial Operations: the unsexy wins

In the expert interview with finance leaders, the most useful AI stories were not flashy. They were about shaving days off routine work and making outputs more consistent. That’s where generative AI in financial operations earns trust: in the “unsexy wins” that compound every month.

Intelligent process mapping: where cycle time actually drops

I start by mapping the process steps, handoffs, and “waiting time” inside the work. Then I ask: where do we write, explain, or search? Those are the best targets for generative AI.

- Month-end close: draft task checklists, summarize open items, and turn status notes into a clean close tracker.

- Variance narratives: convert driver analysis into consistent commentary, with links back to the source tables.

- Policy Q&A: answer “what does the policy say?” using approved documents, not memory.

Intelligent automation vs. “just another chatbot”: my 30-day pilot scope

The interview reinforced a simple point: a chatbot that only talks is not automation. In 30 days, I’d scope a pilot that produces a work product and leaves an audit trail.

- Pick one workflow (e.g., variance commentary for two business units).

- Connect to the data platform and document library with role-based access.

- Define inputs/outputs, review steps, and acceptance tests.

- Measure cycle time, rework rate, and reviewer edits.
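To make that last step less hand-wavy, here is a minimal sketch of how I might compute the three pilot measures from a log of generated drafts. Everything in it is an assumption for illustration: the `PilotRun` fields, the definition of rework (a draft sent back at least once), and measuring reviewer edits as a share of drafted words. None of this came from the interview.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class PilotRun:
    """One generated work product, e.g. a variance commentary draft (hypothetical schema)."""
    cycle_hours: float   # time from request to approved output
    sent_back: bool      # True if the reviewer returned the draft at least once
    words_drafted: int   # words in the AI draft
    words_changed: int   # words the reviewer edited before sign-off


def summarize(runs: list[PilotRun]) -> dict[str, float]:
    """Return average cycle time, rework rate, and share of drafted text edited."""
    if not runs:
        raise ValueError("no pilot runs to summarize")
    total_drafted = sum(r.words_drafted for r in runs)
    total_changed = sum(r.words_changed for r in runs)
    return {
        "avg_cycle_hours": mean(r.cycle_hours for r in runs),
        "rework_rate": sum(r.sent_back for r in runs) / len(runs),
        "reviewer_edit_share": total_changed / total_drafted if total_drafted else 0.0,
    }


# Illustrative numbers only, not real pilot data.
runs = [
    PilotRun(cycle_hours=4.0, sent_back=False, words_drafted=400, words_changed=40),
    PilotRun(cycle_hours=6.0, sent_back=True, words_drafted=600, words_changed=160),
]
print(summarize(runs))
```

The point of keeping it this small is that the same three numbers can be compared against the manual baseline for the same workflow before anyone argues about scaling.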

My cautionary note: hallucinations are less embarrassing than silent calculation errors

I can spot a weird sentence. I may not spot a quiet math mistake buried in a spreadsheet. So I prefer generative AI to draft and explain, while calculations stay in governed models. I also require citations, versioning, and human sign-off for anything external.

Example deliverables I actually want

- Board-ready commentary drafts with consistent structure and tone

- Reconciliation explanations that summarize breaks and likely causes

- Control evidence summaries that package screenshots, logs, and approvals

Small opinion: If it can’t plug into data platforms cleanly, it’s not an AI project—it’s a side quest.

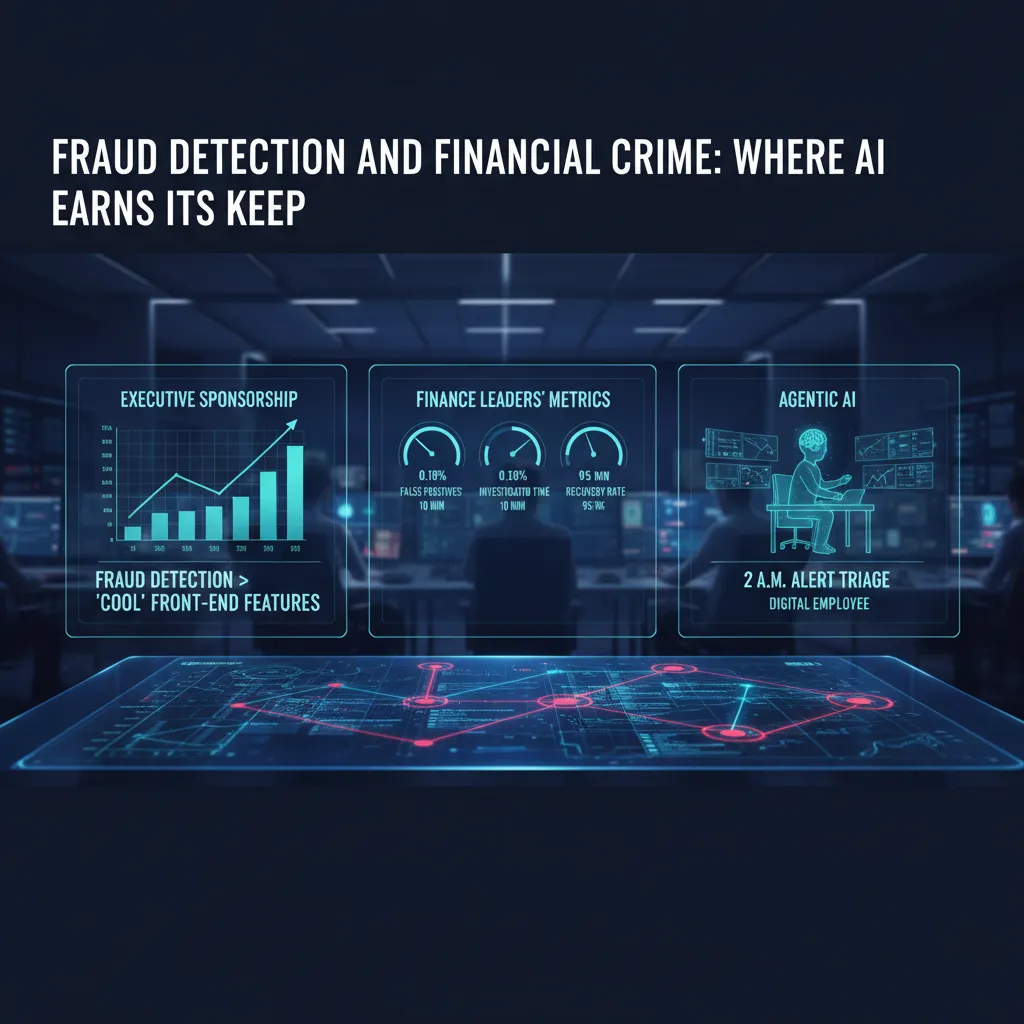

3) Fraud Detection and Financial Crime: where AI earns its keep

In the expert interview with finance leaders, one theme came through clearly: fraud detection AI gets stronger executive sponsorship than “cool” front-end features. As a CFO, I understand why. Fraud and financial crime sit right on the P&L. When AI reduces losses, shortens case cycles, and improves recovery, the value is direct, measurable, and easy to defend in budget reviews.

Why this use case wins sponsorship

Customer-facing AI can feel optional. Fraud detection and AML monitoring are not. They protect revenue, reduce chargebacks, and support compliance. That makes the business case less about “innovation” and more about risk, cost, and control.

Signals I care about (not just “model accuracy”)

In the interview, leaders kept returning to operational metrics. Accuracy is fine, but it does not run the team day to day. I track:

- False positives: how many good customers we flag by mistake

- Investigation time: minutes per alert, and time-to-close per case

- Recovery rates: dollars recovered, not only dollars prevented

- Alert volume: whether AI reduces noise or creates more work
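A rough sketch of how these four signals could be pulled from closed alerts follows. The `Alert` schema and field names are hypothetical. Note that "false positive share" here means the share of alerts that turned out to be legitimate activity, which is an operational measure and not the statistical false positive rate (that would also need the transactions we never flagged).

```python
from dataclasses import dataclass


@dataclass
class Alert:
    """One closed fraud alert (hypothetical schema)."""
    confirmed_fraud: bool     # outcome after investigation
    minutes_to_close: float   # analyst time spent on the alert
    loss_amount: float        # dollars lost on confirmed fraud
    recovered_amount: float   # dollars recovered afterwards


def fraud_ops_metrics(alerts: list[Alert]) -> dict[str, float]:
    """Summarize alert volume, false positives, investigation time, and recovery."""
    if not alerts:
        raise ValueError("no alerts to summarize")
    confirmed = [a for a in alerts if a.confirmed_fraud]
    total_loss = sum(a.loss_amount for a in confirmed)
    recovered = sum(a.recovered_amount for a in confirmed)
    return {
        "alert_volume": len(alerts),
        "false_positive_share": (len(alerts) - len(confirmed)) / len(alerts),
        "avg_minutes_per_alert": sum(a.minutes_to_close for a in alerts) / len(alerts),
        "recovery_rate": recovered / total_loss if total_loss else 0.0,
    }


# Illustrative numbers only: three good customers flagged, one real fraud case.
alerts = [
    Alert(False, 10, 0.0, 0.0),
    Alert(False, 10, 0.0, 0.0),
    Alert(False, 10, 0.0, 0.0),
    Alert(True, 30, 1000.0, 250.0),
]
print(fraud_ops_metrics(alerts))
```

Tracked week over week, these numbers show whether a new model is reducing noise or just moving the work around.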

Financial crime meets agentic AI

I can picture a near-term “digital employee” for financial crime: at 2 a.m., it triages alerts, groups related transactions, pulls KYC notes, and drafts a short case summary for the morning shift. It does not “approve” fraud decisions on its own, but it can handle the first pass so humans start with context, not a blank screen.

“If AI can cut the queue and raise the quality of each case file, the team stops drowning in alerts.”

Risk Management sidebar: better detection can add friction

Improved detection often means more step-ups: extra OTPs, ID checks, or transaction holds. That can protect us, but it can also hurt conversion and trust. I want clear thresholds and testing so we do not “solve fraud” by frustrating good customers.

What I’d ask in an expert interview

When you improved fraud detection, which controls did you redesign first—data, process, or people?

4) Regulatory Innovation + Regulatory Compliance: the sandbox isn’t a playground

Regulatory momentum I’m seeing: AI is moving from “pilot” to “policy”

In the expert interview with finance leaders, one theme came through clearly: regulators are no longer treating AI as a side experiment. What used to be a “pilot in one team” is now becoming policy across the firm, and it’s happening quickly. As a CFO, I feel this shift in budget conversations: spend is moving from model building to governance, evidence, and audit-ready controls. If we can’t explain how a model works, how it was trained, and how it is monitored, it won’t survive contact with real customers—or real supervision.

Sandbox innovation: why the FCA supercharged sandbox matters (even outside the UK)

The FCA’s “supercharged” sandbox keeps coming up in innovation talks because it signals a practical approach: test new AI and data-driven services with clearer guardrails. Even if you’re not UK-based, it matters because many financial services firms operate across borders, and sandbox patterns tend to travel. The sandbox is not a playground; it’s a structured way to learn what regulators will ask for later: documentation, controls, and consumer outcomes.

Adaptive compliance: controls that evolve as models change

AI models drift. Data changes. Vendors update. So our compliance design can’t be a one-time checklist. I’m pushing for controls that adapt without freezing delivery:

- Model change logs tied to approvals (what changed, why, impact).

- Ongoing monitoring for bias, performance, and data quality.

- Clear accountability for human review on high-risk decisions.

- Evidence packs that are easy to export for audit and regulators.
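As a minimal sketch of the first and last bullets, a change log can be a plain record type with an approval field, plus a function that exports it for audit. The `ModelChange` fields, the model name, and the JSON export format are my assumptions for illustration, not a regulatory template.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class ModelChange:
    """One entry in a model change log (hypothetical schema)."""
    model_id: str
    changed_on: date
    what_changed: str
    why: str
    impact: str
    approved_by: Optional[str] = None  # None means no approval recorded yet


def unapproved(changes: list[ModelChange]) -> list[ModelChange]:
    """Changes that should block release until someone signs off."""
    return [c for c in changes if c.approved_by is None]


def evidence_pack(changes: list[ModelChange]) -> str:
    """Export the log as JSON, oldest change first, for audit or regulators."""
    rows = sorted(changes, key=lambda c: c.changed_on)
    return json.dumps([asdict(c) for c in rows], default=str, indent=2)


# Illustrative entries only.
log = [
    ModelChange("aml-scoring", date(2025, 4, 15), "Vendor library upgrade",
                "Security patch", "No scoring change expected"),
    ModelChange("aml-scoring", date(2025, 3, 1), "Retrained on Q4 data",
                "Data drift", "Alert volume expected to fall",
                approved_by="model-risk-committee"),
]
print([c.what_changed for c in unapproved(log)])
print(evidence_pack(log))
```

The design choice that matters is that approval lives on the change record itself, so "what changed" and "who approved it" cannot drift apart.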

Digital identity, DeFi, and green finance: the unexpected trio

In the interview, leaders kept circling back to three areas that keep colliding: digital identity (who is the customer), DeFi (how value moves), and green finance (what we report and prove). AI sits in the middle—screening, scoring, monitoring, and reporting—so the compliance surface area expands fast.

My contrarian take: compliance teams are becoming product teams

My view is simple: compliance is now building “features” like consent flows, explainability screens, and automated controls. Whether they like it or not, compliance teams are becoming product teams—because good regulatory compliance is increasingly something the customer can see and feel.

5) Hyper-Personalised Banking, Voice AI, and the awkward reality of Customer Experiences

Engagement vs. creepiness (and where I draw the line)

In the interview, one theme came through clearly: personalisation works, but it can also feel like surveillance. As a CFO, I like higher conversion and lower churn. As a customer, I don’t want my bank to sound like it’s reading my mind. The boundary, for me, is simple: use data to help, not to hint. “Help” is reminding me a bill is due. “Hint” is referencing a late-night purchase pattern in a way that feels personal.

The stat that made me pause

One leader shared a number that stuck with me: AI personalisation can boost engagement by up to 200% and lift lifetime value by 25–35%. Those are big outcomes for retail banking and wealth management. But the same stat is also a warning: if we chase the upside without guardrails, we create distrust that wipes out the gains.

- Set boundaries: limit sensitive categories, avoid “because you did X” messaging.

- Make it opt-in: clear consent, easy settings, no dark patterns.

- Explain the value: “We’re showing this to reduce fees,” not “We noticed…”

Voice AI in finance (and my fear of yelling “agent”)

Voice technology came up as a practical channel: voice biometrics for authentication, faster support, and even portfolio insights in plain language. I see the appeal—hands-free banking is real convenience. Still, I have a very human worry: getting stuck in a loop and having to shout “agent!” at my bank. If we deploy voice AI, we need a fast escape hatch to a person, plus strong fraud controls.

Designing a “calm” customer experience

The best AI customer experience is often quieter: fewer notifications, better timing, and clearer language. I like rules such as:

- Notify only when the customer can act.

- Use short, neutral wording.

- Bundle updates instead of spamming.
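Two of these rules are simple enough to sketch in code: drop anything the customer cannot act on, and bundle what remains into one message per customer. The `Event` type and the `actionable` flag are hypothetical; deciding what counts as actionable is the hard part and stays a policy decision.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Event:
    """A candidate notification (hypothetical schema)."""
    customer_id: str
    text: str
    actionable: bool  # can the customer do something about it right now?


def build_digests(events: list[Event]) -> dict[str, str]:
    """Keep only actionable events and bundle them into one message per customer."""
    grouped: dict[str, list[str]] = defaultdict(list)
    for event in events:
        if event.actionable:
            grouped[event.customer_id].append(event.text)
    return {cid: "; ".join(texts) for cid, texts in grouped.items()}


# Illustrative events only.
events = [
    Event("c1", "Card payment due Friday", True),
    Event("c1", "We updated our logo", False),
    Event("c1", "Confirm your new address", True),
    Event("c2", "Monthly newsletter is out", False),
]
print(build_digests(events))
```

In this example the second customer receives nothing at all, which is exactly the "quieter" outcome I want.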

A tiny story: respectful fraud prevention

My best recent experience was a proactive fraud check. The message was calm: “We noticed unusual activity. Was this you?” Two buttons. No blame. No drama. That’s the standard I want for hyper-personalised banking: protective, not accusatory.

6) Agentic AI and Digital Employees: my favorite scary idea

What “agentic AI” means in plain English

In the expert interview with finance leaders, one theme stuck with me: the next wave is not AI that just writes or summarizes. It is AI that takes actions. Agentic AI can read a policy, check a system, decide a next step, and then do it—open a ticket, send an email, update a forecast, or trigger a workflow. That is powerful, and it is also where risk shows up fast.

Digital Employees on the finance org chart

I think of “Digital Employees” as named agents with a job description. In my org chart, they would “report” to a human process owner (like AP, FP&A, or Treasury) and be governed by Finance Ops and IT security.

- How I’d measure them: cycle time, error rate, policy exceptions, audit trail quality, and dollars recovered or protected.

- How I’d manage them: access rights, approval limits, and clear escalation rules.

Competitive advantage vs. operational chaos

Autonomy can create speed: fewer handoffs, faster close, better cash collection. But autonomy can also break controls. If an agent can change vendor master data, approve credits, or move cash, then one bad prompt, one wrong integration, or one unclear policy becomes a real financial event. As one leader implied in the interview, the goal is not “maximum autonomy” but controlled autonomy.

Portfolio management and planning: where I allow autonomy

I’d allow more agentic AI in planning tasks that are reversible and explainable: scenario modeling, variance commentary drafts, and rolling forecast updates with human sign-off. I would not allow autonomy in payments, revenue recognition decisions, or anything that changes customer pricing without approvals.

Hypothetical: invoice dispute negotiation

Imagine an agent that negotiates invoice disputes. It checks contract terms, pulls delivery proof, and proposes a settlement. Great—until it “helps” by offering a 10% discount to the wrong customer tier. Now we have margin leakage, precedent risk, and a messy customer conversation. That is why every agent needs guardrails like:

- pre-approved discount bands,

- customer segmentation rules,

- and a required human approval above a threshold.
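Those three guardrails can be expressed as a small check that runs before the agent is allowed to send any offer. This is a sketch under stated assumptions: the tier names, the discount bands, and the 5,000 approval threshold are made-up values, and a real version would read them from governed policy data instead of hard-coding them.

```python
from dataclasses import dataclass

# Hypothetical pre-approved discount bands: maximum discount (as a fraction) per customer tier.
DISCOUNT_BANDS = {"strategic": 0.10, "standard": 0.05, "small": 0.02}
# Hypothetical limit: a settlement giving away more than this amount needs a human.
APPROVAL_THRESHOLD = 5_000.00


@dataclass
class Proposal:
    """A settlement the agent wants to offer (hypothetical schema)."""
    customer_tier: str
    invoice_amount: float
    discount: float  # fraction of the invoice, e.g. 0.10 for 10%


def review(p: Proposal) -> str:
    """Return 'allow', 'escalate: ...', or 'reject: ...' for a proposed settlement."""
    max_discount = DISCOUNT_BANDS.get(p.customer_tier)
    if max_discount is None:
        return "reject: unknown customer tier"           # segmentation rule
    if not 0 <= p.discount <= max_discount:
        return "reject: discount outside pre-approved band"
    if p.invoice_amount * p.discount > APPROVAL_THRESHOLD:
        return "escalate: human approval required"       # threshold rule
    return "allow"


# The scenario above: a 10% offer to a tier that is only cleared for 5%.
print(review(Proposal("standard", 20_000, 0.10)))
print(review(Proposal("strategic", 100_000, 0.10)))
print(review(Proposal("strategic", 20_000, 0.10)))
```

The agent never decides whether a discount is allowed; it only proposes, and the check decides whether the proposal goes out, gets escalated, or gets blocked.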

7) Conclusion: the AI-Powered Future is a governance story (with receipts)

After listening to finance leaders in the Expert Interview: Finance Leaders Discuss AI, I’m convinced the “AI future” in financial services is not a single tool or model. It’s a governance story—one we will have to prove with receipts. The threads connect clearly: generative AI is about productivity (faster drafts, faster analysis, faster close support), fraud detection is about protection (spotting patterns humans miss), personalisation is about growth (better offers, better service, better retention), and regulatory innovation is about permission (earning the right to scale).

My final opinion is simple: Responsible AI is the only way to scale without a reputational hangover. The leaders I heard were not chasing hype; they were building confidence. They talked about making AI useful, but also making it explainable, monitored, and safe enough to survive real scrutiny—from customers, regulators, and our own boards.

If I could steal a practical checklist from these finance leaders, it would be this: start with data platforms that are clean and well-owned, add controls that look like finance controls (access, approvals, testing, monitoring), invest in talent that can translate between finance, risk, and tech, and insist on a “kill switch”—a clear way to pause or roll back an AI feature when it behaves badly. In my world, “we can’t turn it off” is not an acceptable answer.

One wild card I can’t stop thinking about: imagine your next audit includes a conversation log review. Not just model outputs, but prompts, user behavior, and how decisions were influenced. Are we ready to show what was asked, what was answered, what was used, and what was ignored? If not, governance is still a slide deck, not an operating system.

I’m cautiously optimistic. AI can make finance faster and smarter—but I’m keeping my questions sharp, because the winners won’t just deploy AI. They’ll govern it.

TL;DR: Finance leaders expect an AI-powered future by 2026: more internal AI platforms, faster fraud detection, hyper-personalised banking, and voice AI—if responsible AI, regulatory compliance, and data platforms keep up. Agentic AI is the wildcard: huge upside, sharp governance edges.
