AI Predictions 2026: Product News I’m Watching

Last week I opened my notes app to clip “one quick AI update” and, forty minutes later, I’d built an accidental bingo card: agents doing work, robots getting jobs, ads being generated end-to-end, and Google trying to make its AI products feel like one coherent room instead of five noisy hallways. This outline is my attempt to turn that messy pile into something you can actually use—while keeping the weird little details that make product AI news worth following in the first place.

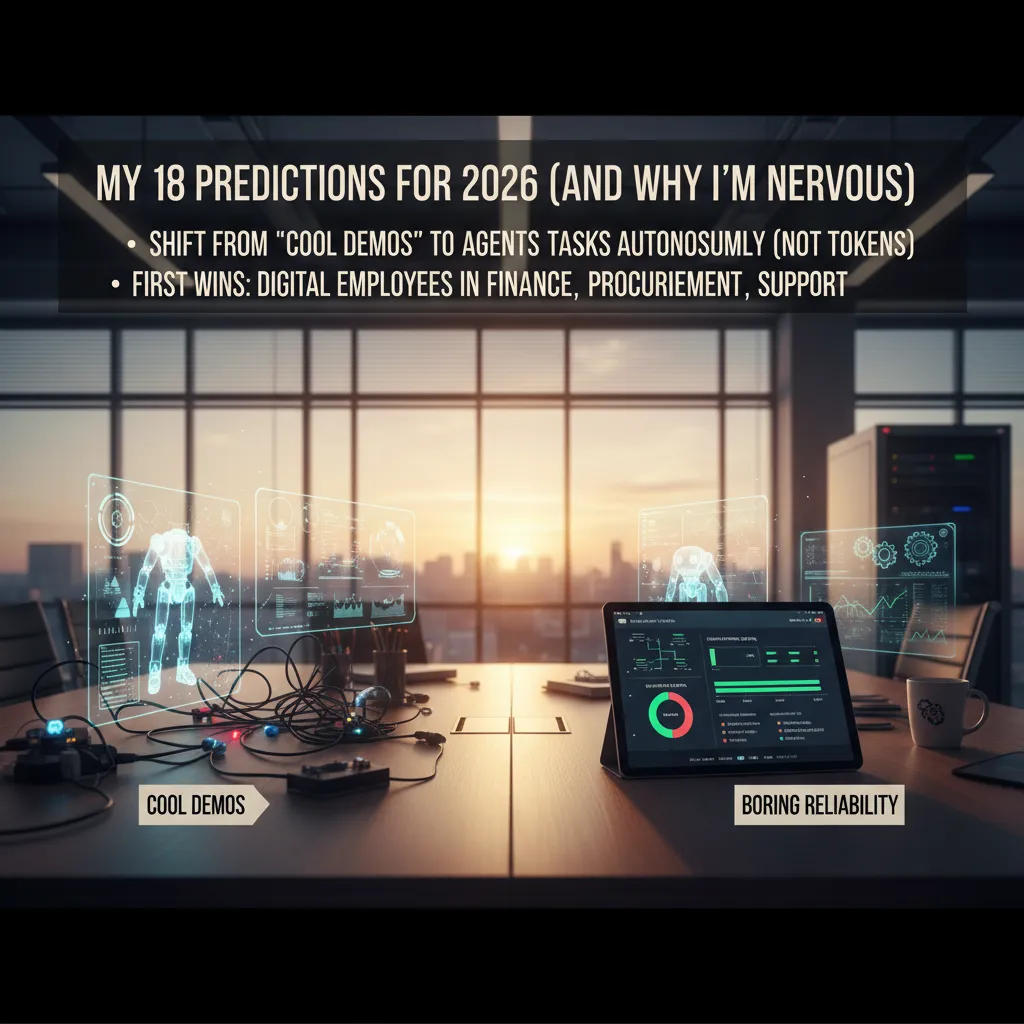

1) My 18 Predictions for 2026 (and why I’m nervous)

Across the latest product AI news and releases, I’m tracking a clear shift from “cool demos” to boring reliability, the kind that survives a Monday morning outage. That’s the part that makes me nervous: reliability is harder than novelty, and it forces teams to ship less magic and more plumbing.

The headline metric I keep repeating in meetings is simple: AI agents completing tasks autonomously (not tokens, not model size, not benchmark scores). If an agent can’t finish a real workflow end-to-end—under messy permissions, missing data, and changing priorities—it’s not a product yet.
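
To make that metric concrete, here is a minimal sketch of how I might compute it. The `WorkflowRun` record and its fields are my own hypothetical names, not any vendor's schema. The only assumption is that a run counts as a success when the agent reached the end state and no human had to take over.

```python
from dataclasses import dataclass


@dataclass
class WorkflowRun:
    """One attempt by an agent at a real workflow (hypothetical schema)."""
    workflow: str
    completed: bool        # did the agent reach the end state?
    human_takeover: bool   # did a person have to finish or fix it?


def autonomous_success_rate(runs: list[WorkflowRun]) -> float:
    """Share of runs finished end-to-end with no human takeover."""
    if not runs:
        return 0.0
    wins = sum(1 for r in runs if r.completed and not r.human_takeover)
    return wins / len(runs)


runs = [
    WorkflowRun("invoice-reconcile", completed=True, human_takeover=False),
    WorkflowRun("invoice-reconcile", completed=True, human_takeover=True),
    WorkflowRun("vendor-compare", completed=False, human_takeover=False),
    WorkflowRun("vendor-compare", completed=True, human_takeover=False),
]
print(f"{autonomous_success_rate(runs):.0%}")  # 2 of 4 runs -> 50%
```

Counting a run that needed a human rescue as a failure is a deliberately strict choice; a softer version could track "completed with help" as its own bucket.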

The 18 predictions I’m watching

- Reliability becomes the main differentiator.

- “Agent success rate” replaces token bragging.

- Ops teams adopt agents first (finance, procurement, support).

- Agents get stronger audit logs by default.

- More human-in-the-loop checkpoints for risky actions.

- Permissioning becomes a product category.

- Tool-use libraries standardize across vendors.

- Agents learn company policy, not just prompts.

- Better fallback behavior during outages.

- More on-prem and private deployments for regulated teams.

- Procurement agents compare vendors faster than humans.

- Support agents resolve more tickets without escalation.

- Finance agents reconcile invoices with fewer errors.

- Calendars become the real “agent UI.”

- AI product news matters only if it changes my schedule.

- Teams measure time saved per workflow, not per chat.

- More “digital employee” roles appear in org charts.

- Wild card: a “2026 office” where agents book vendors, negotiate terms, and hand me a clean summary before lunch.

My quick test for product AI news: does it change my calendar, or just my Twitter feed?

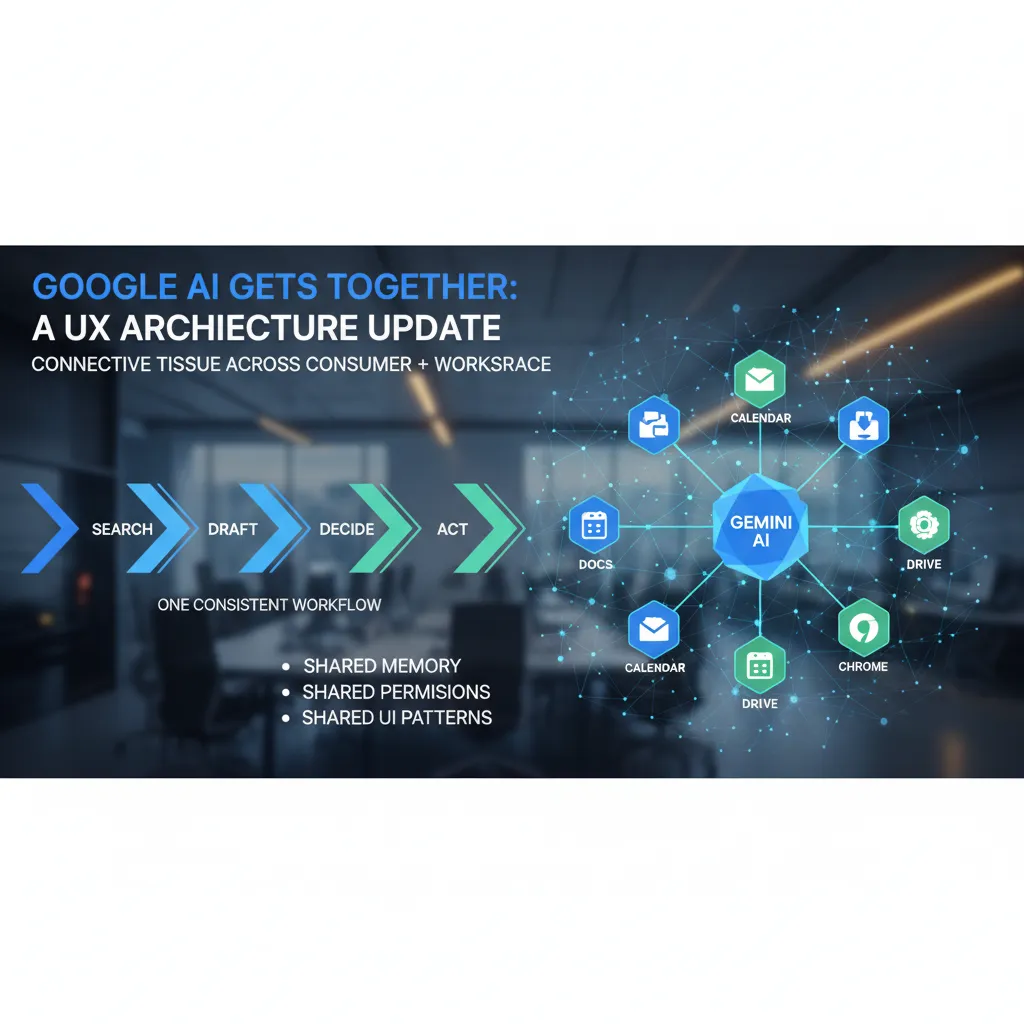

2) Google AI Gets Together: a UX architecture update I’ve wanted

In the latest round of product AI news, the Google thread I keep watching is simple: can Google AI finally feel like one product? I want one consistent workflow (search → draft → decide → act) instead of five overlapping tools that each do 80% of the job and then hand me off to another tab.

What a “Google AI architecture update” means in product terms

When I say “architecture,” I’m not talking about model benchmarks. I mean the UX plumbing that makes AI usable across Google products:

- Shared memory: the assistant remembers what matters (with clear controls), so I don’t re-explain context in every app.

- Shared permissions: one place to manage what Gemini can access in Drive, Gmail, Calendar, and beyond.

- Shared UI patterns: the same prompt box, citations, and action buttons everywhere, so teammates don’t need retraining.

Gemini as connective tissue across consumer + workspace

If Google Gemini AI becomes the connective layer, I expect it to bridge consumer moments (Search, Android, Photos) with work moments (Docs, Sheets, Meet). The win is not “more AI features.” The win is fewer decisions about where to start, and more confidence that the output can turn into an action—create a doc, schedule a meeting, file a ticket—without copy/paste.

My quick test: explain data flow in one sentence

Can a non-technical teammate explain where their data goes in one sentence?

If the answer is fuzzy, the product isn’t ready for broad adoption. I’m looking for plain-language permission prompts and a simple data map.

Small aside: I’ve seen consolidation efforts fail when branding wins over usability—new names, new icons, same mess. I’m hoping Google resists that and ships a real Google AI UX architecture update, not just a re-label.

3) AI Agents Year 2026: from copilots to coworkers

In the latest Product AI News updates I’m tracking, the biggest leap isn’t “better chat.” It’s multi-agent systems that can collaborate and keep working without me babysitting the prompts. I’m watching products move from single copilots to small teams of agents that plan, delegate, and verify each other’s work.

The leap I’m watching: agents that coordinate

The pattern I see: one agent breaks a job into steps, a second agent checks policy and risk, and a third agent executes in tools. When this works, it feels less like prompting and more like assigning tasks.
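
The three-role pattern above can be sketched in a few lines. This is a toy under loud assumptions: the "agents" are plain functions, the plan is hard-coded, and the refund limit is a made-up policy. No real agent framework or vendor API is implied.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    action: str
    amount: float = 0.0


@dataclass
class Result:
    executed: list[str] = field(default_factory=list)
    blocked: list[str] = field(default_factory=list)


def plan(job: str) -> list[Step]:
    """Planner agent: break a job into steps (hard-coded for illustration)."""
    return [
        Step("fetch_invoice"),
        Step("match_purchase_order"),
        Step("issue_refund", amount=5000.0),
    ]


def check_policy(step: Step, refund_limit: float = 1000.0) -> bool:
    """Policy agent: allow the step unless it breaks a hypothetical rule."""
    return not (step.action == "issue_refund" and step.amount > refund_limit)


def execute(step: Step) -> str:
    """Executor agent: stand-in for a real tool call."""
    return f"done:{step.action}"


def run(job: str) -> Result:
    """Coordinate the three roles: plan, check each step, then execute or block."""
    result = Result()
    for step in plan(job):
        if check_policy(step):
            result.executed.append(execute(step))
        else:
            result.blocked.append(step.action)  # would be routed to a human
    return result


outcome = run("reconcile invoice")
print(outcome.executed)
print(outcome.blocked)
```

The part I care about is the shape: the executor never sees a step the policy check rejected, and blocked steps are kept so someone can review them later.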

Where multi-agent systems land in the enterprise

Enterprise AI agents are showing up in places with repeatable workflows and clear handoffs:

- Approvals: routing requests, collecting context, and preparing decision summaries

- Procurement: vendor comparisons, contract clause checks, renewal reminders

- Support triage: tagging, prioritizing, drafting replies, escalating with evidence

- Supply chain automation: monitoring exceptions, updating ETAs, triggering reorder steps

Safeguards I actually use

My practical checklist for deploying AI agents is simple:

- Scoped tools (least privilege; no “god mode” access)

- Audit logs (every action, input, and output recorded)

- Rate limits (to prevent runaway loops and surprise bills)

- Human kill switch (one click to pause or revoke credentials)
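
Here is a rough sketch of how those four safeguards could sit in one wrapper around tool calls. `AgentGateway` and everything inside it are hypothetical names I made up for illustration. A real deployment would also need persistent logs, time-windowed rate limits, and actual credential revocation.

```python
import time
from typing import Any, Callable


class AgentGateway:
    """Wraps tool calls with the four safeguards (illustrative only)."""

    def __init__(self, allowed_tools: dict[str, Callable[..., Any]], max_calls: int):
        self.allowed_tools = allowed_tools  # scoped tools: name -> callable
        self.max_calls = max_calls          # crude per-session rate limit
        self.calls = 0
        self.paused = False                 # human kill switch state
        self.audit_log: list[dict] = []

    def pause(self) -> None:
        """Kill switch: every later call is denied until someone resets it."""
        self.paused = True

    def call(self, tool: str, **kwargs: Any) -> Any:
        entry = {"ts": time.time(), "tool": tool, "input": kwargs, "output": None}
        self.audit_log.append(entry)  # record every attempt, including denials
        if self.paused:
            entry["status"] = "denied: paused by human"
        elif tool not in self.allowed_tools:
            entry["status"] = "denied: tool not in scope"
        elif self.calls >= self.max_calls:
            entry["status"] = "denied: rate limit"
        else:
            self.calls += 1
            entry["output"] = self.allowed_tools[tool](**kwargs)
            entry["status"] = "ok"
            return entry["output"]
        raise PermissionError(entry["status"])


gateway = AgentGateway(
    allowed_tools={"lookup_invoice": lambda invoice_id: {"id": invoice_id}},
    max_calls=5,
)
print(gateway.call("lookup_invoice", invoice_id="INV-1"))
gateway.pause()  # the human kill switch
try:
    gateway.call("lookup_invoice", invoice_id="INV-2")
except PermissionError as err:
    print(err)
print(len(gateway.audit_log))
```

Logging the attempt before checking permissions is intentional: denied calls are often the most interesting lines in an audit trail.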

My slightly contrarian take

The first great agent product will feel boring—like email rules, but smarter.

Example I’m watching for: an agent that reconciles invoices, flags anomalies, and pings a second agent to negotiate vendor credits—then routes the final recommendation for approval.

4) World Simulation Models: why AI video generation suddenly matters

I used to treat AI video generation as marketing candy: fun demos, flashy clips, and not much more. But while tracking product AI news lately, I’ve changed my mind. Now it looks like a serious training ground for world simulation models—systems that help AI predict what might happen next in a scene.

What clicked for me: “useful” beats “perfect”

The big shift was realizing that simulators don’t have to be perfect. They have to be useful for planning and safety testing. If a model can generate many “what if” futures—slippery floors, blocked paths, weird lighting—it can help an AI agent practice decisions before it ever touches real hardware.

The namedrops I keep hearing

Two names keep coming up in conversations and release cycles: OpenAI Sora and DeepMind Genie 3. I’m watching them less as “video tools” and more as early signs of a broader shift: video generation as a way to build and test internal physics, cause-and-effect, and action outcomes.

Why this matters for products (not just demos)

This connects directly to the kind of Product AI News I care about: better simulations → better robotics → better autonomy in messy environments. When an AI can rehearse in a simulated world, teams can iterate faster, test edge cases, and reduce real-world trial-and-error.

- Robotics: safer training before deployment

- Autonomy: better handling of unpredictable scenes

- Operations: faster testing without expensive field runs

It’s like giving AI a cheap, fast rehearsal studio before the real stage.

5) Physical AI Brain/Body: robots, factories, and the Atlas moment

Physical AI is the point where software stops being polite and starts moving furniture. In the recent Product AI News updates I’m watching, the theme is clear: models are getting “bodies,” and that changes what product news even means. A new chatbot feature is easy to roll back. A new robot behavior can dent a pallet rack.

The Atlas moment: “oh wow” goes industrial

Boston Dynamics’ Atlas moving toward being production-ready is the specific release I can picture landing in a real factory. Not as a demo video, but as a line item in a deployment plan: shift coverage, uptime targets, spare parts, and training. That’s the moment physical AI stops being a lab story and becomes an operations story.

The “brain upgrade” is bigger than one robot

The Gemini brain upgrade angle—Google and DeepMind pushing robotics intelligence—matters more than any single machine. If a shared “robot brain” improves quickly, the same gains can spread across fleets: arms, mobile bases, warehouse pickers, and inspection bots. I’m watching for product releases that look like:

- Better perception in messy, changing spaces

- More reliable planning for multi-step tasks

- Safer human-robot interaction without slowing work to a crawl

Autonomous robot factories: what really changes

When robots become more autonomous, the big shift isn’t just headcount. It’s safety, maintenance, and job design. I expect more “factory OS” features: audit logs, incident replay, and clear limits on what a robot can do.

Physical failure is loud, expensive, and sometimes dangerous.

Personally, I’m equal parts excited and wary. The upside is huge productivity. The downside is that mistakes don’t stay on a screen—they happen in the aisle.

6) Generative AI Ad Creation: the marketing shift I didn’t ask for

One product update I can’t ignore is the push toward fully automated ad creation. Meta’s plan sounds simple: upload a product image + set a budget → AI generates and runs the campaign. From a workflow view, it’s clean. From a human view, it feels like a switch got flipped.

As a consumer, I’m bracing for “perfectly okay” ads

If every brand can generate endless variations, I expect a flood of ads that are polished, on-brand-ish, and oddly similar. Not terrible—just everywhere. The internet already has too much noise, and generative AI ad creation could make that noise cheaper to produce.

As a builder, I’m watching the tooling wars

On the product side, I’m watching which platforms win the “AI campaign manager” layer: creative generation, audience targeting, budget pacing, and reporting in one loop. The best tools won’t just make images and copy; they’ll make decisions, then explain them in plain language.

Targeting gets weird when the model optimizes too well

AI ad targeting raises awkward questions: who is accountable when the model optimizes too well? If the system finds a sensitive audience segment, or learns that fear-based wording converts, is that on the advertiser, the platform, or the model? “The algorithm did it” won’t be a good answer in 2026.

What I’d do: a small playbook

- Start with strict brand guardrails: banned claims, tone rules, required disclaimers.

- Limit degrees of freedom: a few templates, fixed offers, tight audience rules.

- Expand slowly: let AI test headlines first, then visuals, then landing page variants.

- Review weekly: keep a human approval step for top-spend ads.
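
The first step of that playbook, strict brand guardrails, is easy to prototype before any ad platform is involved. The sketch below is a plain text check with made-up rules (the banned words, the disclaimer, and the length cap are all hypothetical). It does not call any real ads API.

```python
import re

# Hypothetical brand rules; a real team would load these from a reviewed config.
BANNED_CLAIMS = ["guaranteed", "cure", "risk-free"]
REQUIRED_DISCLAIMER = "Terms apply."
MAX_HEADLINE_CHARS = 60


def guardrail_violations(headline: str, body: str) -> list[str]:
    """Return the list of rule violations for one generated ad variant."""
    problems = []
    text = f"{headline} {body}".lower()
    for claim in BANNED_CLAIMS:
        # Whole-word match so "cure" does not flag "secure".
        if re.search(rf"\b{re.escape(claim)}\b", text):
            problems.append(f"banned claim: {claim}")
    if REQUIRED_DISCLAIMER.lower() not in body.lower():
        problems.append("missing disclaimer")
    if len(headline) > MAX_HEADLINE_CHARS:
        problems.append("headline too long")
    return problems


variants = [
    ("Guaranteed glow in 7 days", "Try our serum today."),
    ("A gentler daily serum", "Try it today. Terms apply."),
]
for headline, body in variants:
    print(headline, "->", guardrail_violations(headline, body) or "ok")
```

A check like this only catches the obvious cases. It is a filter in front of the human approval step, not a replacement for it.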

Wild card

I can picture a tiny brand in 2026 running thousands of ad variants like a lab experiment—each one small, measurable, and disposable. That’s powerful, and a little unsettling.

7) AI Research Lab Assistant + CES 2026 health gadgets (a hopeful detour)

In the stream of product AI news, the storyline that keeps me optimistic is the AI research lab assistant. Not because it sounds flashy, but because it points to faster science and better collaboration. If 2026 is the year we stop treating AI like a chat box and start treating it like lab infrastructure, I’m in.

How I picture an AI research lab assistant

I imagine a system that lives inside the tools researchers already use: protocols, instruments, and shared docs. It would suggest experiments based on prior results, help run parts of a workflow, and keep a clean lab notebook trail that is easy to audit later.

- Experiment suggestions: proposes controls, sample sizes, and next-step tests

- Workflow help: schedules runs, checks reagent inventory, flags conflicts

- Notebook integrity: timestamps changes and links data to methods
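
For the notebook integrity idea, one way I can imagine it working is an append-only log where each entry carries a timestamp, a link from data to method, and a hash of the previous entry. This is my own assumption about a possible design, not a description of any shipping lab product, and the field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(entry: dict) -> str:
    """Stable SHA-256 over the entry's fields."""
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode()).hexdigest()


def append_entry(notebook: list[dict], method_id: str, data_file: str, note: str) -> None:
    """Add a timestamped entry that links data to a method and to the prior entry."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "method_id": method_id,
        "data_file": data_file,
        "note": note,
        "prev_hash": notebook[-1]["hash"] if notebook else "",
    }
    entry["hash"] = _digest(entry)  # digest is computed before "hash" is stored
    notebook.append(entry)


def verify(notebook: list[dict]) -> bool:
    """Check that no entry was edited or reordered after it was written."""
    prev = ""
    for entry in notebook:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev or _digest(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


notebook: list[dict] = []
append_entry(notebook, "protocol-12", "run_001.csv", "baseline measurement")
append_entry(notebook, "protocol-12", "run_002.csv", "repeat with new reagent lot")
print(verify(notebook))           # untouched log
notebook[0]["note"] = "edited later"
print(verify(notebook))           # tampering is detected
```

The chain makes silent edits detectable, which is the property an auditor cares about. It does not stop someone from rewriting the whole log, so a real system would also need to store the latest hash somewhere independent.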

CES 2026 healthcare innovation I’m watching

On the consumer side, CES 2026 health gadgets feel like they are moving from “fitness toys” to real monitoring tools. I’m watching for 360° body scanning mirrors and high-tech scales that track trends over time, not just single readings. If these devices can explain uncertainty and avoid false alarms, they could help people notice changes earlier and share better context with clinicians.

A tiny, human worry

Here’s my concern: when the lab assistant is “right,” will junior researchers learn less? If the tool always picks the best experiment, beginners may skip the messy thinking that builds intuition.

My rule of thumb: tools should increase curiosity, not just throughput.

Conclusion: the product AI news filter I’m using now

After tracking Product AI News week after week, I’ve stopped asking “Is this model bigger?” and started asking one simple question: does this update reduce real friction, or just add novelty? If it doesn’t save time, cut steps, lower risk, or make a team more confident in the output, I treat it as noise—even if the demo looks great.

With that filter, I’m watching three convergence points that keep showing up across releases and roadmaps. First is agents + unified UX: tools are moving from single features to end-to-end flows, where an agent can plan, act, and report inside one clean interface. Second is simulation + robotics: better synthetic training, safer testing, and faster iteration are turning “robotics progress” into something product teams can actually ship. Third is ads + measurement: as AI changes creative and targeting, the real battle is proving lift, attribution, and trust in the numbers.

Next week, I’m keeping it practical. I’ll pick one agent workflow I can run daily (not ten), define a short set of enterprise AI metrics that leadership will accept, and run a small pilot with clear before-and-after results. My goal is to learn what breaks in the real world: permissions, data quality, handoffs, and the human review step that never goes away.

One last imperfect aside: I miss when “AI news” was mostly model releases. Now it’s strategy, labor, and trust—who owns the workflow, who gets displaced, and who is accountable when the system is wrong.

That’s why I keep coming back to Product AI News. The releases that matter are the ones that change how work gets done, not just how impressive the tech sounds.

TL;DR: 2026 looks like the year AI stops being measured by tokens and starts being judged by tasks completed autonomously. Multi-agent systems, Google’s Gemini consolidation, world simulation models built on video generation, Meta’s automated AI ad creation and targeting, and production-ready physical AI robots like Boston Dynamics’ Atlas are the product threads to watch, and to pilot early with clear enterprise AI metrics.
