AI News Trends 2025–2026: Agents, Edge, Reality

Last fall I caught myself doom-scrolling AI news at 1:12 a.m., convinced I’d missed “the next big thing” because three different threads said three different futures were inevitable. The morning after, I did what I always do when the headlines get too loud: I made a messy spreadsheet. Not a perfect one—more like a kitchen-table map of what keeps repeating across sources, demos, and real deployments. Here’s what’s changing in AI news from 2025 into 2026, the parts I think will stick, and the parts I’m politely side-eyeing.

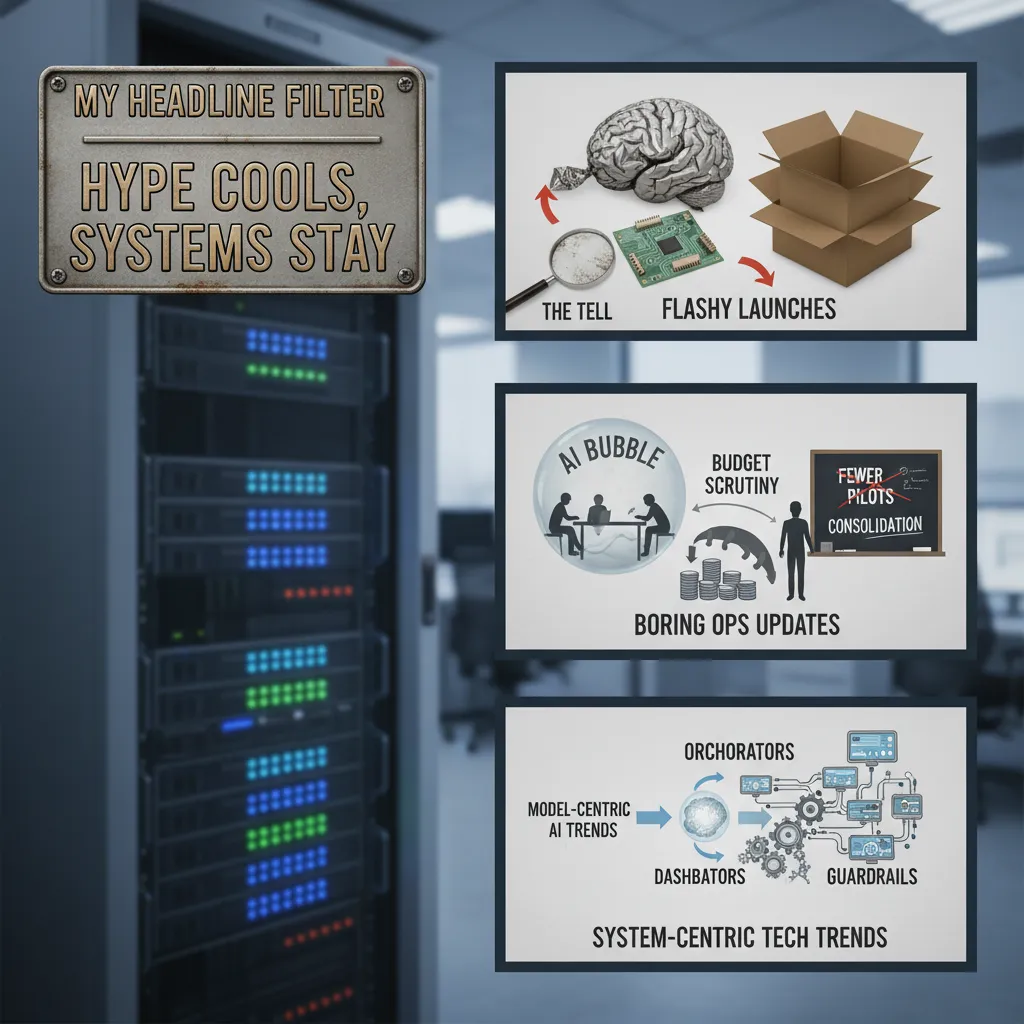

1) My “headline filter”: hype cools, systems stay

When I scan AI news in 2025–2026, I’m paying less attention to flashy model launches and more attention to boring ops updates. New features are fun, but the “tell” is usually in the release notes: uptime, cost controls, audit logs, role-based access, and how teams actually ship changes without breaking production. Those details don’t trend on social, yet they decide whether AI becomes a real tool or a recurring fire drill.

Why “boring” updates matter more than big announcements

- Ops changes show intent: vendors invest in what customers demand day-to-day.

- Reliability beats novelty: a stable workflow outperforms a smarter demo.

- Compliance is the new feature: logging, redaction, and approvals are now table stakes.

AI bubble deflation: what it looks like inside an org

If the AI bubble cools, it won’t feel like a crash. It will feel like procurement. In my experience, deflation shows up as tighter questions and fewer “just try it” experiments.

- Budget scrutiny: “What’s the payback period?” replaces “What’s possible?”

- Fewer pilots: teams stop running five small proofs and pick one path.

- More consolidation: one platform, fewer tools, shared governance.

From model-centric to system-centric AI trends

The trend I trust most is the shift from “Which model?” to “Which system?” That means more focus on:

- Orchestrators that route tasks across tools and agents

- Dashboards that track cost, latency, quality, and usage

- Guardrails like policy checks, safe prompts, and human approvals

In other words, the winning teams treat AI like software operations, not magic.

A tiny tangent: when killing a chatbot was a win

One day my team shut down a chatbot project after weeks of testing. The model was “good,” but the workflow was wrong: users needed a clear intake form, a searchable knowledge base, and a handoff to support—not a chat window. We kept the retrieval pipeline, added a simple triage screen, and cut ticket time anyway. We killed the bot and shipped the system.

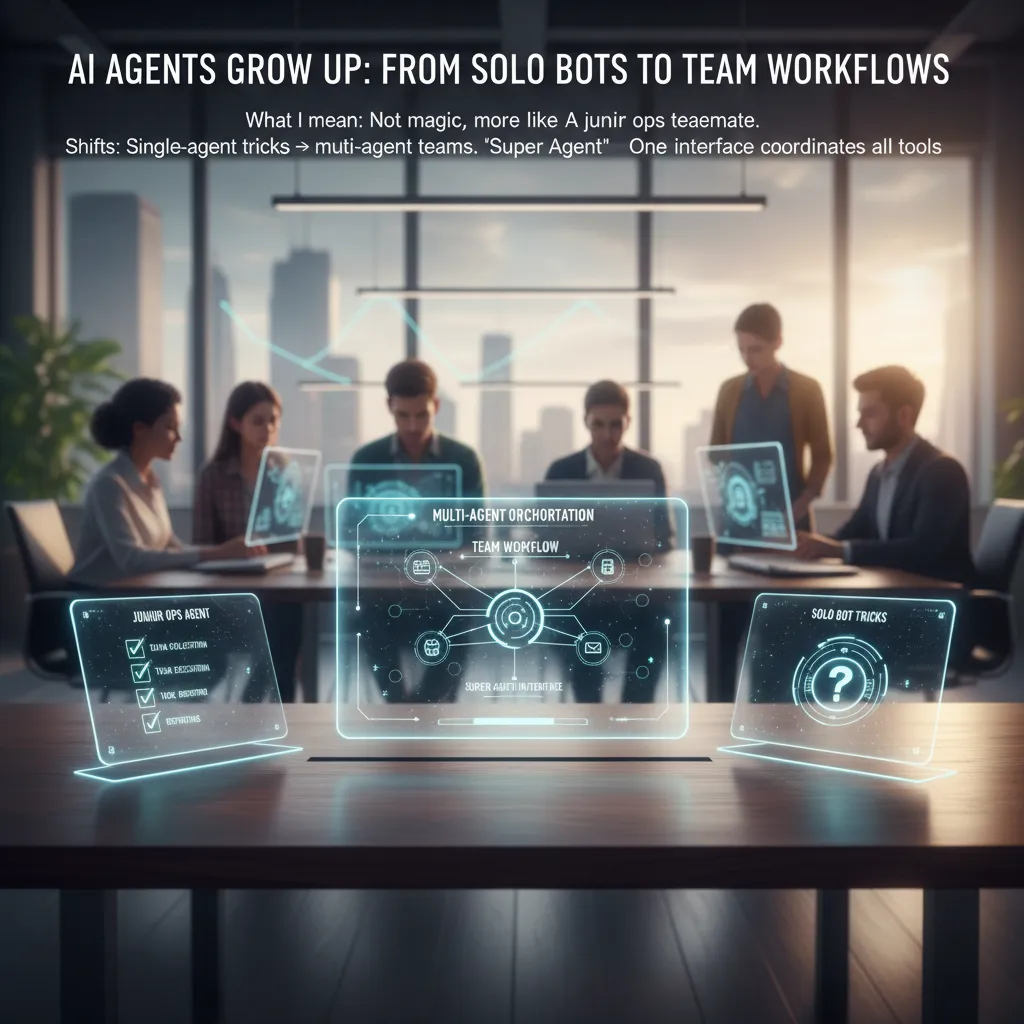

2) AI agents grow up: from solo bots to team workflows

What I mean by “AI agents” (and what I do not mean)

When I say AI agents, I don’t mean magic robots that “understand everything.” In the 2025–2026 AI news cycle, agents are better described as a junior ops teammate who follows checklists: read a request, gather context, take a few tool actions, and report back. They can be helpful, but they still make mistakes, and they still need clear rules.

In practice, an agent is a workflow runner with language skills—not a mind.

Agentic AI shifts: from single tricks to team workflows

Earlier “agent” demos often showed one bot doing one impressive task. What’s changing in 2025–2026 is the move toward multi-agent orchestration: several specialized agents working together, tracked in dashboards. One agent drafts, another checks policy, another pulls numbers, and a final one prepares a handoff for a human.

- Planner agent: breaks work into steps

- Tool agent: runs searches, updates docs, files tickets

- Reviewer agent: checks style, risk, and missing info

- Reporter agent: summarizes outcomes and next actions
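To make the four roles above concrete, here is a minimal sketch in Python. It is not how any particular agent framework works: the `Job` class, the function names, and the comma-splitting “planning” rule are all hypothetical stand-ins, and the tool agent only pretends to call tools. The point is the shape: each role reads and updates shared state, then hands it on.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Job:
    """Shared state handed from one agent to the next (hypothetical shape)."""
    request: str
    steps: List[str] = field(default_factory=list)
    results: List[str] = field(default_factory=list)
    issues: List[str] = field(default_factory=list)
    summary: str = ""


def planner(job: Job) -> Job:
    # Hypothetical planning rule: one step per comma-separated clause.
    job.steps = [part.strip() for part in job.request.split(",") if part.strip()]
    return job


def tool_agent(job: Job) -> Job:
    # Stand-in for real tool calls (searches, doc updates, ticket filing).
    job.results = [f"done: {step}" for step in job.steps]
    return job


def reviewer(job: Job) -> Job:
    # Only checks for missing info here; style and risk checks are omitted.
    if not job.steps:
        job.issues.append("no actionable steps found")
    return job


def reporter(job: Job) -> Job:
    status = "needs human review" if job.issues else "ready for handoff"
    job.summary = f"{len(job.results)} step(s) completed; {status}"
    return job


# The "orchestrator" is just an ordered list of roles in this sketch.
PIPELINE: List[Callable[[Job], Job]] = [planner, tool_agent, reviewer, reporter]


def run(request: str) -> Job:
    job = Job(request=request)
    for agent in PIPELINE:
        job = agent(job)
    return job


if __name__ == "__main__":
    print(run("pull Q3 numbers, draft summary, file ticket").summary)
```

Keeping the pipeline as plain data (a list of callables) is a deliberate choice in this sketch: it makes the order of roles easy to inspect, log, and change, which is most of what a dashboard needs.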

The “super agent” interface: exciting and slightly terrifying

I keep seeing the “super agent” idea: one chat-like interface that coordinates my calendar, docs, email, CRM, and ticketing system. It’s exciting because it reduces tab-switching and turns requests into actions. It’s also terrifying because one interface can become a single point of failure. If it misreads intent, it can schedule the wrong meeting, edit the wrong doc, or close the wrong ticket—fast.

Where safeguards show up (especially for high-value work)

As agents touch real systems, safeguards become the headline feature. For high-stakes workflows like demand forecasting, I expect more:

- Approvals before writes (send, purchase, publish)

- Sandboxing to test actions safely

- Audit trails so every step is traceable

- Stop buttons to pause or roll back runs

In other words, the agent trend is not just “smarter bots,” but managed automation that teams can monitor, control, and trust.
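Here is a rough sketch of how those safeguards could fit together in code. Everything in it is an assumption for illustration: `GuardedRunner`, the `approve` hook, and the outcome strings are made-up names, not any vendor’s API. It covers approvals before writes, a sandbox (dry-run) mode, an audit trail, and a stop button; rollback is left out because it depends on the system being written to.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# The write actions named in the list above; anything else is treated as a read.
WRITE_ACTIONS = {"send", "purchase", "publish"}


@dataclass
class GuardedRunner:
    approve: Callable[[str, str], bool]  # hypothetical human-approval hook
    dry_run: bool = True                 # sandbox mode: record, do not execute
    stopped: bool = False                # the "stop button"
    audit_log: List[dict] = field(default_factory=list)

    def stop(self) -> None:
        self.stopped = True

    def execute(self, action: str, target: str, do: Callable[[], None]) -> str:
        if self.stopped:
            outcome = "blocked: run stopped"
        elif action in WRITE_ACTIONS and not self.approve(action, target):
            outcome = "blocked: approval denied"
        elif self.dry_run:
            outcome = "simulated"
        else:
            do()
            outcome = "executed"
        # Every attempt is recorded, including the blocked ones.
        self.audit_log.append(
            {"action": action, "target": target, "outcome": outcome}
        )
        return outcome


if __name__ == "__main__":
    outbox: List[str] = []
    runner = GuardedRunner(
        approve=lambda action, target: target != "all-customers",
        dry_run=False,
    )
    runner.execute("send", "one-customer", lambda: outbox.append("one-customer"))
    runner.execute("send", "all-customers", lambda: outbox.append("all-customers"))
    runner.stop()
    runner.execute("read", "crm", lambda: None)
    for entry in runner.audit_log:
        print(entry)
```

Defaulting `dry_run` to `True` is the design choice I would defend hardest: an agent should have to be switched into making real changes, not switched out of it.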

3) Open source gets sharper (and more specific)

When I track AI news trends 2025–2026, I notice open-source AI is no longer framed as “free models you can download.” The real story is getting sharper: governance, repeatable fine-tuning recipes, and community QA that is happening in public—often under pressure from security teams, regulators, and enterprise buyers.

Open source is becoming a full stack, not a single release

In practice, I’m seeing more attention on the parts that make a model usable and safe:

- Licensing clarity (what you can deploy, modify, and resell)

- Data and training notes (what was used, what was excluded)

- Evaluation harnesses and red-team reports

- Fine-tuning guides that teams can reproduce, not just “tips”

Smaller domain models + multimodal reasoning

I’m also seeing fewer “one model to rule them all” headlines. Instead, open-source momentum is shifting toward smaller, domain-focused models that can be tuned for a job: customer support, code review, compliance search, or internal knowledge. At the same time, multimodal reasoning (text + images, and sometimes audio) is becoming more normal, which pushes communities to publish better test sets and clearer failure modes.

IBM Granite as an enterprise-friendly signal

A concrete example I keep coming back to is IBM Granite. To me, Granite models signal where enterprise-friendly open source is heading: practical sizes, clearer usage terms, and a focus on deployment realities like governance and evaluation. It’s less about hype and more about “Can my team ship this safely?”

Global diversification changes the map

Finally, the competitive map is widening. Chinese releases are adding pace and variety, while hardened open-source governance (stronger policies, more formal review, tighter security practices) is changing what I bookmark and trust. My reading list now includes not just model cards, but also governance docs, benchmark repos, and community issue trackers—because that’s where the real quality signals show up.

4) AI infrastructure and the hardware race (the quiet main character)

When I look at AI news trends in 2025–2026, the loud headlines are about agents and new models. But the quiet main character is still infrastructure. GPUs run the show today because they are flexible, widely supported, and already deployed at scale. Still, 2026 feels like the year when alternatives stop sounding like science projects and start sounding like procurement plans.

GPUs stay central, but the “only option” story fades

In practice, most teams I follow still train and serve on GPUs. The difference is that more workloads are being split: GPUs for training and fast iteration, and other accelerators for steady, high-volume inference where cost matters.

What’s maturing: ASICs, chiplets, and analog inference

Three hardware directions keep showing up in AI infrastructure conversations:

- ASIC accelerators: purpose-built chips that can run common model operations with better performance per watt.

- Chiplet designs: mixing smaller chip parts like building blocks, which can improve yields and speed up new product cycles.

- Analog inference: using analog compute ideas to reduce energy for certain inference tasks, especially where latency and power are tight.

Why it matters: as AI agents and always-on assistants grow, the bill is not just compute—it’s power, cooling, and utilization. Better efficiency changes unit economics, which changes what products can exist.

From “server rooms” to “superfactories”

I increasingly picture AI infrastructure as dense, industrial systems: racks designed like production lines, with power delivery, liquid cooling, and networking treated as first-class constraints. At the same time, infrastructure becomes more distributed—some inference moves closer to users (edge and on-prem), while training concentrates in massive clusters.

Where PyTorch and interoperable frameworks fit

As stacks diversify, portability becomes the survival skill. PyTorch remains a key layer for research-to-production flow, but I also watch for interoperable paths like torch.compile, ONNX-style exports, and vendor runtimes that keep models and agents movable across GPUs, ASICs, and edge devices.

5) Edge AI gets real—and sovereignty stops being theoretical

In 2025–2026, I’m seeing edge AI shift from “someday” to “we shipped it.” The driver is not hype; it’s the boring stuff that matters: latency, privacy, and cost. If a camera needs to flag a safety issue in under 100 ms, or a clinic can’t send sensitive data off-site, the edge stops being optional. Even in retail and logistics, pushing more inference on-device can cut cloud bills and reduce network load.

Sovereignty is now a line item, not a panel topic

What surprised me most is how AI sovereignty shows up in procurement language. Buyers ask where data is stored, where models are hosted, who can access logs, and what happens during audits. That changes architecture fast: regional deployments, separate tenants, local key management, and sometimes fully offline modes. It’s not just “use a compliant cloud”—it’s “prove control over data, models, and updates.”

“Keep data in-region, keep control in-house, and keep the system useful when the network is shaky.”

A practical checklist I’ve used: edge vs. cloud

When I plan an edge AI rollout, I start with a simple split: what must run on-device versus what can stay in the cloud. Here’s the checklist I use:

- Latency: If the action is real-time (safety stop, fraud block), run it on-device.

- Privacy: If raw data is sensitive (faces, health, trade secrets), process locally and send only summaries.

- Cost: If you stream high-volume data 24/7, edge inference is often cheaper.

- Reliability: If the site can’t depend on internet, edge needs a “degraded but useful” mode.

- Model updates: If updates must be controlled, use signed packages and staged rollouts.
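The checklist above can be written down as a small decision helper. This is a sketch under my own assumptions: the `Workload` fields and the rule that any single reason is enough to push a workload to the edge are illustrative, not a standard. Model updates are left out because they describe how you roll out, not where inference runs.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Workload:
    name: str
    real_time: bool           # e.g. safety stop, fraud block
    sensitive_raw_data: bool  # e.g. faces, health, trade secrets
    streams_24_7: bool        # high-volume continuous data
    unreliable_network: bool  # site cannot depend on internet


def edge_reasons(w: Workload) -> List[str]:
    """Return which checklist items argue for running on-device."""
    reasons = []
    if w.real_time:
        reasons.append("latency")
    if w.sensitive_raw_data:
        reasons.append("privacy")
    if w.streams_24_7:
        reasons.append("cost")
    if w.unreliable_network:
        reasons.append("reliability")
    return reasons


def placement(w: Workload) -> str:
    # Assumption: one reason is enough; a real plan would weigh them.
    return "edge" if edge_reasons(w) else "cloud"


if __name__ == "__main__":
    camera = Workload("defect camera", True, False, True, False)
    report = Workload("weekly report", False, False, False, False)
    for w in (camera, report):
        print(w.name, "->", placement(w), edge_reasons(w))
```

Returning the reasons alongside the decision is useful in practice, because the “why” is what ends up in the architecture review.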

Wild-card scenario: 6 hours offline

I like to test teams with this: your factory floor loses internet for 6 hours. Does your “AI agent” still help—routing work orders, spotting defects, answering SOP questions—or does it just complain it can’t reach the cloud? If the answer is the latter, edge AI isn’t real yet; it’s just a thin client with a fancy name.
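A “degraded but useful” mode can be as simple as a fallback path. The sketch below assumes a hypothetical `cloud_call` function that raises `ConnectionError` or `TimeoutError` when the network is down, and a tiny on-device lookup table of SOP snippets (the entries are placeholders, not real procedures). A real deployment would more likely use a small local model or search index.

```python
from typing import Callable, Dict

# Placeholder on-device knowledge; keys are keywords, values are SOP text.
LOCAL_SOPS: Dict[str, str] = {
    "lockout": "Placeholder SOP text for lockout.",
    "spill": "Placeholder SOP text for spill response.",
}


def answer(
    question: str,
    cloud_call: Callable[[str], str],
    local_sops: Dict[str, str] = LOCAL_SOPS,
) -> Dict[str, str]:
    """Try the cloud first; fall back to local lookup if it is unreachable."""
    try:
        return {"mode": "cloud", "answer": cloud_call(question)}
    except (ConnectionError, TimeoutError):
        pass  # network is down or too slow: continue in degraded mode

    lowered = question.lower()
    for keyword, text in local_sops.items():
        if keyword in lowered:
            return {"mode": "degraded", "answer": text}
    return {
        "mode": "degraded",
        "answer": "No local answer; queued for when the network returns.",
    }


if __name__ == "__main__":
    def offline(_question: str) -> str:
        raise ConnectionError("no network")

    print(answer("What is the lockout procedure?", offline))
```

Tagging each reply with its mode matters: operators should always be able to tell whether they are looking at a full answer or a fallback.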

6) Physical AI + robotics: the plot twist after LLM plateaus

I’m seeing a clear shift in the 2025–2026 AI news cycle: when big LLM scaling starts to feel like it has diminishing returns, attention moves to what models can do, not just what they can say. That’s why physical AI (systems that sense, plan, and act in the real world) suddenly looks like the next growth curve. The underlying theme is simple: capability becomes more visible when it touches reality.

Why physical AI is rising now

Text-only progress is harder to “feel” after a point. But embodiment creates clear metrics: did the robot pick the item, avoid the person, and finish the task on time? As LLMs plateau, the spotlight moves to action loops: perception → decision → motion → feedback. That loop is where real value (and real risk) lives.
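For readers who think in code, the action loop can be reduced to a toy example: a single number standing in for a robot’s position, nudged toward a target each cycle. This is a deliberately simplified sketch with made-up parameters (`gain`, `tolerance`, `max_steps`) and no sensor noise; real robots use far richer perception and control.

```python
from typing import List, Tuple


def run_loop(
    target: float,
    position: float = 0.0,
    gain: float = 0.5,
    tolerance: float = 0.01,
    max_steps: int = 50,
) -> Tuple[float, List[float]]:
    """Move toward `target`, correcting a fraction of the error each cycle."""
    history: List[float] = []
    for _ in range(max_steps):
        observed = position          # perception (noise-free in this toy)
        error = target - observed    # decision: how far off are we?
        if abs(error) <= tolerance:
            break                    # close enough: task done
        position += gain * error     # motion: correct part of the error
        history.append(position)     # feedback: record the new state
    return position, history


if __name__ == "__main__":
    final, history = run_loop(target=1.0)
    print(f"final position {final:.4f} after {len(history)} moves")
```

Even in this toy, the metric is concrete: either the final position is within tolerance of the target or it is not, which is the kind of pass/fail signal embodiment brings.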

The robotics “pickup” that suddenly looks headline-worthy

Most robotics progress is not flashy. It’s grippers that don’t drop things, perception that works in bad lighting, and safety systems that fail gracefully. Yet these “boring” upgrades compound. When enough of them stack, you get a step-change: robots that can operate in warehouses, hospitals, kitchens, and labs with fewer resets.

- Grippers: better contact sensing and softer materials for messy objects

- Perception: stronger 3D understanding and tracking in clutter

- Safety: tighter constraints, better monitoring, clearer stop conditions

How AI research changes: science as a workflow

I also expect research to look more like an automated pipeline. Models propose hypotheses, write experiment plans, run simulations, and summarize results. The “breakthrough” becomes a repeatable loop, closer to continuous integration than a single eureka moment.

“The next leap won’t be a smarter chat. It will be a system that can test, measure, and improve in the real world.”

My contrarian bet

The next “wow” demo might be a robot doing a boring job flawlessly for 30 days: stocking shelves, sorting returns, wiping tables, or moving lab samples—no viral tricks, just uptime.

Conclusion: My 2026 reading list (and a gentler way to watch AI news)

When I look back at the biggest AI news trends from 2025 into 2026—agents, new infrastructure, edge deployments, and “physical AI” in the real world—the throughline I keep seeing is reliability. The winners are not the flashiest demos. They are the teams that treat AI like a system: data, models, tools, safety checks, latency, cost, and human oversight all working together. In other words, these trends reward systems thinking, not hype chasing.

To keep myself informed without feeling overwhelmed, I use a simple weekly routine. I don’t try to read everything. I pick one infrastructure story (chips, networking, AI factories, cloud pricing), one open-source release (models, eval tools, agent frameworks), one agent platform update (orchestration, tool use, memory, governance), and one edge case study (on-device assistants, retail, factories, hospitals, vehicles). This mix keeps my “AI news diet” balanced and helps me connect headlines to real constraints like uptime, security, and total cost.

For 2026, I’m watching three things closely. First, standards for agentic systems: common ways to define tool permissions, audit trails, and evaluation so agents can be trusted in production. Second, more honest AI factory ROI stories: not just “we bought GPUs,” but what changed in throughput, quality, and unit economics. Third, AI sovereignty becoming the default: more companies and governments will want local control over data, models, and compute, even if it costs more.

AI news is weather; your product strategy is climate—stop packing for every cloud.

That line is how I stay calm. I can notice storms—new agent releases, edge breakthroughs, policy shifts—without rebuilding my roadmap every week. If I keep my focus on reliability, I can learn from the weather while still designing for the climate.

TL;DR: AI news in 2025–2026 shifts from bigger models to better systems: team-based AI agents, practical edge AI, smaller open-source domain models with growing multimodal reasoning (e.g., IBM Granite), and denser AI infrastructure (“AI factories”). Expect hype to cool, governance to harden, and physical AI/robotics to rise as LLM scaling hits diminishing returns.
