AI Marketing Trends 2026: Notes From a Leader Chat

I still remember the first time an exec asked me, dead serious, “Can we just… have the AI do marketing?” It was said the way someone asks if the dishwasher can also fold laundry. That question popped back into my head while listening to marketing leaders swap stories about what’s working with AI—and what’s quietly breaking. This post is my stitched-together notebook: the uncomfortable stats, the ‘wait, that’s actually smart’ moments, and a few opinions I’m willing to put my name on.

1) AI Marketing Trends 2026 feel like a new ‘front page’

In the leader chat I kept coming back to one idea: AI answers are becoming the homepage, even when you never built one. In “Expert Interview: Marketing Leaders Discuss AI,” the tone wasn’t hype. It was practical. Leaders talked like they’re already living in a world where the first impression of a brand happens inside a generative engine, not on a landing page.

AI answers are the new homepage (even if you didn’t design it)

When someone asks an AI tool “What’s the best option for X?” the tool often replies with a neat summary, a shortlist, and a confident tone. That summary is effectively your new front page. It may pull from your site, reviews, forums, product listings, press, and third-party write-ups. The hard part: you don’t control the layout, and you may not even get the click.

Organic visibility shifts when engines summarize instead of sending clicks

My biggest takeaway from the chatter: I’m planning for fewer visits, not “better headlines.” If the AI gives the answer, the user’s job is done. So the goal shifts from “win the click” to “win the mention” and “be the trusted source.”

- Visibility now means being cited, summarized, or recommended.

- Conversion happens later, across channels, after the AI introduction.

- Measurement leans on brand lift, direct traffic, and assisted conversions more than raw organic sessions.

“If the answer is on the results page, the results page is the product.”

A mini thought experiment I’m using in planning

I’ve started asking teams a simple question: If your website vanished for a week, what would AI still know about you? That question forces clarity on what’s actually “out there” in the public record.

- Would AI still know what you sell, who it’s for, and why it’s different?

- Would it find consistent pricing, policies, and product details?

- Would reviews and third-party mentions support your claims?

If the answers are weak, I don’t just rewrite pages. I improve the signals: structured product info, credible comparisons, customer proof, and clear positioning repeated across places AI systems learn from.

The weird comfort: we’ve seen distribution cliffs before

There’s a strange comfort here. This isn’t the first time marketing distribution changed overnight (hello, social reach cliffs). But the leaders agreed it may be the fastest shift yet—because the “front page” can change with a model update, not a redesign.

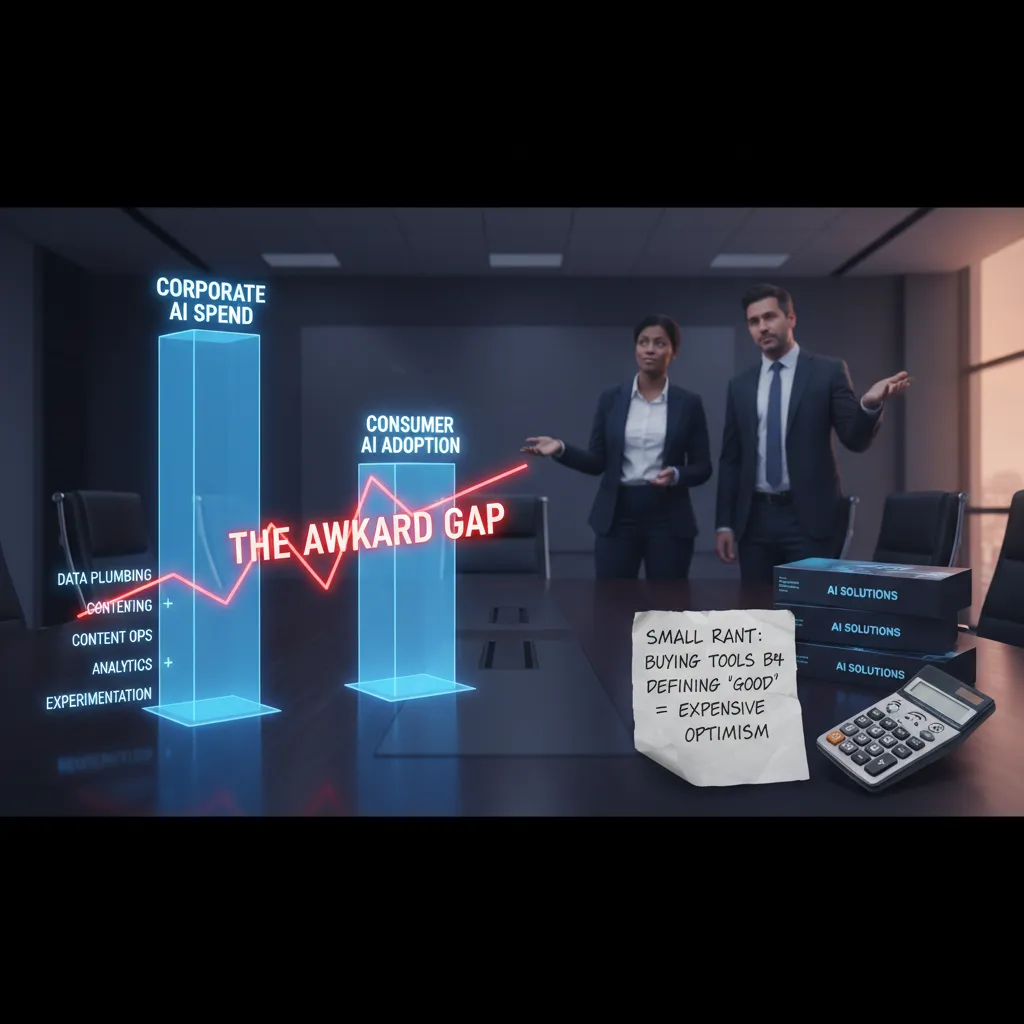

2) Corporate AI Spend vs. Consumer AI Adoption: the awkward gap

In the leader chat, I noticed a tension that kept coming up: executives sounded very confident about spending on AI, even while admitting that many customers still aren’t fully AI-native. That gap matters for marketers. If our audience is still learning what AI is (or doesn’t trust it yet), then “more AI” isn’t automatically “better marketing.” It can even create friction—like when a chatbot replaces a simple support flow and customers feel trapped.

What I took from the conversation is that the spend is real, but the adoption curve is uneven. Some consumers use AI daily; others avoid it, don’t understand it, or worry about privacy. So the job isn’t just to buy tools—it’s to build AI into experiences that feel normal, helpful, and optional.

How I map AI budgets (the boring-but-useful buckets)

When I hear “we’re investing heavily in AI,” I translate it into four buckets. This helps me see what’s actually being funded—and what’s being ignored.

- Data plumbing: cleaning data, connecting systems, permissions, governance, and making sure models can access the right inputs.

- Content ops: workflows for briefs, drafts, reviews, brand voice, localization, and version control—so AI output doesn’t become content chaos.

- Analytics: measurement, attribution, testing frameworks, and dashboards that show what AI is improving (and what it’s breaking).

- Experimentation: pilots, sandboxes, and small tests that reduce risk and create learning loops.

A small rant: tools without a definition of “good”

I’ll say it plainly: buying AI tools before defining what good looks like is just expensive optimism. Demos are designed to impress, not to fit your real workflows. If the team can’t explain the success metric, the tool becomes shelfware—or worse, it creates new work (more reviews, more fixes, more meetings).

“Which workflow gets 20% faster by Q2?” not “Which platform has the best demo?”

What I’d ask in the next budget meeting

- Which specific workflow are we speeding up, and by how much?

- What data must be reliable for that workflow to work?

- Who approves AI output, and what is the review standard?

- How will we measure impact: time saved, cost reduced, conversion lift, or churn reduction?

That’s how I bridge the awkward gap: align corporate AI spend to real customer readiness, and tie every dollar to a measurable workflow win.
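To make the "by how much?" question concrete, here is a minimal sketch of the arithmetic. It assumes "faster" means less time per run of the workflow, and the function names and hour figures are purely illustrative.

```python
def speedup_pct(baseline_hours: float, current_hours: float) -> float:
    """Percent reduction in time per run, relative to the baseline."""
    if baseline_hours <= 0:
        raise ValueError("baseline_hours must be positive")
    return (baseline_hours - current_hours) * 100 / baseline_hours


def meets_target(baseline_hours: float, current_hours: float,
                 target_pct: float = 20.0) -> bool:
    """True if the workflow got at least target_pct faster."""
    return speedup_pct(baseline_hours, current_hours) >= target_pct


# Illustrative numbers only: a campaign brief that took 10 hours now takes 8.
print(speedup_pct(10, 8))     # 20.0
print(meets_target(10, 8))    # True
print(meets_target(10, 8.5))  # False (only 15% faster)
```

The point is not the math. It is that someone has to write down the baseline before the tool arrives.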

3) First-Party Data is the new ‘PR’: become cite-worthy

In the leader chat, I kept hearing the same obsession: being referenced, not just ranking. That’s a real mindset flip. The goal isn’t only “show up on page one.” It’s “show up inside the answer” when an AI tool summarizes options, compares vendors, or explains a concept.

“If the model can’t quote you, you don’t exist in the conversation.”

Here’s the shift I’m making in my own AI marketing strategy for 2026: first-party data isn’t only for targeting. It’s also structured proof that AI systems can safely cite. When you publish your own benchmarks, definitions, and methodology, you give AI something concrete to reference—numbers, rules, and clear language instead of vague claims.

My go-to play: publish 3–5 “fact pages”

I’m treating these like modern PR assets. Not press releases—citation assets. Simple pages that answer one question extremely well, with clean tables and plain wording.

- Pricing logic: what drives cost, what changes price, what doesn’t

- Benchmarks: performance ranges, averages, sample sizes, time windows

- Methodology: how data was collected, cleaned, and calculated

- Definitions: the exact meaning of key terms (no fluff)

- Policy/limits: what’s included, excluded, and edge cases

Make it easy to quote (and hard to misquote)

I like to add a small “copy-ready” block that AI tools and humans can lift without rewriting. Even something as simple as:

Benchmark window: Jan–Dec 2025 | Sample size: 4,218 accounts | Median: 12.4%

And I almost always include at least one table, because tables travel well across search, AI overviews, and chat responses.

| Metric | Definition | How we calculate it |
|---|---|---|
| Activation Rate | % of new users who complete setup | Activated users / new signups (30 days) |
| Time-to-Value | Days until first key outcome | Median days to first “success event” |
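If you publish definitions like these, it helps to have the calculation written down somewhere exact. Below is a rough sketch of both metrics in Python. The record layout and field names are hypothetical, the rows are made up, and I am assuming "(30 days)" means the user activated within 30 days of signing up.

```python
from datetime import date
from statistics import median


def activation_rate(signups, window_days=30):
    """Share of new signups who completed setup within window_days of signup."""
    if not signups:
        return 0.0
    activated = sum(
        1
        for s in signups
        if s["activated"] is not None
        and (s["activated"] - s["signup"]).days <= window_days
    )
    return activated / len(signups)


def time_to_value(signups):
    """Median days from signup to first success event (users without one are skipped)."""
    days = [
        (s["first_success"] - s["signup"]).days
        for s in signups
        if s["first_success"] is not None
    ]
    return median(days) if days else None


# Made-up example rows, not real data.
signups = [
    {"signup": date(2025, 1, 1), "activated": date(2025, 1, 5), "first_success": date(2025, 1, 11)},
    {"signup": date(2025, 1, 3), "activated": date(2025, 3, 1), "first_success": date(2025, 3, 5)},
    {"signup": date(2025, 1, 10), "activated": None, "first_success": None},
    {"signup": date(2025, 1, 12), "activated": date(2025, 1, 13), "first_success": date(2025, 1, 16)},
]

print(activation_rate(signups))  # 0.5 (the second user activated after the 30-day window)
print(time_to_value(signups))    # 10
```

Whatever window and exclusions you pick, state them on the fact page so the number can be quoted without guesswork.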

Quick tangent: my “boring” glossary won

My best-performing content last year was basically a glossary… and I’m not even mad. Leaders in the interview hinted at the same thing: definitions are linkable, quotable, and reusable. In an AI-first world, that’s PR.

4) AI Mention Tracking: tempting, pricey, and… kinda messy

In the leader chat, the topic that kept coming up was the dream dashboard: “AI mentioned us!” It sounds simple—track brand mentions inside ChatGPT, Gemini, Perplexity, and whatever comes next. But the leaders were blunt: measurement is unreliable right now. Models change, answers vary by user, and “mentions” can disappear overnight. Even when a tool shows a spike, it’s hard to know if it’s real visibility or just noisy data.

“We all want the mention tracker, but we don’t trust the numbers yet.”

Why the data gets messy fast

- Prompt sensitivity: small wording changes can flip results.

- Personalization and location: two people can get different answers.

- Model updates: what ranked yesterday may vanish today.

- Attribution gaps: a mention doesn’t tell you what source the model used.

That’s why I’m cautious about the growing “AI mention tracking” market. The uncomfortable truth is that a $200M+ category can still deliver low ROI if the inputs are shaky. Paying for a polished dashboard doesn’t fix the core problem: inconsistent retrieval and inconsistent outputs.

A scrappier approach I actually trust

Instead of chasing perfect coverage, I use a controlled, repeatable routine. It’s not glamorous, but it’s stable enough to learn from.

- Sample prompts: pick 10–20 prompts your buyers would ask.

- Controlled tests: run them weekly, same wording, same order, same tools.

- Citation diary: log what the AI says, what it cites, and how it frames you.

Here are example prompts I keep in my set:

- “Best [category] tools for [use case] in 2026”

- “Alternatives to [competitor] for [industry] teams”

- “What is [your brand] known for?”

My “citation diary” template

| Date | Tool/Model | Prompt | Mention? | Cited source | Notes (tone, accuracy) |
|---|---|---|---|---|---|
| 2026-02-__ | ChatGPT / Perplexity | Prompt #__ | Yes/No | URL / “none” | Short summary |
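If a spreadsheet feels too manual, the same diary can live in a CSV. Here is a minimal Python sketch. The field names mirror the template columns but are my own choice, the rows are invented placeholders, and the entries are still logged by hand after each weekly run (nothing here calls an AI tool).

```python
import csv
import io
from collections import defaultdict

# Column names are an assumption that mirrors the diary template.
FIELDS = ["date", "tool", "prompt", "mention", "cited_source", "notes"]


def write_diary(entries, fileobj):
    """Write diary entries as CSV with a header row."""
    writer = csv.DictWriter(fileobj, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(entries)


def mention_rate_by_tool(entries):
    """Fraction of logged prompts per tool where the brand was mentioned."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for entry in entries:
        totals[entry["tool"]] += 1
        if entry["mention"] == "Yes":
            hits[entry["tool"]] += 1
    return {tool: hits[tool] / totals[tool] for tool in totals}


# Invented placeholder rows for illustration only.
entries = [
    {"date": "2026-02-02", "tool": "ChatGPT", "prompt": "Prompt #1",
     "mention": "Yes", "cited_source": "https://example.com/pricing", "notes": "Accurate, neutral tone"},
    {"date": "2026-02-02", "tool": "ChatGPT", "prompt": "Prompt #2",
     "mention": "No", "cited_source": "none", "notes": "Competitors only"},
    {"date": "2026-02-02", "tool": "Perplexity", "prompt": "Prompt #1",
     "mention": "Yes", "cited_source": "https://example.com/benchmarks", "notes": "Quoted our median"},
]

buffer = io.StringIO()  # swap for open("citation_diary.csv", "w", newline="") to save to disk
write_diary(entries, buffer)
print(mention_rate_by_tool(entries))  # {'ChatGPT': 0.5, 'Perplexity': 1.0}
```

With 10 to 20 prompts a week, the rates are small-sample numbers. I read them as a trend line over months, not a score.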

My rule from the discussion: track mentions only if you can tie them to pipeline or reputation outcomes. If you can’t connect it to leads, conversions, branded search lift, or clear narrative control, it’s just an expensive scoreboard.

5) Generative AI Agents and the ‘autonomous buyer’ moment

In the leader chat, one theme kept coming up: agentic AI. Not just “AI that writes,” but AI that acts—searches, compares, decides, and even buys. Leaders are watching these agents like hawks because it changes who the “user” is. It’s no longer only a person on a laptop. It might be a system making choices on someone’s behalf, based on rules, budgets, and preferences.

Here’s the moment that stuck with me: your next customer might be an AI shopping assistant comparing you to three competitors at 2 a.m. It won’t be impressed by clever taglines. It will look for proof, clarity, and low risk. In other words, the “autonomous buyer” moment is when the first serious evaluation is done by an agent—before a human ever sees your homepage.

What agents seem to “like” (boring wins again)

From what leaders shared (and what I’m seeing in the market), agents reward brands that are easy to parse. That means the basics matter more than ever:

- Clear specs: features, limits, compatibility, pricing tiers, and what’s included.

- Policies that are easy to find: returns, refunds, shipping, cancellation, warranties, data handling.

- FAQs that answer real questions: setup time, edge cases, support hours, “what happens if…”.

- Consistent naming: product names, plan names, and SKUs that don’t change across pages and PDFs.

- Structured content: tables, comparison pages, and clean page titles that reduce ambiguity.

I also think agents “like” content that is stable over time. If your pricing page says one thing and your sales deck says another, an agent may flag you as high risk. Humans might ask for clarification; agents may simply move on.
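One way to catch that kind of mismatch before an agent does is a simple consistency check. This is a sketch under assumptions: the facts have already been collected by hand into a dictionary per source, and the source names, fields, and prices below are invented for illustration.

```python
def find_inconsistencies(sources):
    """Return fields whose values differ across sources.

    sources maps a source name to {field: value}. The result maps each
    conflicting field to {value: [sources that state that value]}.
    """
    by_field = {}
    for source, facts in sources.items():
        for field, value in facts.items():
            by_field.setdefault(field, {}).setdefault(value, []).append(source)
    return {field: values for field, values in by_field.items() if len(values) > 1}


# Invented example: the pricing page and the sales deck disagree on price.
sources = {
    "pricing_page": {"plan_name": "Pro", "pro_price": "$49/mo"},
    "sales_deck": {"plan_name": "Pro", "pro_price": "$59/mo"},
}

print(find_inconsistencies(sources))
# {'pro_price': {'$49/mo': ['pricing_page'], '$59/mo': ['sales_deck']}}
```

The check is exact-match on purpose. If "Pro" and "Pro Plan" show up as a conflict, that is the naming inconsistency from the list above, and it is worth fixing at the source.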

Where I’m optimistic (with guardrails)

I’m optimistic because agents could reduce friction for buyers. They can handle the busy work: gathering options, checking constraints, and summarizing tradeoffs. But leaders also stressed a key condition: we have to keep brand and ethics intact. If an agent is acting for a buyer, we need to be clear about claims, transparent about terms, and careful with how we personalize offers.

“The buyer journey may get shorter—but the trust journey still matters.”

6) Revenue, workflows, and the part leaders don’t say out loud

In the leader chat, the most useful comments were also the least exciting. Nobody won points for flashy demos. The real wins came from one simple idea: AI helps when it’s glued into the workflow. Not “AI as a side project,” not “AI as a brainstorm toy,” but AI placed directly inside the steps we already repeat every week—research, drafting, approvals, reporting, and follow-up.

The part leaders don’t always say out loud is this: revenue doesn’t move because you “adopted AI.” Revenue moves when AI reduces friction between decisions and execution. In the interview, I kept hearing versions of the same truth: if the tool lives outside the system (outside the CRM, outside the project board, outside the content pipeline), it becomes one more tab to ignore. If it lives inside the system, it becomes a habit.

I also left the conversation more convinced that sales + marketing must run AI together. The teams reporting stronger revenue growth weren’t doing siloed pilots. They were running joint experiments: one shared goal, one shared dataset, one shared definition of “good.” That means I’m planning tests where marketing uses AI to tighten targeting and messaging, while sales uses AI to speed up follow-ups and improve call notes—then we measure the combined impact on pipeline, not just clicks.

To keep myself honest, I’m using a simple quarterly checklist. Every quarter, I want: one AI-assisted research sprint (to learn faster), one creative iteration loop (to ship better work), and one reporting automation (to save time). If I can’t point to those three outcomes, then I’m probably just collecting tools instead of building a system.

Here’s the analogy that stuck with me, and it’s how I want to end these notes. Treat AI like a sous-chef. A sous-chef can prep ingredients, suggest techniques, and speed up the kitchen. But you still need a head chef, a menu, and someone tasting the sauce. In marketing terms, we still need strategy, clear positioning, and human judgment. AI can help us move faster, but it can’t decide what matters. That part is still on us—and that’s exactly where leaders earn their keep.

TL;DR: AI Marketing Trends in 2026 are less about shiny tools and more about ecosystems: AI search reduces clicks, first-party data becomes citation fuel, and agentic AI changes how buyers choose. Invest in clarity, measurement, and brand visibility where AI answers live.
