AI Marketing Strategy: A Messy, Practical Rollout

The first time I “implemented AI” in marketing, it was basically a fancy spreadsheet plus a chatbot plug-in I didn’t trust. It didn’t fail because the model was dumb—it failed because our data was a junk drawer and nobody owned the process. This guide is the version I wish I’d had: a little opinionated, a little scrappy, and designed for the way teams actually work.

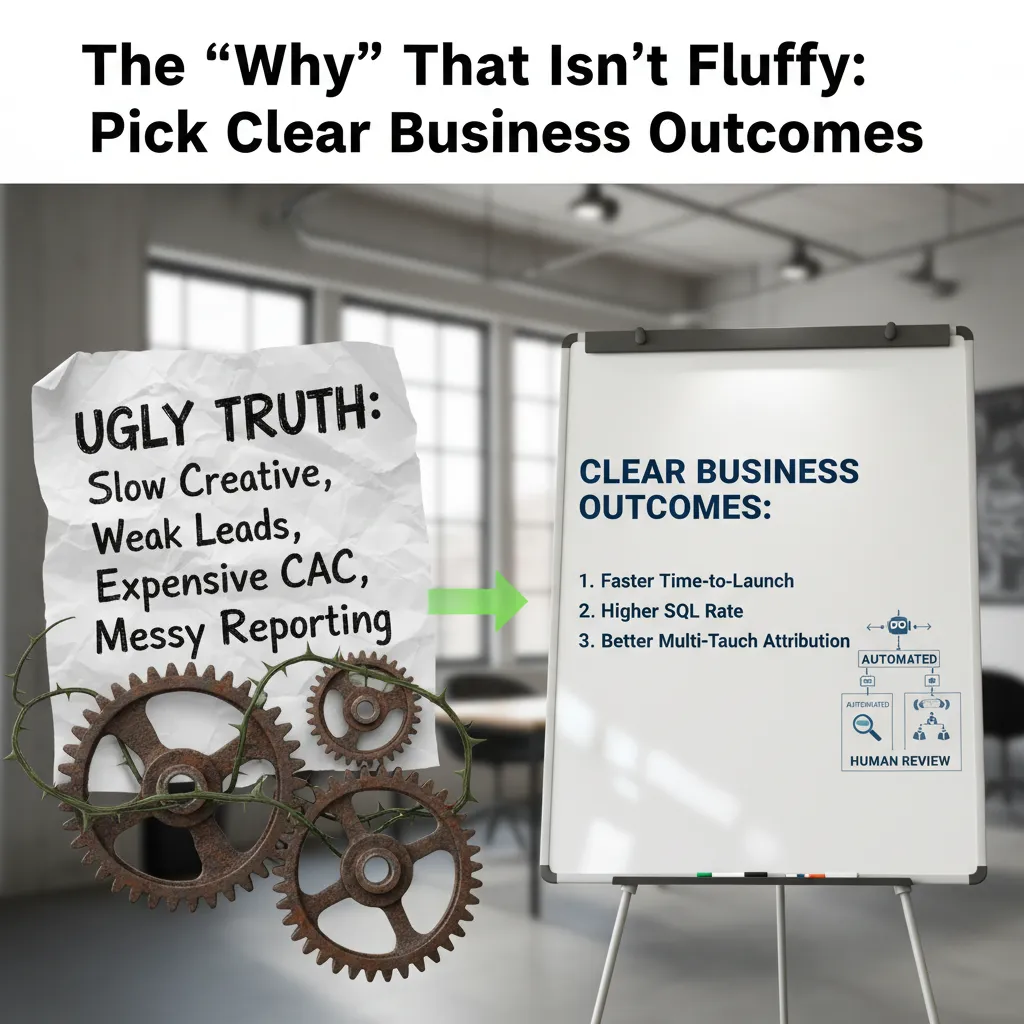

1) The “Why” That Isn’t Fluffy: Pick Clear Business Outcomes

When I roll out AI in marketing, I don’t start with tools. I start with one ugly truth: what’s actually broken. Not “we want to be innovative.” I mean the real stuff that makes my week harder—slow creative, weak lead quality, expensive CAC, and messy reporting. This step sounds simple, but it’s the difference between an AI marketing strategy that helps and one that becomes a side project nobody trusts.

Write the pain in plain language

I literally write a short list like this:

Creative takes 10 days to ship, so we miss windows.

Leads look good in volume, but SQL rate is low.

CAC keeps rising, and we can’t explain why.

Reporting is manual, inconsistent, and late.

Translate pain into business outcomes (not AI tasks)

Then I translate each pain into a clear outcome. The rule I follow: tie AI to measurable goals, not features.

Slow creative → faster time-to-launch (ex: 10 days → 5 days)

Weak lead quality → higher SQL rate (ex: +20% SQLs from same spend)

Expensive CAC → lower CAC via better targeting and testing

Messy reporting → better multi-touch attribution and cleaner dashboards

A quick gut-check: automate vs. human review

Before I automate anything, I do a fast check:

Can AI draft it? (yes for copy variants, summaries, tagging)

Can AI decide it? (sometimes for routing/scoring, with guardrails)

What must be reviewed by a human? (pricing, promos, legal claims, brand voice)

I learned this the hard way the week I tried to automate everything and accidentally sent two conflicting promos to the same segment. Support tickets spiked. Sales was not amused. Never again.

Define success before tools

Only after outcomes are clear do I define success metrics: ROI measurement (incremental lift, not vibes), pipeline impact (MQL→SQL→opportunity movement), and campaign optimization targets (faster testing cycles, better CTR/CVR, fewer wasted sends). If I can’t measure it, I don’t automate it.
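To make “incremental lift, not vibes” concrete, here is a minimal sketch of the arithmetic, assuming you can count conversions for a treated group and a comparable untreated group. The function names and the numbers are hypothetical, chosen only for illustration.

```python
def conversion_rate(conversions: int, total: int) -> float:
    """Share of a group that converted; 0.0 for an empty group."""
    return conversions / total if total else 0.0


def incremental_lift(treated_conv: int, treated_total: int,
                     holdout_conv: int, holdout_total: int) -> float:
    """Relative lift of the treated group over the untreated (holdout) group.

    A return value of 0.25 means a 25% relative improvement.
    """
    treated_rate = conversion_rate(treated_conv, treated_total)
    holdout_rate = conversion_rate(holdout_conv, holdout_total)
    if holdout_rate == 0:
        raise ValueError("holdout conversion rate is zero; lift is undefined")
    return (treated_rate - holdout_rate) / holdout_rate


# Hypothetical numbers: 60 SQLs from 400 treated leads vs 48 SQLs from 400 untreated leads.
lift = incremental_lift(60, 400, 48, 400)
print(f"Relative SQL-rate lift: {lift:.1%}")
```

The point of dividing by the untreated rate is that a raw conversion number says nothing on its own; only the difference against a comparable group counts as lift.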

2) Key Market Trends (2026) That Actually Change Your Plan

With outcomes defined, I look at the market shifts that force new workflows before I look at tools. In 2026, a few trends aren’t just interesting; they change how I plan campaigns, data, and approvals.

Real-time personalization is moving from segments to individuals

The biggest shift I’m watching is real-time personalization at the individual level. Hyper-personalization is becoming the norm because customers now expect messages that match their behavior right now, not last week. That means my AI marketing strategy needs cleaner event tracking, faster content production, and rules for what we will not personalize (pricing, sensitive categories, anything that feels creepy).

Autonomous campaign optimization: powerful, but risky

I’m excited about autonomous campaign optimization—systems that adjust bids, audiences, creative rotation, and timing without waiting for me. But it can wreck a brand voice if I don’t set guardrails. If the model only chases CTR, it may drift into clickbait, over-promise, or tone that doesn’t match our brand.

My rule: let AI optimize performance, but humans own meaning.

“Agentic tools” are becoming default operations

My 2026 marketing prediction: agentic tools and business automation are creeping from experiments into defaults. Instead of “AI helps me write,” it’s “AI runs the workflow”: pulling reports, creating briefs, generating variants, launching tests, and escalating exceptions. This maps directly to the step-by-step implementation mindset: define the task, define the data, define the approval path, then automate.

A small tangent: my “trend quarantine” doc

I keep a simple doc called Trend Quarantine. When a shiny new AI marketing tool shows up, I log it with:

Problem it solves (in one sentence)

Data it needs (and privacy risk)

Time to test (hours, not vibes)

Success metric (what “better” means)

This stops trends from hijacking my quarter.

How these trends map to workstreams

Content creation: modular copy blocks + on-brand variant generation for different contexts.

Behavioral triggers: event-based journeys (browse, abandon, repeat purchase) with real-time decisioning.

Dynamic A/B testing: always-on experiments where AI proposes variants, but I approve voice and claims.

3) Implementing AI Starts With Clean Data (Not Tool Shopping)

When I see teams rush to buy an “AI marketing platform,” I slow things down. In my experience, implementing AI in marketing works best when I treat data cleanup like cleaning out a fridge: what’s expired, what’s duplicated, and what’s missing labels. If the inputs are messy, the outputs will be confident nonsense.

Audit Data Quality Like a Fridge Cleanout

I start with a simple audit across CRM, marketing automation, analytics, and ad platforms. I’m looking for:

Expired: old lifecycle stages, dead leads, outdated company info

Duplicated: multiple records for the same person/account, repeated events

Missing labels: blank job titles, “unknown” sources, inconsistent campaign names
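The three checks above can be sketched in a few lines of plain Python. This is an illustration under assumptions, not a real CRM integration: the field names (`email`, `job_title`, `source`, `last_activity`), the sample records, and the 365-day staleness cutoff are all hypothetical.

```python
from collections import Counter
from datetime import date

# Hypothetical CRM export; field names and values are assumptions for illustration.
records = [
    {"email": "Ana@Example.com", "job_title": "VP Marketing",
     "source": "linkedin", "last_activity": date(2025, 11, 3)},
    {"email": "ana@example.com ", "job_title": "",
     "source": "unknown", "last_activity": date(2025, 12, 1)},
    {"email": "lee@example.com", "job_title": "Analyst",
     "source": "webinar", "last_activity": date(2023, 2, 14)},
]


def audit(records, today, stale_after_days=365):
    """Return emails flagged as duplicated, missing labels, or expired."""
    # Normalize emails so "Ana@Example.com" and "ana@example.com " match.
    normalized = [r["email"].strip().lower() for r in records]
    counts = Counter(normalized)
    duplicated = sorted(e for e, n in counts.items() if n > 1)
    missing = sorted({
        email for email, r in zip(normalized, records)
        if not r["job_title"].strip()
        or r["source"].strip().lower() in ("", "unknown")
    })
    expired = sorted({
        email for email, r in zip(normalized, records)
        if (today - r["last_activity"]).days > stale_after_days
    })
    return {"duplicated": duplicated, "missing_labels": missing, "expired": expired}


report = audit(records, today=date(2026, 1, 15))
print(report)
```

Even a rough pass like this gives you counts to put in front of the data owners, which tends to be more persuasive than “the CRM feels messy.”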

If I can’t trust basic fields, I don’t let an AI model “learn” from them.

Set Basic Data Governance (Keep It Lightweight)

I don’t build a huge committee. I define a minimum governance framework so the data stays clean after the first sweep:

Owners: who is responsible for each system and key fields

Definitions: what “MQL,” “SQL,” “pipeline,” and “conversion” mean (one version)

Retention: how long we keep events, leads, and consent records

Access: who can edit, export, and connect tools to the data

Minimum Viable Dataset for Lead Scoring and Prediction

For predictive analytics and lead scoring, I focus on the smallest dataset that still gives signal. Typically, that’s:

Job title (and seniority)

Firmographics: company size, industry, region

Behavior: key page views, form fills, email engagement, demo intent

I’d rather have three clean inputs than thirty noisy ones.

Human Review for Anything Customer-Facing

Even with clean data, I set rules for where humans must review AI output—especially for:

Brand claims and competitive statements

Pricing and promotions

Regulated copy (health, finance, legal, privacy)

My rule: if it can create risk or confusion, it gets a human checkpoint.

Quick Win: Fix UTM Discipline So Attribution Isn’t Guesswork

The fastest improvement I make is tightening UTMs and naming. I standardize a simple convention and enforce it in templates:

utm_source=linkedin&utm_medium=paid_social&utm_campaign=2026q1_product_launch&utm_content=video_a

Once UTMs are consistent, multi-touch attribution stops being a debate and starts being a report.
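One way to enforce a convention like this is to build and check links in code instead of by hand. The sketch below uses Python’s standard `urllib.parse`; the helper names (`build_utm_url`, `utm_problems`) and the “lowercase letters, digits, and underscores only” rule are assumptions for illustration, so swap in whatever convention your team agrees on.

```python
import re
from urllib.parse import parse_qs, urlencode, urlsplit

REQUIRED = ("utm_source", "utm_medium", "utm_campaign", "utm_content")
# Assumed house rule: lowercase letters, digits, and underscores only.
VALUE_PATTERN = re.compile(r"[a-z0-9_]+")


def build_utm_url(base_url, source, medium, campaign, content):
    """Append the four UTM parameters, rejecting values that break the rule."""
    params = dict(zip(REQUIRED, (source, medium, campaign, content)))
    for key, value in params.items():
        if not VALUE_PATTERN.fullmatch(value):
            raise ValueError(f"{key}={value!r} breaks the naming convention")
    separator = "&" if "?" in base_url else "?"
    return f"{base_url}{separator}{urlencode(params)}"


def utm_problems(url):
    """List missing or badly formatted UTM parameters in an existing link."""
    query = parse_qs(urlsplit(url).query)
    problems = []
    for key in REQUIRED:
        values = query.get(key)
        if not values:
            problems.append(f"missing {key}")
        elif not VALUE_PATTERN.fullmatch(values[0]):
            problems.append(f"bad value for {key}: {values[0]!r}")
    return problems


url = build_utm_url("https://example.com/launch", "linkedin", "paid_social",
                    "2026q1_product_launch", "video_a")
print(url)
print(utm_problems("https://example.com/launch?utm_source=LinkedIn&utm_medium=paid_social"))
```

The builder is for new links; the checker is for auditing links that already exist in ads and emails.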

4) Best AI Tools (Without the ‘Tool Graveyard’ Problem)

I’ve learned the hard way that “more tools” usually means “more tabs,” not more results. So my rule is simple: pick 1–2 AI marketing tools for a pilot use case before buying a full stack. The logic is the same at every step of the rollout: start small, prove value, then scale.

“If a tool doesn’t map to a real job this month, it goes on the ‘not now’ list.”

Shortlist tools by the job-to-be-done (not by hype)

When I evaluate AI tools, I start with the work we already do every week. Then I ask: where is the bottleneck, and can AI remove it without creating new risk?

Content creation: draft outlines, ad variations, email subject lines, SEO briefs (I still keep brand voice rules in a shared doc).

Marketing automation: routing leads, tagging intent signals, summarizing calls, and triggering follow-ups.

Predictive lead scoring: ranking leads/accounts using firmographics + behavior, then validating against sales feedback.

Campaign optimization: budget pacing, channel mix suggestions, and performance anomaly alerts.

ABM (account-based marketing): account selection + buying committee mapping (without being creepy)

AI can help in ABM by spotting patterns across your CRM, website visits, and engagement history. I use it to prioritize accounts and map likely roles (economic buyer, champion, technical evaluator). The line I don’t cross: I avoid messaging that implies we “know” personal details. Instead, I keep it practical: industry pain points, role-based value, and clear opt-outs.

Dynamic A/B testing: AI suggests, humans approve

I like using AI to propose variants fast, especially for ads and landing pages. But I treat a human editor as the bouncer. AI can generate 20 options; I only ship the 2–4 that match our claims, compliance rules, and tone.

Model suggests variants (headline, CTA, offer framing).

Human checks accuracy and brand fit.

Run test with clear success metric (CTR, CVR, CAC).
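For the last step, “clear success metric” should also mean a clear way to tell a real difference from noise. A common choice (my assumption here, not something the workflow above prescribes) is a two-proportion z-test on conversion counts. This sketch uses only the standard library, and the counts are made up.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rates.

    Returns (z, p_value), using the pooled-proportion standard error.
    A positive z means variant B converted at a higher rate than A.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: variant A converts 120 of 2,400; variant B converts 156 of 2,400.
z, p = two_proportion_z_test(120, 2400, 156, 2400)
print(f"z = {z:.2f}, p = {p:.4f}")
```

Agree on the significance threshold and the sample size before launching the test, so nobody gets to stop it the moment their favorite variant is ahead.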

Budget allocation: protect core spend with a “learning budget”

To avoid random tool sprawl, I reserve a small line item for experiments. I literally label it AI_learning_budget. That way, tests don’t cannibalize proven campaigns, and I can stop experiments quickly if they don’t hit agreed targets.

5) Pilot Use Case: The Small Bet That Proves ROI

When I roll out an AI marketing strategy, I don’t start with a big, risky rebuild. I start with a pilot that has a clear upside and a low downside. Two options usually work best: AI lead scoring for inbound leads (so sales spends time on the right people) or real-time personalization for one lifecycle segment (like trial users, repeat buyers, or churn-risk customers). The goal is simple: prove value fast without breaking anything important.

Pick one narrow use case (not five)

I keep the pilot tight on purpose. One audience segment, one offer, and one main channel path. For example, inbound lead scoring is a great “small bet” because you can run it alongside your current process. If the model is wrong, you still have your old scoring rules as a backup.

Lead scoring pilot: prioritize inbound demo requests using AI signals + your existing CRM fields.

Personalization pilot: change website or email content for one segment based on behavior (pages viewed, product interest, time on site).

Define success metrics before you touch the model

I write the success metrics down first, because otherwise the pilot turns into opinions. Common metrics I use:

Lift in MQL → SQL conversion rate

Lower CPL (cost per lead) from paid campaigns

Faster content cycle time (brief-to-publish)

Improved ROI measurement (cleaner attribution and reporting)

Run it like a science project (yes, really)

I treat the pilot like an experiment. That means a holdout group that serves as the control, and documented hypotheses. I’ll literally write something like:

Hypothesis: AI lead scoring will increase SQL rate by 15% (relative to the holdout) without increasing CPL.

Then I set up a holdout group that does not get the AI treatment. If I can’t compare outcomes, I can’t claim ROI.
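A holdout only works if assignment is stable: the same lead must land in the same group every time the pipeline runs. One simple way to get that is to hash the lead ID. The sketch below is an assumption-laden illustration: the function name, the experiment label, and the 20% holdout share are all hypothetical.

```python
import hashlib


def assign_group(lead_id: str, experiment: str, holdout_share: float = 0.2) -> str:
    """Deterministically place a lead in 'holdout' or 'treatment'.

    Hashing the experiment name together with the lead ID keeps assignment
    stable across runs and independent between experiments.
    """
    digest = hashlib.sha256(f"{experiment}:{lead_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # uniform value in [0, 1)
    return "holdout" if bucket < holdout_share else "treatment"


# Hypothetical usage: the same lead always gets the same answer.
print(assign_group("lead-00042", "lead_scoring_pilot"))
```

Because nothing is stored, there is no assignment table to lose or corrupt; the group can be recomputed anywhere from the lead ID alone.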

Orchestrate the campaign across channels

Even a small pilot should connect the journey. I like a simple orchestration: email + paid retargeting + site, triggered by behavior.

Visited pricing page → retargeting ad + “compare options” email

Downloaded guide → personalized site banner + nurture sequence
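The two journeys above can be sketched as a lookup table from behavior to actions. The event and action names are hypothetical labels, not identifiers from any real platform, and the “skip what was already sent” check is an assumed safeguard against double-sending.

```python
# Hypothetical event and action names, mirroring the two journeys above.
TRIGGERS = {
    "visited_pricing_page": ["retargeting_ad", "compare_options_email"],
    "downloaded_guide": ["personalized_site_banner", "nurture_sequence"],
}


def actions_for(event: str, already_sent: set[str]) -> list[str]:
    """Return the actions to fire for an event, skipping ones already sent."""
    return [a for a in TRIGGERS.get(event, []) if a not in already_sent]


print(actions_for("visited_pricing_page", set()))
print(actions_for("downloaded_guide", {"nurture_sequence"}))
```

Keeping the mapping in one table makes the whole journey reviewable at a glance, which matters more than cleverness in a pilot.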

The imperfect moment I learned the hard way

I once called a pilot “done” after two weeks because early numbers looked great. That was a mistake. The real test is at least one full buying cycle, so you can see lead quality, sales follow-up, and revenue impact—not just clicks.

6) Scale-Up: Autonomous Campaign Optimization With Guardrails

Once my pilot proves it can lift results without breaking trust, I don’t “turn on AI everywhere.” I scale in layers: first I add more segments, then I expand to more channels, and only then do I allow more autonomy. Scaling one layer at a time keeps the rollout messy but safe, because each layer gives me new data, new edge cases, and new chances to tighten the rules.

Where autonomous optimization helps (and where it doesn’t)

AI shines when the job is fast, repetitive, and measurable. I let it handle bidding, budget pacing, and variant rotation across ads and emails, because those decisions benefit from constant testing and quick adjustment. But I keep a human in the loop for anything that can create brand or legal risk. That means humans approve claims, tone, and targeting exclusions (like sensitive categories, competitor terms, or audiences we never want to reach). In practice, I treat AI like an optimizer, not a spokesperson.
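The split between “AI may adjust” and “a human must approve” can be written down as a routing rule. This is a minimal sketch under assumptions: the change types, the 20% cap on automatic adjustments, and all names are hypothetical, not taken from any ad platform.

```python
from dataclasses import dataclass

# Assumed policy: these change types are safe to automate within a cap.
AUTO_APPROVED = {"bid", "budget_pacing", "variant_rotation"}
MAX_AUTO_CHANGE = 0.20  # hypothetical cap: at most a 20% move per adjustment


@dataclass
class ProposedChange:
    kind: str                     # e.g. "bid", "claim", "tone", "targeting_exclusion"
    relative_change: float = 0.0  # +0.10 means a 10% increase


def route(change: ProposedChange) -> str:
    """Send a proposed change to auto-apply or to a human reviewer."""
    if change.kind not in AUTO_APPROVED:
        return "human_review"  # claims, tone, exclusions: humans own meaning
    if abs(change.relative_change) > MAX_AUTO_CHANGE:
        return "human_review"  # large swings get a second look
    return "auto_apply"


print(route(ProposedChange("bid", 0.10)))
print(route(ProposedChange("claim")))
```

The allow-list is deliberate: anything not explicitly marked safe defaults to human review, so a new change type cannot slip through unexamined.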

Personalization at scale without the “creepy” factor

As I scale personalization, I aim for helpful context, not surveillance vibes. I’m comfortable using signals like the page someone is on, the product category they browsed, or their lifecycle stage. I avoid messaging that implies we know too much, even if we technically do. If a line would make a customer say, “How did they know that?” I rewrite it. The goal is relevance that feels like good service, not being watched.

Governance: the guardrails that make autonomy possible

To scale safely, I build a simple governance framework: clear approval flows for new templates and offers, model monitoring for drift and odd spikes, an incident response plan for mistakes, and vendor accountability for data handling and model behavior. I also document what the system can and cannot do, so “autonomous” never means “unowned.”
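“Monitoring for odd spikes” can start very simply. The sketch below flags a metric value that sits far from its recent history using a z-score; the threshold of 3 and the sample CTR values are assumptions, and a real setup would likely want seasonality-aware checks on top.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, z_threshold=3.0):
    """Flag `latest` if it is more than z_threshold standard deviations
    away from the mean of `history`."""
    if len(history) < 2:
        raise ValueError("need at least two historical points")
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        # Flat history: any different value counts as a deviation.
        return latest != mu
    return abs(latest - mu) / sigma > z_threshold


# Hypothetical daily CTR values for one campaign.
daily_ctr = [0.021, 0.019, 0.020, 0.022, 0.020, 0.018, 0.021]
print(is_anomalous(daily_ctr, 0.045))  # a sudden jump
print(is_anomalous(daily_ctr, 0.021))  # a normal day
```

A flag like this should open a ticket for a human, not trigger an automatic rollback; the goal is to make sure someone looks.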

Training the team to think like analysts

Finally, I train marketers to read model outputs the way we read analytics—skeptically, but constructively. We ask: What changed? What’s the evidence? What’s the risk? That mindset is my real scale-up strategy, because tools evolve, but good judgment is what keeps AI marketing strategy effective over time.

TL;DR: Start with 1–2 low-risk, high-impact AI marketing tools (think lead scoring and content creation). Fix data quality, run a tight pilot, measure ROI over 6–12 months, then scale with governance, human review, and cross-functional support—especially for real-time personalization and autonomous campaign optimization.
